I was invited to Microsoft HQ in the UK yesterday to be a speaker at one of their launch events for SQL Server 2012. It’s the second of these events that I’ve appeared at and it finally made me realise I need to change something about this blog.

Until now I have resisted making any critical remarks about the Oracle Exadata product here, other than to quote the facts as part of my History of Exadata series. I’m going to change that now by offering my own opinions on the product and Oracle’s strategy around selling it.

Before I do that I should establish my credentials and declare any bias I may have. For a number of years, until very recently in fact, I was an employee of Oracle Corporation in the UK where I worked in Advanced Customer Services. I began working with Exadata upon the release of the “v2” Sun Oracle Database Machine and at the time of the “X2” I was the UK Team Lead for Exadata. I personally installed and supported Exadata machines in the UK and also trained a number of the current Exadata engineers in ACS (although that wasn’t exactly difficult as all of the ACS engineers I know are excellent). I also used to train the sales and delivery management communities on Exadata using my trademarked “coloured balls” presentation (you had to be there).

I now work for Violin Memory, a company that (to a degree) competes with Oracle Exadata. Exadata is a database appliance, whilst Violin Memory make flash memory arrays… so that doesn’t immediately sound like a true competition. But I’ll let you into a little secret: Exadata isn’t a database appliance at all – it’s an application acceleration product. That’s what it does: it takes applications which businesses rely on and makes them run faster. And in fact that’s also exactly what Violin is – it’s an application acceleration product that just happens to look like a storage array.

So now we have everything out in the open I’m going to talk about my issue with Exadata – and you can read this keeping in mind that everything I say is tainted by the fact that I have an interest in making Violin products look better than Oracle’s. I can’t help that, I’m not going to quit my exciting new job just to gain some journalistic integrity…

There are a number of critiques of Exadata out there on the web, ranging from technical discussions (the best of which are Kevin Closson’s Critical Analysis videos) to stories about the endless #PatchMadness from Exadata DBAs on Twitter. My main issue is much more fundamental:

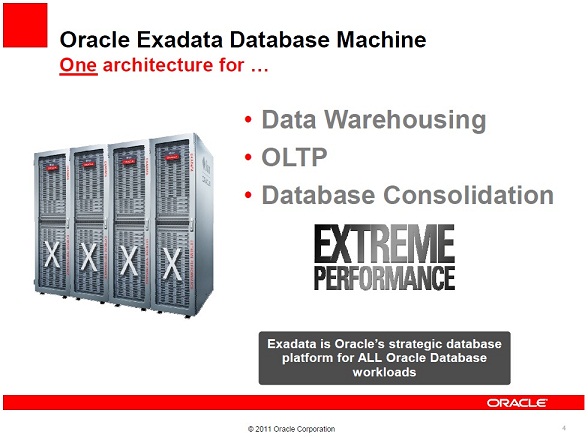

Oracle now say that Exadata is the strategic database platform for ALL database workloads. This was not always the case. If you read my History of Exadata piece you will see that when the original v1 HP Oracle Database Machine was released, “Exadata” was the name of the storage servers. And those storage servers were, in Oracle’s own words, “Designed for Oracle Data Warehouses”.

Upon the release of the v2 Sun Oracle Database Machine there came an epiphany at Oracle: the realisation that flash technology was essential for performance (don’t forget I’m biased). This was great news for Violin as back in those days (this was 2009) flash was still an emerging technology. However, the Sun F20 Accelerator cards that were added to the v2 were (in my biased opinion) pretty old tech and Oracle was only able to use them as a read cache. That didn’t stop Oracle’s marketing department (never one to hold back on a bold claim) from making the statement that the v2 was “The First Database Machine For OLTP”. We are now on the X2 model (really only a minor upgrade in CPU and RAM from the v2) and Oracle has added Database Consolidation to the list of things that Exadata does. And of course the new bold claim has now appeared, as in the image above: “Exadata is Oracle’s strategic database platform for ALL database workloads”. Sure, the X2 now comes in two models, the X2-2 and the X2-8, but they aren’t actually different in terms of the features that you get above a normal Oracle database… you still get the same Exadata storage, Hybrid Columnar Compression and Exadata Flash Cache features regardless of the model.

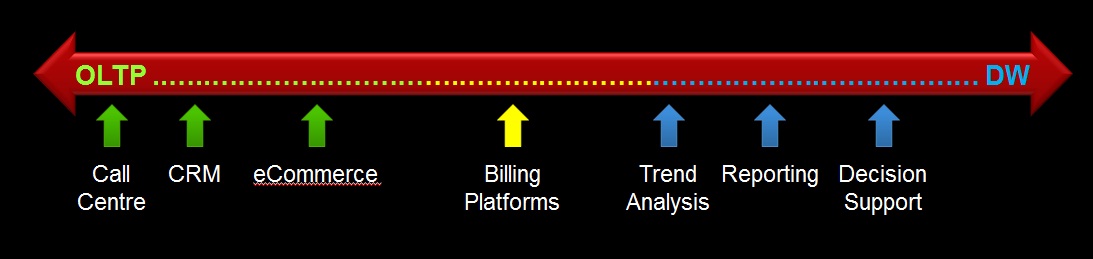

So what’s my problem with this? Well, first of all let’s just think about what a workload is. Essentially you can define the workload of a database by the behaviour of its users. There are two main types of workload in the database world: Online Transaction Processing (OLTP) and Data Warehousing (DW). OLTP systems tend to have highly transactional workloads, with many users concurrently querying and changing small amounts of data. Conversely, DW systems tend to have a smaller number of power users who query vast amounts of data, performing sorts and aggregations. OLTP systems experience huge amounts of change throughout their working period (e.g. 9am-5pm for a national system, 24×7 for a global system). DW systems on the other hand tend to remain relatively static except during ETL windows when massive amounts of data are loaded or changed.

In fact, you can pretty much picture any workload as fitting somewhere on a scale between these two extremes:

Of course, this is a sweeping generalisation. In practice no system is purely OLTP or purely DW. Some systems have windows during which different types of workload occur. Consolidation systems make things even more complicated because you can have multiple concurrent workloads taking place.

There’s a point to all this though. Take a random selection of real life databases and look at their workloads. If you agree with my OLTP <> DW scale above then you will see that they all fit in different places. Maybe you don’t agree with it though and you think there are actually many more dimensions to consider… no matter. What we should all be able to agree on is this:

In the real world, different databases have different workloads.

And if we can agree on that then perhaps we can also agree on this:

Different workloads will have different requirements.

That’s simple logic. And to extend that simple logic just one more step:

One design cannot possibly be optimal for many different requirements.

And that’s my problem with Oracle’s strategy around selling Exadata. We all know that it was originally designed as a data warehouse solution. Although I defer to Kevin’s knowledge about the drawbacks of an asymmetric shared-nothing MPP design, I always thought that Exadata was an excellent DW product and something that (at the time) seemed like an evolutionary step forward (although I now believe that flash memory arrays are a revolutionary step forward that make that evolution obsolete – keep remembering that I’m biased though). But it simply cannot be the best solution for everything because that doesn’t make sense. You don’t need to be technical to get that, you don’t even need to be in IT.

Let’s say I wanted to drive from town A to town B as fast as I can. I’d choose a Ferrari, right? That’s my OLTP requirement. Now let’s say I wanted to tow a caravan from A to B: I’d need a 4×4 or something with serious towing ability – definitely not a Ferrari. There’s my DW requirement. Now I need to transport 100 people from A to B. I guess I’d need a coach. That’s my Database Consolidation requirement. There is no single solution which is optimal for all requirements, only a set of solutions which are better at some and worse at others.

A final note on this subject. The Microsoft event at which I spoke was about Redmond’s new set of database appliances: the Database Consolidation Appliance, the Parallel Data Warehouse, and the Business Decision Appliance. Microsoft have been lagging behind Oracle in the world of appliances but I believe that they have made a wise choice here in offering multiple solutions based on customer workload. And they are not the only ones to think this. Look at this document from Bloor comparing IBM and Exadata:

“Oracle’s view of these two sets of requirements is that a single solution, Oracle Exadata, is ideal to cover both of them; even though, in our view (and we don’t think Oracle would disagree), the demands of the two environments are very different. IBM’s attitude, by way of contrast, is that you need a different focus for each of these areas and thus it offers the IBM pureScale Application System for OLTP environments and IBM Smart Analytics Systems for data warehousing.”

Now… no matter how biased you think I am… Maybe it’s time to consider if this strategy of Oracle’s really makes sense?

Hello,

I guess it depends on the point of view. In my experience, quite a few systems fall into the mixed workload category. Nowadays I rarely see a pure OLTP system (not that those do not exist out there, just that my experience is mostly with custom systems rather than “out of the box” apps). So from an implementation point of view (data consolidation, data sharing, etc.) I think having a single platform to use is a plus, not a minus. With specialised workload architectures (one type of database/architecture for OLTP and another for DW), having to “split” my system into two parts is something I consider a negative (support, data replication when needed, etc.), so the idea of a single platform which covers the spectrum, like Exadata, is appealing to me at least. For pure DW, Exadata has Smart Scans and so on; for read IOPS it has the flash cache, all connected by InfiniBand. A similar thing also appeals to me in theory with Violin (if I look for a pure storage solution, i.e. no Smart Scans or similar technologies from the database vendors): a single high-performing array on which I can run a mixed-workload database (that is based on white papers etc.; I do not have real experience with Violin). So I believe Oracle’s idea of a single system covering most workload requirements (except, I guess, very write-intensive OLTP systems) is actually a good thing.

Regards,

Alex

Hi Alex

OK I take your point about how having a single platform is great from an operational and support point of view. But that single platform, in this example, costs $1.6m just for the storage licenses (for a full rack). That’s a large amount of money to spend unless it’s worthwhile – and in the case of many workloads it isn’t worthwhile. The storage licenses are what make Exadata something other than a bunch of Sun commodity servers pre-racked; they give you Smart Scan, Hybrid Columnar Compression and the Exadata Smart Flash Cache. But Smart Scan is a sequential IO feature, it isn’t relevant in OLTP and is unlikely to help you out in a consolidation environment (since the more databases you consolidate, the more random the IO becomes). Likewise HCC is to be absolutely avoided in an OLTP environment where data is changing. The Exadata Smart Flash Cache is intended to be an OLTP feature but it doesn’t accelerate writes, only reads (and OLTP has a much higher ratio of writes to reads than other workloads).
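To make that feature-by-feature argument concrete, here is a minimal Python sketch. The mapping simply encodes the opinions in the paragraph above (it is not taken from Oracle documentation), and the names `FEATURE_FIT` and `useful_features` are made up for illustration. Consolidation is left without any matching feature because the paragraph names none that helps it, which is a simplification.

```python
# Which workloads each Exadata storage feature helps, as characterised in the
# paragraph above. This is the author's opinion, not an official feature matrix.
FEATURE_FIT = {
    "Smart Scan": {"DW"},                    # sequential IO feature
    "Hybrid Columnar Compression": {"DW"},   # to be avoided where data changes
    "Exadata Smart Flash Cache": {"OLTP"},   # accelerates reads only
}


def useful_features(workload: str) -> list[str]:
    """Return the features that, on this reading, add value for a workload."""
    return sorted(name for name, fits in FEATURE_FIT.items() if workload in fits)


if __name__ == "__main__":
    for workload in ("OLTP", "DW", "Consolidation"):
        print(workload, "->", useful_features(workload) or "none")
```

On this reading the storage license price is the same whichever row applies, while the number of features you can actually use varies by workload.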

So the point I’m making is that selling one solution for all problems doesn’t make sense. Sure you are right in that having one solution certainly keeps things simple, but if that solution is not cost effective then that argument is void. In fact if you are paying large amounts of money for features you will never be able to use then that’s a serious flaw.

And if Oracle is positioning this expensive solution to fit workloads where it doesn’t actually add any value then that, in my opinion, is a bad thing.

Hello,

I tend to see quite a bit more flexible pricing around than that quoted 🙂. When you think about it, the storage vendors are doing exactly the same thing, no? In Violin’s case, isn’t VMA a single platform targeting all of OLTP, mixed and DW workloads?

Regards,

Alex

That pricing is going to have to be pretty flexible to make it worthwhile paying for features you don’t use, surely?

I like your argument about VMA, you nearly had me there 🙂 But Violin offer flexibility in the choice of flash memory array. We have the 3000 and 6000 series of arrays; we have SLC (performance flash) and MLC (capacity flash) with different performance characteristics; we have multiple different products within each range; and we have a host of connectivity options to boot.

But most of all, you can run anything on Violin. You aren’t restricted to 11gR2 with ASM on Linux. In fact you aren’t even restricted to Oracle. With Exadata you have all the choices made for you up front, there is no flexibility and no range.

And I am aware that Exadata comes in the two flavours of X2-2 and X2-8 – but I don’t buy that they apply to different workloads. At the end of the day Exadata is all about Smart Scan, HCC and Flash Cache. Two of those features only apply to data warehousing and the third is a sub-optimal implementation of flash that a whole chorus of people on the web have already discussed.

Alex I like the way you challenge my arguments, it’s debate like this that makes it worth talking about. But I can’t agree that Oracle’s decision to promote Exadata as the single solution to all problems is anything other than wrong. For me it is a simple failure of logic, there cannot be one solution to multiple and diverse requirements.

In light of 21st century alternatives I fail to see how Exadata can be called a consolidation platform. Sure, you can pack a lot of little databases into an 8-node RAC cluster but that has always been the case? Consider the fact that IBM’s xSeries customers were offered a platform solution back in 2004 that enabled consolidation of 60 (more actually) Oracle databases in a single namespace, easily managed RAC environment: ftp://ftp.software.ibm.com/eserver/benchmarks/FDC_BC_CompAnalysis_Dec2004.pdf

I think the 21st century offers better for consolidation, namely VMware especially embodied in VCE Vblock. But that is just my opinion.

On another point, this comment thread leans heavily on the idea that HCC is an Exadata feature. It is not. It is a generic Oracle Database 11g feature dating back to its time in Beta.

Oracle put in discovery code that shut off the functionality for all but Exadata storage back in 2009. Since then a lot has transpired regarding this particular *generic* (not separately licensed) database feature. Oracle issued patch 13041324 which enables HCC usage on standards-based storage via dNFS. However, since Oracle sells NFS filers they subsequently issued patch 13362079 to take the generic feature away from paid licensees unless, of course, the customer adds the cost of Oracle standards-based storage (e.g., ZFSSA, Pillar) to their existing Oracle spend.

Please don’t be confused, there is no offload processing in ZFSSA or Pillar. The patches serve as a thin veneer to force Oracle’s storage on the customer. If Oracle customers aren’t upset at that situation then neither am I. I find that many customers are unaware of what they are paying for already. Indeed, Oracle is a huge product and customers are paying for features they don’t use all the time because said features might not fit their use case. Everyone would probably get pretty upset, however, if the COMMIT statement only worked on Oracle storage in the 12c time frame 🙂

If you’ll allow I’d like to offer the following material on HCC storage discovery as it pertains to these patches:

http://oracleprof.blogspot.com/2011/11/dnfs-configuration-and-hybrid-column.html

http://oracleprof.blogspot.com/search/label/HCC

Hello,

I guess we will agree to disagree on our views of Exadata 🙂. You mentioned something quite interesting to me (I have no experience with Violin, so sorry if it is a dumb question): “We have the 3000 and 6000 series of arrays; we have SLC (performance flash) and MLC (capacity flash) with different performance characteristics”. So are we saying that we should use one array for OLTP and a different type of array for a DW workload? What about mixed workloads (I tend to get those a lot 🙂)? Or did I just misunderstand your point? Thanks

Regards,

Alex

Hello Kevin,

I was not talking about many little databases (not my thing really); I was talking about a mixed workload in the same “biggish” database. Yup, I know about dNFS and ZFSSA and I find both quite good in real life, and I know that there is no offloading with HCC outside of Exadata. BTW congrats on SLOB, a very cool tool to measure IOPS… and come on, can we finally see “Thunder” 🙂

Regards,

Alex

Hi Alex,

I’m glad you find SLOB useful/helpful. As for when “we” will “see” Thunder, uh, well, I can practically hear it since I have lab work pounding the daylights out of it! 🙂