In the first part of this blog series on In Memory Databases (IMDBs) I talked about the definition of “memory” and found it surprisingly hard to pin down. There was no doubt that Dynamic Random Access Memory (DRAM), such as that found in most modern computers, fell into the category of memory whilst disk clearly did not. The medium which caused the problem was NAND flash memory, such as that found in consumer devices like smart phones, tablets and USB sticks or enterprise storage like the flash memory arrays made by my employer Violin Memory.

There is no doubt in my mind that flash memory is a type of memory – otherwise we would have to have a good think about the way it was named. My doubts are along different lines: if a database is running on flash memory, can it be described as an IMDB? After all, if the answer is yes then any database running on Violin Memory is an In Memory Database, right?

What Is An In Memory Database?

As always let’s start with the stupid questions. What does an IMDB do that a non-IMDB database does not do? If I install a regular Oracle database (for example) it will have a System Global Area (SGA) and a Program Global Area (PGA), both of which are areas set aside in volatile DRAM in order to contain cached copies of data blocks, SQL cursors and sorting or hashing areas. Surely that’s “in-memory” in anyone’s definition? So what is the difference between that and, for example, Oracle TimesTen or SAP HANA?

Let’s see if the Oracle TimesTen documentation can help us:

“Oracle TimesTen In-Memory Database operates on databases that fit entirely in physical memory”

That’s a good start. So with an IMDB, the whole dataset fits entirely in physical memory. I’m going to take that sentence and call it the first of our fundamental statements about IMDBs:

IMDB Fundamental Requirement #1:

In Memory Databases fit entirely in physical memory.

But if I go back to my Oracle database and ensure that all of the data fits into the buffer cache, surely that is now an In Memory Database?

Maybe an IMDB is one which has no physical files? Of course that cannot be true, because memory is (or can be) volatile, so some sort of persistent layer is required if the data is to be retained in the event of a power loss. Just like a “normal” database, IMDBs still have to have datafiles and transaction logs located on persistent storage somewhere (both TimesTen and SAP HANA have checkpoint and transaction logs located on filesystems).

So hold on, I’m getting dangerously close to the conclusion that an IMDB is simply a normal DB which cannot grow beyond the size of the chunk of memory it has been allocated. What’s the big deal, why would I want that over say a standard RDBMS?

Why is an In-Memory Database Fast?

Actually that question is not complete, but long questions do not make good section headers. The question really is: why is an In Memory Database faster than a standard database whose dataset is entirely located in memory?

Back to our new friend the Oracle TimesTen documentation, with the perfectly-entitled section “Why is Oracle TimesTen In-Memory Database fast?”:

“Even when a disk-based RDBMS has been configured to hold all of its data in main memory, its performance is hobbled by assumptions of disk-based data residency. These assumptions cannot be easily reversed because they are hard-coded in processing logic, indexing schemes, and data access mechanisms.

TimesTen is designed with the knowledge that data resides in main memory and can take more direct routes to data, reducing the length of the code path and simplifying algorithms and structure.”

This is more like it. So an IMDB is faster than a non-IMDB because there is less code necessary to manipulate data. I can buy into that idea. Let’s call that the second fundamental statement about IMDBs:

IMDB Fundamental Requirement #2:

In Memory Databases are fast because they do not have complex code paths for dealing with data located on storage.
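To make the idea of a shorter code path a little more concrete, here is a toy Python sketch. It is not how TimesTen, HANA or Oracle actually work: the class names, the block size and the dictionary standing in for storage are all assumptions invented for illustration. It simply contrasts a lookup that must first ask "is this block cached?" with one that assumes the row is already in memory.

```python
# Toy illustration only: all names here (BufferCachedTable, InMemoryTable,
# ROWS_PER_BLOCK) are hypothetical and do not reflect any real database engine.

ROWS_PER_BLOCK = 4  # assumed block size, purely for the example


class BufferCachedTable:
    """Disk-style design: rows live in blocks on 'storage', fronted by a cache."""

    def __init__(self, rows):
        # Simulated persistent storage: block number -> {row_id: value}
        self.storage = {}
        for row_id, value in rows.items():
            self.storage.setdefault(row_id // ROWS_PER_BLOCK, {})[row_id] = value
        self.cache = {}          # block number -> block contents
        self.physical_reads = 0  # how often we had to go to 'storage'

    def get(self, row_id):
        block_no = row_id // ROWS_PER_BLOCK       # 1. work out which block holds the row
        block = self.cache.get(block_no)          # 2. is the block already cached?
        if block is None:
            block = dict(self.storage[block_no])  # 3. no: read (copy) it from storage
            self.cache[block_no] = block          # 4. and place it in the cache
            self.physical_reads += 1
        return block[row_id]                      # 5. finally locate the row in the block


class InMemoryTable:
    """IMDB-style design: the row is assumed to be in memory, so just fetch it."""

    def __init__(self, rows):
        self.rows = dict(rows)

    def get(self, row_id):
        return self.rows[row_id]


rows = {i: f"value-{i}" for i in range(10)}
cached = BufferCachedTable(rows)
in_memory = InMemoryTable(rows)

# Both designs return the same answer...
same_answer = cached.get(5) == in_memory.get(5)
# ...but the cached design had to take the extra branch through 'storage' once.
reads_after_first = cached.physical_reads
cached.get(6)  # same block as row 5, so this one is served from the cache
reads_after_second = cached.physical_reads
```

Even when every block is already cached, the first design still pays for steps 1, 2 and 5 on every access, which is the kind of overhead the TimesTen documentation is describing.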

I think this is probably a sufficient definition for an IMDB now. So next let’s have a look at the different implementations of “IMDBs” available today and the claims made by the vendors.

Is My Database An In Memory Database?

Any vendor can claim to have a database which runs in memory, but how many can claim that theirs is an In Memory Database? Let’s have a look at some candidates and subject them to analysis against our IMDB fundamentals.

1. Database Running in DRAM – e.g. SAP HANA

I have no experience of Oracle TimesTen but I have been working with SAP HANA recently so I’m picking that as the example. In my opinion, HANA (or NewDB as it was previously known) is a very exciting database product – not especially because of the In Memory claims, but because it was written from the ground up in an effort to ignore previous assumptions of how an RDBMS should work. In contrast, alternative RDBMS such as Oracle, SQL Server and DB2 have been around for decades and were designed with assumptions which may no longer be true – the obvious one being that storage runs at the speed of disk.

The HANA database runs entirely in DRAM on Intel x86 processors running SUSE Linux. It has a persistent layer on storage (using a filesystem) for checkpoint and transaction logs, but all data is stored in DRAM along with an additional allocation of memory for hashing, sorting and other work area stuff. There are no code paths intended to decide if a data block is in memory or on disk because all data is in memory. Does HANA meet our definition of an IMDB? Absolutely.

What are the challenges for databases running in DRAM? One of the main ones is scalability. If you impose a restriction that all data must be located in DRAM then the amount of DRAM available is clearly going to be important. Adding more DRAM to a server is far more intrusive than adding more storage, plus servers only have a limited number of locations on the system bus where additional memory can be attached. Price is important, because DRAM is far more expensive than storage media such as disk or flash. High Availability is also a key consideration, because data stored in memory will be lost when the power goes off. Since DRAM cannot be shared amongst servers in the same way as networked storage, any multiple-node high availability solution has to have some sort of cache coherence software in place, which increases the complexity and moves the IMDB away from the goal of IMDB Fundamental #2.

Going back to HANA, SAP have implemented the ability to scale up (adding more DRAM – despite Larry’s claims to the contrary, you can already buy a 100TB HANA database system from IBM) as well as to scale out by adding multiple nodes to form a cluster. It is going to be fascinating to see how the Oracle vs SAP HANA battle unfolds. At the moment 70% of SAP customers are running on Oracle – I would expect this number to fall significantly over the next few years.

2. Database Running on Flash Memory – e.g. on Violin Memory

Now this could be any database, from Oracle through SQL Server to PostgreSQL. It doesn’t have to be Violin Memory flash either, but this is my blog so I get to choose. The point is that we are talking about a database product which keeps data on storage as well as in memory, therefore requiring more complex code paths to locate and manage that data.

The use of flash memory means that storage access times are many orders of magnitude faster than disk, resulting in exceptional performance. Take a look at recent server benchmark results and you will see that Cisco, Oracle, IBM, HP and VMware have all been using Violin Memory flash memory arrays to set new records. This is fast stuff. But does a (normal) database running on flash memory meet our fundamental requirements to make it an IMDB?

First there is the idea of whether it is “memory”. As we saw before this is not such a simple question to answer. Some of us (I’m looking at you Kevin) would argue that if you cannot use memory functions to access and manipulate it then it is not memory. Others might argue that flash is a type of memory accessed using storage protocols in order to gain the advantages that come with shared storage, such as redundancy, resilience and high availability.

Luckily the whole question is irrelevant because of our second fundamental requirement, which is that the database software does not have complex code paths for dealing with blocks located on storage. A conventional database such as Oracle still has those code paths, whatever the storage underneath it is made of. Bingo. So running an Oracle database on flash memory does not make it an In Memory Database, it just makes it a database which runs at the speed of flash memory. That’s no bad thing – the main idea behind the creation of IMDBs was to remove the bottlenecks created by disk, so running at the speed of flash is a massive enhancement (hence those benchmarks). But using our definitions above, Oracle on flash does not equal IMDB.

On the other hand, running HANA or some other IMDB on flash memory clearly has some extra benefits because the checkpoint and transaction logs will be less of a bottleneck if they write data to flash than if they were writing to disk. So in summary, the use of flash is not the key issue, it’s the way the database software is written that makes the difference.

3. Database Accessing Remote DRAM and Flash Memory: Oracle Exadata X3

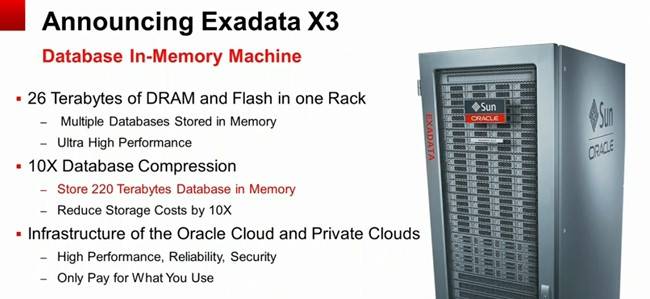

Why am I talking about Oracle Exadata now? Because at the recent Oracle OpenWorld a new version of Exadata was announced, with a new name: the Oracle Exadata X3 Database In-Memory Machine. Regular readers of my blog will know that I like to keep track of Oracle’s rebranding schemes to monitor how the Exadata product is being marketed, and this is yet another significant renaming of the product.

According to the press release, “[Exadata] can store up to hundreds of Terabytes of compressed user data in Flash and RAM memory, virtually eliminating the performance overhead of reads and writes to slow disk drives”. Now that’s a brave claim, although to be fair Oracle is at least acknowledging that this is “Flash and RAM memory”. On the other hand, what’s this about “hundreds of Terabytes of compressed user data”? Here’s the slide from the announcement, with the important bit helpfully highlighted in red (by Oracle not me):

Also note the “26 Terabytes of DRAM and Flash in one Rack” line. Where is that DRAM and Flash? After all, each database server in an Exadata X3-2 has only 128GB DRAM (upgradeable to 256GB) and zero flash. The answer is that it’s on the storage grid, with each storage cell (there are 14 in a full rack) containing 1.6TB flash and 64GB DRAM. But the database servers cannot directly address this as physical memory or block storage. It is remote memory, accessed over Infiniband with all the overhead of IPC, iDB, RDS and Infiniband ZDP. Does this make Exadata X3 an In Memory Database?

I don’t see how it can. The first of our fundamental requirements was that the database should fit entirely in memory. Exadata X3 does not meet this requirement, because data is still stored on disk. The DRAM and Flash in the storage cells are only levels of cache – at no point will you have your entire dataset contained only in the DRAM and Flash*, otherwise it would be pretty pointless paying for the 168 disks in a full rack – even more so because Oracle Exadata Storage Licenses are required on a per disk basis, so if you weren’t using those disks you’d feel pretty hard done by.

[*see comments section below for corrections to this statement]
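For reference, the storage grid figures quoted earlier (14 cells, each with 1.6TB flash and 64GB DRAM) can be totalled with a few lines of Python. The per-cell numbers and the cell count come from the text; treating 1TB as 1,000GB is my assumption, and the variable names are only there for illustration.

```python
# Figures taken from the post; decimal units (1 TB = 1000 GB) are an assumption.
STORAGE_CELLS = 14          # storage cells in a full rack
FLASH_PER_CELL_GB = 1600    # 1.6TB flash per cell
DRAM_PER_CELL_GB = 64       # 64GB DRAM per cell

grid_flash_gb = STORAGE_CELLS * FLASH_PER_CELL_GB
grid_dram_gb = STORAGE_CELLS * DRAM_PER_CELL_GB

print(f"Flash in storage grid: {grid_flash_gb / 1000:.1f} TB")
print(f"DRAM in storage grid:  {grid_dram_gb} GB")
```

In other words, the bulk of the headline figure is the roughly 22TB of flash sitting in the storage cells, not anything the database servers can address as local memory.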

But let’s forget about that for a minute and turn our attention to the second fundamental requirement, which is that the database is fast because it does not have complex code paths designed to manage data located either in memory or on disk. The press release for Exadata X3 says:

“The Oracle Exadata X3 Database In-Memory Machine implements a mass memory hierarchy that automatically moves all active data into Flash and RAM memory, while keeping less active data on low-cost disks”

This is more complexity… more code paths to handle data, not less. Exadata is managing data based on its usage rate, moving it around in multiple different levels of memory and storage (local DRAM, remote DRAM, remote flash and remote disks). Most of this memory and storage is remote to the database processes representing the end users and thus it incurs network and communication overheads. What’s more, to compound that story, the slide up above is talking about compressed data, so that now has to be uncompressed before being made available to the end user, navigating additional code paths and incurring further overhead. If you then add the even more complicated code associated with Oracle RAC (my feelings on which can be found here) the result is a multi-layered nest of software complexity which stores data in many different places.

Draw your own conclusions, but in my opinion Exadata X3 does not meet either of our requirements to be defined as an In Memory Database.

Conclusion

“In Memory” is a buzzword which can be used to describe a multitude of technologies, some of which fit the description better than others. Flash memory is a type of memory, but it is also still storage – whereas DRAM is memory accessed directly by the CPU. I’m perfectly happy calling flash memory a type of “memory”, even referring to it performing “at the speed of memory” as opposed to the speed of disk, but I cannot stretch to describing databases running on flash as “In Memory Databases”, because I believe that the only In Memory Databases are the ones which have been designed and written to be IMDBs from the ground up.

Anything else is just marketing…

“The HANA database runs entirely in DRAM on Intel x86 processors running SUSE Linux. ”

…doesn’t this quote say it all?

…Really. Is anyone confused by the difference between Flash accessed via libaio->Linux block layer->SCSI driver and a simple memory access (load/store) in DRAM?

…the screen shot in the following tweet shows the “effectiveness” of having data cached in flash and served up via iDB remote requests from database hosts. I encourage your readers to look at the photo. It shows ample idle CPU on both the hosts and the storage servers–and, recall, the storage servers are where the supposed “In-Memory” assets (flash) of the “new” “Database In-Memory Machine” reside.

…In the photo you’ll see storage is only delivering ~25GB/s yet idle CPU on both grids clearly marks the infiniband bottleneck for data flow from the “In-memory” Exadata assets to the hosts where SQL processing occurs (transactions, joins, agg, sort, cursor processing, etc).

…Exadata X3 has the *same* infiniband as X2. So I ask, even if we accept this ridiculous assertion that Exadata Smart Flash Cache qualifies as “Database In-Memory” who cares? In-Memory limited to 25GB/s is an absurdity.

https://twitter.com/kevinclosson/status/256802048778063872

Fantastic point as always. “In-Memory” limited by the bandwidth of an Infiniband network is patently absurd.

One wonders if the (excellent) people of Oracle Product Development are somewhat embarrassed by the claims made on their behalf by the people of Oracle Marketing?

“One wonders if the (excellent) people of Oracle Product Development are somewhat embarrassed by the claims made on their behalf by the people of Oracle Marketing?”

I know that is exactly how I felt whilst still in the Exadata development organization. I could not suffer the damage to my “personal brand” being so deeply associated with a product that quickly became the most absurdly over-marketed product Oracle ever produced. For me it all started with “The World’s First OLTP Machine.” Or should I say that was the beginning of the end for me.

How many of the original concept developers of SAGE / Exadata are left at Oracle? Rhetorical question – by asking it I’m sure the answer is obvious.

>>How many of the original concept developers of SAGE / Exadata are left at Oracle? Rhetorical question – by asking it I’m sure the answer is obvious.

…your wordpress theme doesn’t allow deeply nested comments…hmm…

…the answer to your question can be answered with information from LinkedIn so Oracle’s lawyers can slither back into their lair.

…the answer is 1. Only one of the three “concept inventors” remains. One left and worked on vFabric Data Director at VMware; the other is an executive at FusionIO. The latter is a ridiculously skilled platform engineer that I had the pleasure of working with in the mid 90s as we focused on Oracle exclusion primitive (latches) scalability on NUMA.

“The DRAM and Flash in the storage cells are only levels of cache ”

… I hate to point out that there is actually no caching done in DRAM in Exadata cells. The entirety of DRAM in cells is used for Storage Index context and buffering. The following might be helpful to your readers where the matter of cell DRAM caching (lack thereof) is concerned:

http://kevinclosson.wordpress.com/criticalthinking/#comment-39887

So, the cache model for X3 is host DRAM (2TB X3-2 and 4TB X3-8 aggregate) + the 22 TB Flash block devices in the storage grid.

Hmmm thanks for clarifying. That makes the claims even more ridiculous!

agree: to take full advantage of IM it has to be designed for IM

I think Mike Stonebraker analyzed the situation long ago (and some of his conclusions could still be valid):

http://voltdb.com/company/blog/use-main-memory-oltp

http://voltdb.com/company/blog/flash-or-not-flash-question

Thanks for the links, I hadn’t seen them before. Mike Stonebraker is a guy that knows a little bit about databases 🙂 so that’s valuable reading material.

I’m not sure that Mike makes it completely clear in the OLTP article that the source paper he is quoting is jointly authored by him. I’m not looking to criticise one of the brightest database scientists in the world, just making sure any of my readers who click through the link are aware.

I’m actually a big fan of Mike for his willingness to speak out about NoSQL and some people’s apparent eagerness to forgo ACID compliance and SQL. I’m not saying NoSQL is wrong in any way, just that sometimes it feels like organisations are adopting it because they think they should rather than because it is the correct technical choice.

But I guess that’s a post for another day.

you forgot another big player: microsoft!!

Do you know Excel? MS has evolved Excel into an IM columnar DB

The IM engine was formerly known as VertiPaq and is now called xVelocity

it is being used in MS BI products like PowerView or PowerPivot: http://www.microsoft.com/en-us/bi/powerpivot.aspx

it is also part of MS SQL 2012: http://www.microsoft.com/sqlserver/en/us/solutions-technologies/data-warehousing/in-memory.aspx

MS can (of course) also provide these impressive customer quotes stating that query time reduces from xx minutes to a few seconds or so…

Please also consider smaller vendors like EXASOL.

They offer an in memory database with EXAsolution.

Check out their website: http://www.exasol.com/en/exasolution/technical-details.html

It seems they recently introduced EXAPowerlytics, an in-database (and in-memory?) analytics language:

http://www.exasol.com/en/exasolution/exapowerlytics.html

Hmmm. What’s going on here then? Three seemingly-unrelated posts from Bolt, Ed and Steffen – all interesting, yet all from the same IP address… on a block of IP addresses owned by a certain large software vendor. To be fair, none of these posts have mentioned that vendor, so there is no suggestion of anything untoward happening – but there certainly seems to be an interesting pattern developing.

Of course I can’t reply privately to the poster or posters because all three posts gave invalid email addresses.

Welcome to the world of vetting blog comments 🙂

Very good blog! I think flashdba clearly made the point, that IMDB are different to Non-IMDB’s because “they do not have complex code paths for dealing with data located on storage”.

But I believe that the performance increase due to this fact is a bit overestimated (still it’s a good feature of course).

Actually I believe that moving a classic database completely into memory (let’s say with a DRAM cache as big as the database and SSD as persistent cache) will already deliver a big portion of the performance increase.

If then you would – just theoretically – remove the no longer required complex code paths for dealing with larger data amounts located on storage devices you would again gain some performance, but I don’t think it would be a huge gain this time.

It is worth mentioning that even IMDBs need some code to synchronize DRAM with persistent cache, but it’s less complex of course.

My point is about the specific case of HANA being used in a BI environment: the biggest performance increase in this scenario actually comes from the columnar storage.

Because databases with column storage have a far greater compression rate they bring two major advantages for BI systems at the same time:

1. Much less I/O: one I/O request will read much more data from storage than classical row oriented databases.

2. Because the db size is much smaller customers might now afford to buy as much memory as the database size. And this is where in-memory databases come into play and further increase performance.

Because Hana has both features – columnar storage and IMDB coding – I think it is a very good database for BI environments. But it’s not just because of in-memory technology solely.

Arne

You may be right – it’s difficult to test and know for sure. But the point about the reduced levels of code (and associated reduction in complexity) is important because it explains why an IMDB is one which must be designed as such. In the same way that (for example) rebranding BP from British Petroleum to Beyond Petroleum doesn’t actually change anything about the company, I believe that rebranding a “traditional” RDBMS to be an “In-Memory” database is meaningless if the underlying code remains the same.

Your point about the columnar storage properties of HANA is a good one – since HANA allows for both possibilities it would be interesting to experiment with the two and see what performance benefits can be realised (although obviously it would depend on the data).

Thanks for commenting!

I absolutely agree with your definition of IMDB. I didn’t want to argue about this in my comment. I think it helps to distinguish marketing from true innovation.

I didn’t take it as arguing – I took it as healthy discussion! And on the topic of marketing versus innovation I believe we are completely aligned…