Flash vendors routinely quote “effective capacity” figures based on optimistic data reduction assumptions – understanding what those numbers really mean is essential before signing a purchase order.

Category: Database Economics

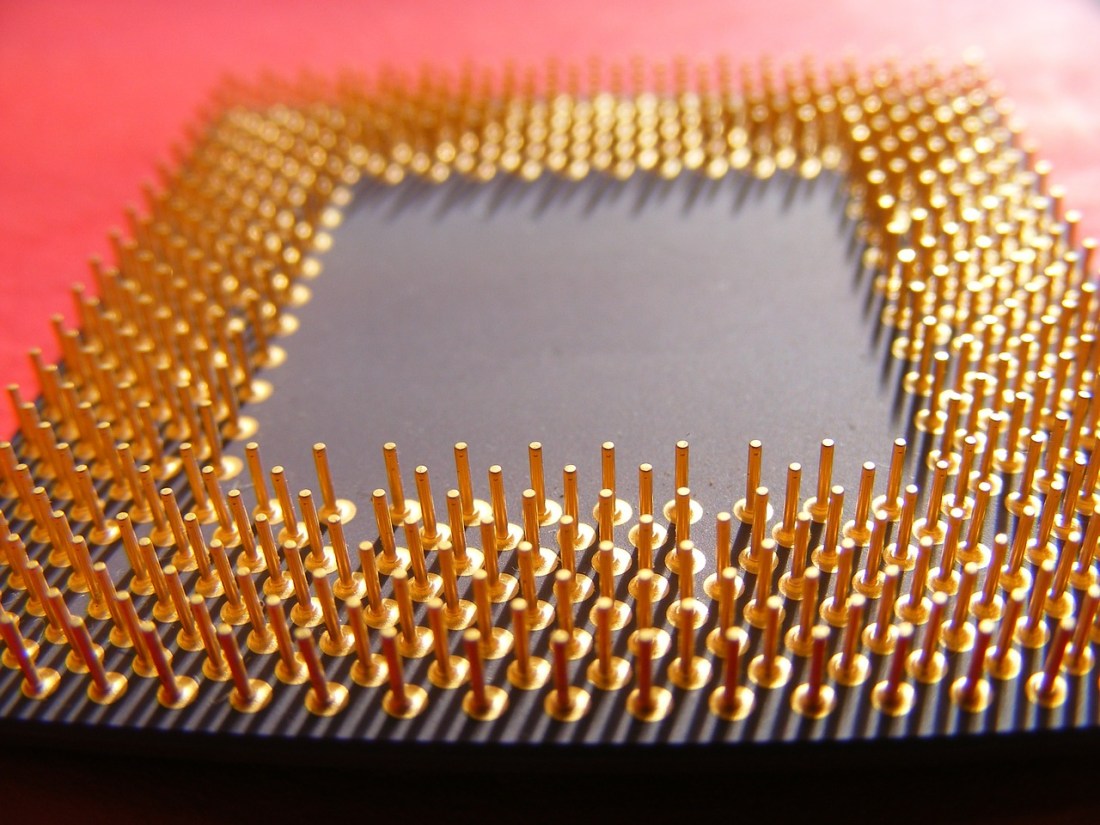

The Most Expensive CPUs You Own

Storage for DBAs: Take a look in your data centre at all those humming boxes and flashing lights. Ignore the storage and networking gear for now and just concentrate on the servers. You probably have many different models, with different types and numbers of CPUs and DRAM inside. My question is, which CPUs are the most …

The Real Cost of Oracle RAC

Oracle RAC is sold as a high-availability solution, but its real cost – in licenses, complexity and the hidden assumption that losing a node doesn’t count as an outage – is rarely made explicit.

The Real Cost of Enterprise Database Software

Oracle database licensing is eye-wateringly expensive per CPU core – and storage costs, often seen as prohibitive, represent a surprisingly small fraction of the total three-year spend.