In this cookbook I will be installing Oracle Linux 6 Update 7 on two nodes and then setting up Oracle 11.2.0.4 Real Application Clusters. I’ll be using two HP ProLiant DL360 Gen9 servers and a Kaminario K2 All Flash Array. Each server has 256GB of RAM and two CPU sockets, each populated with an 8-core Intel Xeon E5-2667 v3. Note that everything I do below happens twice: once on each of the servers dbserver01 and dbserver02.

I’ve already installed the basic configuration of Oracle Linux 6 Update 7 on each server (snappily named dbserver01 and dbserver02). I’ve also configured two network adapters on each server so that one is on a public network and one on a private network (to act as the interconnect):

[root@dbserver01 ~]# cat /etc/hosts
127.0.0.1      localhost localhost.localdomain
192.168.7.54   dbserver01.local dbserver01
192.168.7.55   dbserver02.local dbserver02
192.168.7.56   dbserver01-vip.local dbserver01-vip
192.168.7.57   dbserver02-vip.local dbserver02-vip
10.1.1.54      dbserver01-priv.local dbserver01-priv
10.1.1.55      dbserver02-priv.local dbserver02-priv
192.168.7.58   db-scan.local dbscan
192.168.7.59   db-scan.local dbscan
192.168.7.60   db-scan.local dbscan

The VIP and SCAN addresses are for use with RAC later on.
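Since the same file has to be right on both nodes, a quick check that no name has been forgotten can save some head-scratching later. The following is only a sketch of how that might be scripted: the function name missing_hosts is made up for illustration, and it only checks that each name appears in a hosts-format file, not that the address behind it is correct or reachable.

```shell
# Hypothetical helper: print every required name that does NOT appear
# as a hostname or alias in a hosts-format file.
# Usage: missing_hosts /etc/hosts name1 name2 ...
missing_hosts() {
    local file=$1; shift
    local name
    for name in "$@"; do
        # Ignore comment lines, then look for the name in fields 2..NF
        # (field 1 is the IP address).
        awk -v n="$name" '
            /^[[:space:]]*#/ { next }
            { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
            END { exit found ? 0 : 1 }
        ' "$file" || echo "$name"
    done
}
```

Running something like missing_hosts /etc/hosts dbserver01 dbserver02 dbserver01-vip dbserver02-vip dbserver01-priv dbserver02-priv dbscan on each node should print nothing if every entry is in place.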

I’m also going to disable SELinux because it only ever causes me hassle:

[root@dbserver01 ~]# vi /etc/selinux/config

I’ll change the SELINUX value from “enforcing” to “disabled”:

# This file controls the state of SELinux on the system.
# SELINUX= can take one of these three values:
#     enforcing - SELinux security policy is enforced.
#     permissive - SELinux prints warnings instead of enforcing.
#     disabled - No SELinux policy is loaded.
SELINUX=enforcing
# SELINUXTYPE= can take one of these two values:
#     targeted - Targeted processes are protected,
#     mls - Multi Level Security protection.
SELINUXTYPE=targeted
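For anyone who would rather not edit the file by hand on every node, the same change can be made non-interactively. This is a minimal sketch, assuming GNU sed (which is what Oracle Linux ships); the function name set_selinux_disabled is hypothetical, and it takes the file path as an argument so it can be tried on a copy first.

```shell
# Hypothetical helper: set SELINUX=disabled in an SELinux config file.
# Only the line starting with "SELINUX=" is rewritten; SELINUXTYPE= and
# the comment lines are left alone. Requires GNU sed for -i.
set_selinux_disabled() {
    local file=${1:-/etc/selinux/config}
    sed -i 's/^SELINUX=.*/SELINUX=disabled/' "$file"
}
```

As with the manual edit, the change only takes effect after a reboot.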

I need to reboot the system to pick up that change, but I have a few other things to do first. Like disabling the iptables firewall:

[root@dbserver01 ~]# service iptables stop
iptables: Setting chains to policy ACCEPT: filter [ OK ]
iptables: Flushing firewall rules: [ OK ]
iptables: Unloading modules: [ OK ]
[root@dbserver01 ~]# chkconfig iptables off
[root@dbserver01 ~]# chkconfig --list | grep iptables
iptables 0:off 1:off 2:off 3:off 4:off 5:off 6:off

I’m also going to configure Network Time Protocol (NTP) so that the daemon is running. Oracle RAC requires that the -x flag is added to the ntpd options in /etc/sysconfig/ntpd, which makes ntpd correct the clock by slewing it gradually rather than stepping it and so stops the time being adjusted backwards. RAC gets really unhappy if the time goes backwards.

[root@dbserver01 ~]# chkconfig --list | grep ntp
ntpd 0:off 1:off 2:off 3:off 4:off 5:off 6:off
ntpdate 0:off 1:off 2:off 3:off 4:off 5:off 6:off
[root@dbserver01 ~]# vi /etc/sysconfig/ntpd

Let’s add the -x flag:

# Drop root to id 'ntp:ntp' by default.

OPTIONS="-x -u ntp:ntp -p /var/run/ntpd.pid -g"
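If you want to script this rather than edit the file in vi, something along these lines should do it. It is only a sketch under a couple of assumptions: that the file has a single OPTIONS="..." line like the one above, and that GNU sed is available. The function name add_ntpd_slew_flag is invented for this example.

```shell
# Hypothetical helper: add -x to the OPTIONS line of an ntpd sysconfig
# file unless it is already there. Requires GNU sed for -i.
add_ntpd_slew_flag() {
    local file=${1:-/etc/sysconfig/ntpd}
    # If -x is already present as a separate word inside OPTIONS="...",
    # leave the file untouched so the function is safe to run twice.
    if grep -Eq '^OPTIONS="(.* )?-x( .*)?"' "$file"; then
        return 0
    fi
    sed -i 's/^OPTIONS="/OPTIONS="-x /' "$file"
}
```

The guard is there because this kind of step tends to get re-run when a node is rebuilt, and a second -x would just be clutter.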

And set NTPD to start automatically:

[root@dbserver01 ~]# chkconfig ntpd on
[root@dbserver01 ~]# chkconfig --list | grep ntp
ntpd 0:off 1:off 2:on 3:on 4:on 5:on 6:off
ntpdate 0:off 1:off 2:off 3:off 4:off 5:off 6:off

I should also mention that I’ve already configured 200GB of memory to use Huge Pages:

[root@dbserver02 ~]# cat /etc/sysctl.conf | grep -i huge
vm.nr_hugepages = 102400
[root@dbserver02 ~]# cat /proc/meminfo | grep -i huge
HugePages_Total: 102400
HugePages_Free: 102400
HugePages_Rsvd: 0
HugePages_Surp: 0
Hugepagesize: 2048 kB
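The 200GB figure comes from multiplying the number of pages by the page size: 102400 pages of 2048 kB each. A small sketch of that arithmetic, reading the values from a /proc/meminfo-style file; the function name hugepages_gb is hypothetical.

```shell
# Hypothetical helper: read a /proc/meminfo-style file and print the
# memory reserved for huge pages in whole GB
# (HugePages_Total x Hugepagesize, where Hugepagesize is in kB).
hugepages_gb() {
    local file=${1:-/proc/meminfo}
    awk '
        /^HugePages_Total:/ { pages = $2 }
        /^Hugepagesize:/    { size_kb = $2 }
        END { printf "%d\n", pages * size_kb / 1024 / 1024 }
    ' "$file"
}
```

With the values shown above this works out as 102400 x 2048 kB = 209715200 kB, which is 200 GB.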

Now we can reboot the server:

[root@dbserver01 ~]# shutdown -r now

The reboot takes just long enough to get me concerned that it’s never going to come back online… and then it’s ready.

Using the Oracle Preinstallation RPMs with YUM

Installing and configuring all of the relevant packages and settings in Linux used to be a pain, but for some years now Oracle has offered a great solution: the preinstallation RPMs available from its own yum repository.

Oracle Linux 6 Update 7 arrives with the yum configuration already enabled, as we can see here:

[root@dbserver01 ~]# yum repolist
Loaded plugins: security, ulninfo
repo id                   repo name                                                                   status
public_ol6_UEKR3_latest   Unbreakable Enterprise Kernel Release 3 for Oracle Linux 6Server (x86_64)   577
public_ol6_latest         Oracle Linux 6Server Latest (x86_64)                                        33,539

So all we need to do is install the relevant preinstallation package for 11gR2 or 12cR1:

[root@dbserver01 ~]# yum list oracle\*preinstall\*
Loaded plugins: security, ulninfo
Available Packages
oracle-rdbms-server-11gR2-preinstall.x86_64   1.0-12.el6   public_ol6_latest
oracle-rdbms-server-12cR1-preinstall.x86_64   1.0-14.el6   public_ol6_latest

I’m using 11g today:

[root@dbserver01 ~]# yum install oracle-rdbms-server-11gR2-preinstall -y

I’m not going to cut and paste all the output from the yum command because it’s pretty verbose.

The Oracle preinstallation package does many things, one of which is to create the Oracle user account. So we now need to set the password for that account:

[root@dbserver01 ~]# passwd oracle
Changing password for user oracle.
New password:
Retype new password:
passwd: all authentication tokens updated successfully.
[root@dbserver01 ~]# su - oracle
[oracle@dbserver01 ~]$ exit

While I’m in the mood for using yum I’ll also add a few other packages that will come in handy:

[root@dbserver01 ~]# yum install screen -y
[root@dbserver01 ~]# yum install sg3_utils -y
[root@dbserver01 ~]# yum install ftp -y
[root@dbserver01 ~]# yum install vsftpd -y

Screen is a tool that allows me to leave processes running and disconnect my session – it’s incredibly powerful. The sg3_utils package contains lots of useful SCSI utilities such as the rescan-scsi-bus.sh script. I’m installing ftp and the vsftpd daemon so that I can do simple things like transfer my Oracle installation files over to the servers later on. Note that I haven’t enabled vsftpd to run by default (because that would be a security risk), so I’ll have to start it up every time I want to use it. I’m going to use it right now to FTP over those installation files into the Oracle account, so I will start it up:

[root@dbserver01 ~]# service vsftpd start
Starting vsftpd for vsftpd: [ OK ]

I can now FTP onto the server from the remote host where I keep all of my installation files. I’ve placed them in a subfolder under the oracle user’s home directory and will now unzip them like so:

[oracle@dbserver01 ~]$ cd Install/11204/
[oracle@dbserver01 11204]$ ls -l
total 3664216
-rw-r--r-- 1 oracle oinstall 1395582860 Mar 30 14:42 p13390677_112040_Linux-x86-64_1of7.zip
-rw-r--r-- 1 oracle oinstall 1151304589 Mar 30 14:42 p13390677_112040_Linux-x86-64_2of7.zip
-rw-r--r-- 1 oracle oinstall 1205251894 Mar 30 14:43 p13390677_112040_Linux-x86-64_3of7.zip
[oracle@dbserver01 11204]$ for file in *.zip; do unzip "$file"; done

One of the files that exists within the Grid Infrastructure binaries is the cvuqdisk package, so we now need to install this on all nodes (which might mean copying it over from the source node). Here’s the installation, including the line to set the CVUQDISK_GRP environment variable to the OS group which will own it:

[root@dbserver01 ~]# ls -l ~oracle/Install/11204/grid/rpm/cvuqdisk-1.0.9-1.rpm
-rw-r--r-- 1 oracle oinstall 8288 Aug 26 2013 /home/oracle/Install/11204/grid/rpm/cvuqdisk-1.0.9-1.rpm
[root@dbserver01 ~]# CVUQDISK_GRP=oinstall; export CVUQDISK_GRP
[root@dbserver01 ~]# rpm -iv ~oracle/Install/11204/grid/rpm/cvuqdisk-1.0.9-1.rpm
Preparing packages for installation...
cvuqdisk-1.0.9-1

Another thing I need to do before the installation is create the relevant directory structures in which the various Oracle Homes will reside. By default, Oracle likes to place stuff in /u01/app/oracle but on this newly-installed system I don’t have a /u01 directory. I could just create one, but that would result in my Oracle stuff all residing in the root filesystem – and that’s not where the majority of my available capacity is:

[root@dbserver01 ~]# df -h
Filesystem            Size  Used Avail Use% Mounted on
/dev/mapper/vg_dbserver01-lv_root
                       50G  2.8G   44G   6% /
tmpfs                 126G     0  126G   0% /dev/shm
/dev/sda2             477M   78M  370M  18% /boot
/dev/sda1             200M  268K  200M   1% /boot/efi
/dev/mapper/vg_dbserver01-lv_home
                      497G  7.3G  464G   2% /home

As you can see, most of my free space is in /home. So with the help of some symbolic links I’m going to put my /u01/app directory in there (notice that I’m back as the root user now):

[root@dbserver01 ~]# ls -l /home
total 20
drwx------. 2 root root 16384 Mar 24 11:05 lost+found
drwx------ 3 oracle oinstall 4096 Mar 30 14:27 oracle
[root@dbserver01 ~]# mkdir /home/OracleHome
[root@dbserver01 ~]# chown oracle:oinstall /home/OracleHome
[root@dbserver01 ~]# mkdir /u01
[root@dbserver01 ~]# cd /u01
[root@dbserver01 u01]# ln -s /home/OracleHome app
[root@dbserver01 u01]# ls -l
total 0
lrwxrwxrwx 1 root root 16 Mar 30 14:57 app -> /home/OracleHome

The result of this is that Oracle can continue using its favoured /u01/app/ora* naming conventions but that all of its files will actually reside under /home/OracleHome.

Setting Up The SCAN Listener with DNSMASQ

Note: This section is not to be followed for production environments!

One of the more irritating requirements of Oracle RAC is the need for a Single Client Access Name (or “SCAN”). This basically means lots of messing around with DNS servers in order to create a single DNS name (such as the “db-scan” I use here) which resolves to multiple IP addresses on a round robin basis. It’s basically a cluster alias for the Oracle database.

In a production environment you would set this up as part of your resilient network infrastructure, but I’m building a test platform here and I don’t have access to our dedicated corporate DNS server. So I’m going to do what Oracle DBAs always do: I’m going to frig it.

To keep things nice and easy I’m going to use the very simple dnsmasq tool. Installation couldn’t be easier, particularly when you consider that Tim Hall has already documented it perfectly.

Download and install from yum:

[root@dbserver01 ~]# yum install dnsmasq -y

Then start it and configure it to run at boot time:

[root@dbserver01 ~]# service dnsmasq start
Starting dnsmasq: [ OK ]
[root@dbserver01 ~]# chkconfig dnsmasq on

It will now pick up the entries I placed in my /etc/hosts file earlier on – as long as I’ve configured the networking to use the local IP address as its primary DNS server, which I have:

[root@dbserver01 ~]# cat /etc/resolv.conf
search local
nameserver 192.168.7.54
nameserver 192.168.7.1

The first one is this server, which runs DNSMASQ, while the second one is the real DNS server on this network. I can check this now by running an nslookup on the SCAN address – it should resolve three different IPs:

[root@dbserver01 ~]# nslookup dbscan
Server:         192.168.7.54
Address:        192.168.7.54#53

Name:    dbscan
Address: 192.168.7.58
Name:    dbscan
Address: 192.168.7.59
Name:    dbscan
Address: 192.168.7.60

And it does – we’re ready to go.
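The same check is easy to repeat on the second node if it is scripted. Here is a rough sketch that counts the addresses returned for a name, assuming nslookup output in the format shown above; the function name count_answers is made up for this example.

```shell
# Hypothetical helper: count the addresses in the answer section of
# nslookup output read from stdin. The "Server:"/"Address:" pair at the
# top describes the DNS server itself, so counting only starts once the
# first "Name:" line has been seen.
count_answers() {
    awk '
        /^Name:/                 { in_answer = 1 }
        in_answer && /^Address:/ { n++ }
        END { print n + 0 }
    '
}
```

Used as nslookup dbscan | count_answers, anything other than 3 means the SCAN is not resolving to all three addresses.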

Installing and Configuring Device Mapper Multipath

For this installation I am using shared storage which is presented over fibre channel. As you would expect there are many paths from the server to the storage in order to provide resilience. So we need to install some sort of multipathing daemon in order to aggregate all of the duplicate paths and present them as single, virtualised paths for use with Oracle ASM. For this we will use the Linux Device Mapper Multipath daemon.

First let’s check if it is installed:

[root@dbserver01 ~]# rpm -qa device-mapper-multipath

It isn’t (there was no output), so let’s install it:

[root@dbserver01 ~]# yum install device-mapper-multipath -y

Again, the yum output is too verbose for me to bother cutting and pasting it here. Before I start up the daemon I need to create a configuration file:

[root@dbserver01 ~]# vi /etc/multipath.conf

This file will contain:

defaults {
    polling_interval 1
}
blacklist {
    devnode "^(ram|raw|loop|fd|md|dm-|sr|scd|st)[0-9]*"
}
devices {
    device {
        vendor "KMNRIO"
        product "K2"
        path_grouping_policy multibus
        getuid_callout "/lib/udev/scsi_id --whitelisted --device=/dev/%n"
        path_checker tur
        path_selector "queue-length 0"
        no_path_retry fail
        hardware_handler "0"
        rr_weight priorities
        rr_min_io 1
        failback 15
        fast_io_fail_tmo 5
        dev_loss_tmo 8
    }
}

Now I can start up the daemon and make sure it’s set to autostart:

[root@dbserver01 ~]# service multipathd start Starting multipathd daemon: [ OK ] [root@dbserver01 ~]# chkconfig multipathd on

At this point I will go away and create a number of volumes on my Kaminario K2 array then present them to the servers. I’ll also reboot the servers once this is done, to show all of the new devices. I could save myself the trouble of a reboot by running the rescan-scsi-bus.sh script and then restarting the multipathing daemon, but the reboot gives me the chance to go and make a cup of tea.

<Cup of Tea>

Ok I’m back – and, mercifully, so are my servers. Now I can see the newly-mapped volumes from my array:

[root@dbserver01 ~]# multipath -ll | egrep "KMNRIO|size"
20024f4005396015f dm-11 KMNRIO,K2
size=50G features='0' hwhandler='0' wp=rw
20024f4005396015e dm-9 KMNRIO,K2
size=50G features='0' hwhandler='0' wp=rw
20024f4005396015d dm-10 KMNRIO,K2
size=50G features='0' hwhandler='0' wp=rw
20024f40053960000 dm-2 KMNRIO,K2
size=512K features='0' hwhandler='0' wp=rw
20024f4005396015c dm-8 KMNRIO,K2
size=50G features='0' hwhandler='0' wp=rw
20024f4005396015b dm-7 KMNRIO,K2
size=50G features='0' hwhandler='0' wp=rw
20024f4005396015a dm-6 KMNRIO,K2
size=50G features='0' hwhandler='0' wp=rw
20024f40053960161 dm-13 KMNRIO,K2
size=50G features='0' hwhandler='0' wp=rw
20024f40053960160 dm-12 KMNRIO,K2
size=50G features='0' hwhandler='0' wp=rw
20024f40053960158 dm-5 KMNRIO,K2
size=512G features='0' hwhandler='0' wp=rw
20024f40053960159 dm-3 KMNRIO,K2
size=512G features='0' hwhandler='0' wp=rw

There are eight LUNs of 50GB and two more of 512GB. The eight are for my +DATA diskgroup and the two are for my +RECO diskgroup. The numbers at the start of the lines there (beginning with 2002) are the unique World Wide Identifiers (WWIDs) for the LUNs.
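Counting LUNs by eye gets tedious once there are more than a handful, so here is a small sketch that tallies them by size from the multipath -ll output. The function name count_luns_by_size is hypothetical; it simply looks for the size= field on each line.

```shell
# Hypothetical helper: tally multipath devices by size from
# `multipath -ll` output on stdin. Prints one "<size> <count>" line
# for each distinct size value.
count_luns_by_size() {
    grep -o 'size=[^ ]*' | cut -d= -f2 | sort | uniq -c | awk '{ print $2, $1 }'
}
```

Run as multipath -ll | count_luns_by_size on this system, it should report eight devices at 50G, two at 512G and the single 512K device.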

I’m now going to take the WWIDs and plug them into the multipath.conf in the “multipaths” section so I can give them meaningful aliases:

multipaths {
    multipath {
        wwid 20024f4005396015f
        alias oradata01
    }
    multipath {
        wwid 20024f4005396015e
        alias oradata02
    }
    multipath {
        wwid 20024f4005396015d
        alias oradata03
    }
    multipath {
        wwid 20024f40053960160
        alias oradata04
    }
    multipath {
        wwid 20024f4005396015c
        alias oradata05
    }
    multipath {
        wwid 20024f4005396015b
        alias oradata06
    }
    multipath {
        wwid 20024f4005396015a
        alias oradata07
    }
    multipath {
        wwid 20024f40053960161
        alias oradata08
    }
    multipath {
        wwid 20024f40053960158
        alias orareco01
    }
    multipath {
        wwid 20024f40053960159
        alias orareco02
    }
}
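Typing ten near-identical stanzas by hand is an invitation to typos, and the file has to match on both nodes. As a sketch of one way to avoid that, the function below generates the section from a list of WWID and alias pairs. The name gen_multipaths is made up for illustration and nothing here is specific to the K2.

```shell
# Hypothetical helper: read "WWID ALIAS" pairs from stdin (one per line)
# and print a multipaths { ... } section for /etc/multipath.conf.
gen_multipaths() {
    local wwid name
    echo "multipaths {"
    while read -r wwid name; do
        # Skip blank lines and incomplete pairs.
        [ -n "$wwid" ] && [ -n "$name" ] || continue
        printf '    multipath {\n        wwid  %s\n        alias %s\n    }\n' "$wwid" "$name"
    done
    echo "}"
}
```

Feeding it lines such as "20024f4005396015f oradata01" produces stanzas equivalent to the ones above; the output still needs to be reviewed and pasted into multipath.conf by hand.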

Now I force the multipath daemon to flush its devices and reexamine them based on these new aliases:

[root@dbserver01 ~]# multipath -F
[root@dbserver01 ~]# multipath -v1
20024f40053960000
orareco01
orareco02
oradata07
oradata06
oradata05
oradata03
oradata02
oradata01
oradata04
oradata08

There they are. (You can ignore the first one which still has a numeric label.) I can also see them in the /dev/mapper directory as symbolic links:

[root@dbserver01 ~]# ls -l /dev/mapper
total 0
lrwxrwxrwx 1 root root 7 Apr 1 23:05 20024f40053960000 -> ../dm-2
crw-rw---- 1 root root 10, 236 Apr 1 23:04 control
lrwxrwxrwx 1 root root 8 Apr 1 23:05 oradata01 -> ../dm-11
lrwxrwxrwx 1 root root 8 Apr 1 23:05 oradata02 -> ../dm-10
lrwxrwxrwx 1 root root 7 Apr 1 23:05 oradata03 -> ../dm-9
lrwxrwxrwx 1 root root 8 Apr 1 23:05 oradata04 -> ../dm-12
lrwxrwxrwx 1 root root 7 Apr 1 23:05 oradata05 -> ../dm-8
lrwxrwxrwx 1 root root 7 Apr 1 23:05 oradata06 -> ../dm-7
lrwxrwxrwx 1 root root 7 Apr 1 23:05 oradata07 -> ../dm-6
lrwxrwxrwx 1 root root 8 Apr 1 23:05 oradata08 -> ../dm-13
lrwxrwxrwx 1 root root 7 Apr 1 23:05 orareco01 -> ../dm-3
lrwxrwxrwx 1 root root 7 Apr 1 23:05 orareco02 -> ../dm-5
lrwxrwxrwx 1 root root 7 Apr 1 23:04 vg_dbserver01-lv_home -> ../dm-4
lrwxrwxrwx 1 root root 7 Apr 1 23:04 vg_dbserver01-lv_root -> ../dm-0
lrwxrwxrwx 1 root root 7 Apr 1 23:04 vg_dbserver01-lv_swap -> ../dm-1

Those symbolic links point to block device files in /dev:

[root@dbserver01 ~]# ls -l /dev/dm*
brw-rw---- 1 root disk 252, 0 Apr 1 23:04 /dev/dm-0
brw-rw---- 1 root disk 252, 1 Apr 1 23:04 /dev/dm-1
brw-rw---- 1 root disk 252, 10 Apr 1 23:05 /dev/dm-10
brw-rw---- 1 root disk 252, 11 Apr 1 23:05 /dev/dm-11
brw-rw---- 1 root disk 252, 12 Apr 1 23:05 /dev/dm-12
brw-rw---- 1 root disk 252, 13 Apr 1 23:05 /dev/dm-13
brw-rw---- 1 root disk 252, 2 Apr 1 23:05 /dev/dm-2
brw-rw---- 1 root disk 252, 3 Apr 1 23:05 /dev/dm-3
brw-rw---- 1 root disk 252, 4 Apr 1 23:04 /dev/dm-4
brw-rw---- 1 root disk 252, 5 Apr 1 23:05 /dev/dm-5
brw-rw---- 1 root disk 252, 6 Apr 1 23:05 /dev/dm-6
brw-rw---- 1 root disk 252, 7 Apr 1 23:05 /dev/dm-7
brw-rw---- 1 root disk 252, 8 Apr 1 23:05 /dev/dm-8
brw-rw---- 1 root disk 252, 9 Apr 1 23:05 /dev/dm-9

But the problem is, those device files are owned by root. Since Oracle ASM is going to run as the user oracle I will need to add a UDEV rule to change the ownership and group of these block device files:

[root@dbserver01 ~]# vi /etc/udev/rules.d/12-oracle-asmdevices.rules

This new file will contain the following rule, based on the names I specified as aliases earlier:

ENV{DM_NAME}=="ora*", OWNER:="oracle", GROUP:="dba", MODE:="660"

Of course, it would be much safer to name each individual device in the above file rather than use a wildcard. But I’m being lazy today. Now, if I restart multipathing, I will see the relevant devices owned by oracle:

[root@dbserver01 ~]# service multipathd restart
ok
Stopping multipathd daemon: [ OK ]
Starting multipathd daemon: [ OK ]
[root@dbserver01 ~]# ls -l /dev/dm*
brw-rw---- 1 root disk 252, 0 Apr 1 23:15 /dev/dm-0
brw-rw---- 1 root disk 252, 1 Apr 1 23:15 /dev/dm-1
brw-rw---- 1 oracle dba 252, 10 Apr 1 23:15 /dev/dm-10
brw-rw---- 1 oracle dba 252, 11 Apr 1 23:15 /dev/dm-11
brw-rw---- 1 oracle dba 252, 12 Apr 1 23:15 /dev/dm-12
brw-rw---- 1 oracle dba 252, 13 Apr 1 23:15 /dev/dm-13
brw-rw---- 1 root disk 252, 2 Apr 1 23:15 /dev/dm-2
brw-rw---- 1 oracle dba 252, 3 Apr 1 23:15 /dev/dm-3
brw-rw---- 1 root disk 252, 4 Apr 1 23:15 /dev/dm-4
brw-rw---- 1 oracle dba 252, 5 Apr 1 23:15 /dev/dm-5
brw-rw---- 1 oracle dba 252, 6 Apr 1 23:15 /dev/dm-6
brw-rw---- 1 oracle dba 252, 7 Apr 1 23:15 /dev/dm-7
brw-rw---- 1 oracle dba 252, 8 Apr 1 23:15 /dev/dm-8
brw-rw---- 1 oracle dba 252, 9 Apr 1 23:15 /dev/dm-9
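For anyone less lazy than me, the safer variant of that udev file, with one rule per named device instead of the ora* wildcard, could be generated rather than typed. This is a sketch only: the function name gen_udev_rules is invented here, and it reuses the owner, group and mode from the wildcard rule.

```shell
# Hypothetical helper: print one udev rule per multipath alias, using
# the same owner, group and mode as the wildcard rule.
# Usage: gen_udev_rules alias1 alias2 ...
gen_udev_rules() {
    local name
    for name in "$@"; do
        printf 'ENV{DM_NAME}=="%s", OWNER:="oracle", GROUP:="dba", MODE:="660"\n' "$name"
    done
}
```

In bash, something like gen_udev_rules oradata0{1..8} orareco0{1,2} would print the ten rules, which could then replace the single wildcard line in 12-oracle-asmdevices.rules.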

Now it’s finally time to run the Oracle Installer.

Installing Oracle Grid Infrastructure 11.2.0.4

Now that everything is in place, I’ll run the OUI and kick off the installation of Grid Infrastructure. I’m not going to show every screen here but if you want to see literally everything, you can click this link:

Screenshots of Oracle Grid Infrastructure 11.2.0.4 Installation

If you did decide to look at that last link you can skip the next few steps and move straight to the heading marked “Post-Installation”.

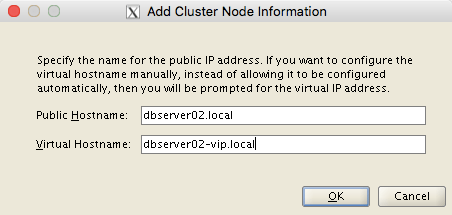

At the Specify Cluster Configuration screen, the SCAN Name needs to be specified as dbscan.local and the second node needs to be added using the Add button:

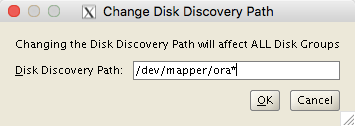

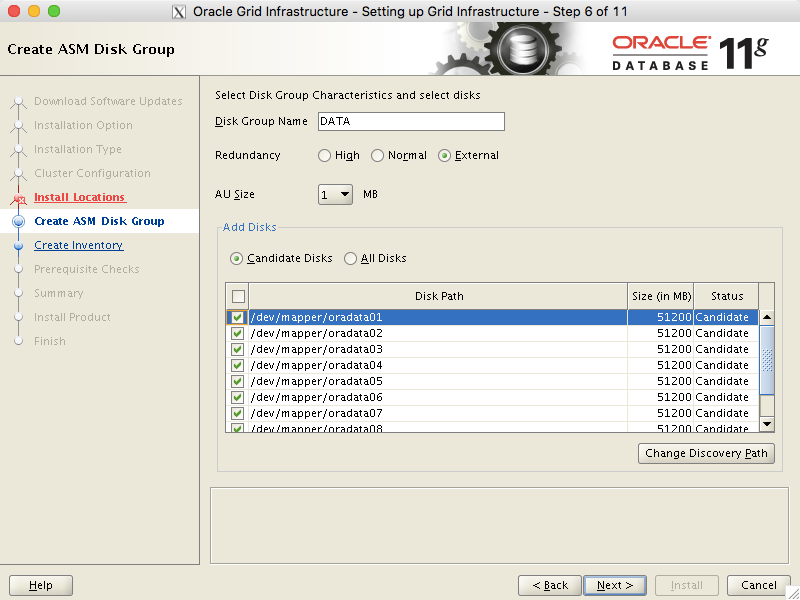

At the Create ASM Disk Group screen, the Change Discovery Path button must be used to change the ASM_DISKSTRING parameter to find my devices located at /dev/mapper/ora*:

This then allows an ASM diskgroup called +DATA to be created on the eight devices I named oradata*:

Once I’ve been through the configuration process and kicked off the installation, I’ll be prompted to run various scripts as root. Again I’ve captured the output from this process on the other page if you need it. Finally, the install completes and we have a working cluster in place as well as a +DATA diskgroup.

Post-Installation

By setting the environment correctly and then running the ASMCMD utility we can see the details of the ASM diskgroup and its underlying disks:

[oracle@dbserver01 ~]$ . oraenv
ORACLE_SID = [oracle] ? +ASM1
The Oracle base has been set to /u01/app/oracle
[oracle@dbserver01 ~]$ asmcmd
ASMCMD> lsdg
State    Type    Rebal  Sector  Block       AU  Total_MB  Free_MB  Req_mir_free_MB  Usable_file_MB  Offline_disks  Voting_files  Name
MOUNTED  EXTERN  N         512   4096  1048576    409600   409190                0          409190              0             Y  DATA/
ASMCMD> lsdsk -p
Group_Num  Disk_Num      Incarn  Mount_Stat  Header_Stat  Mode_Stat  State   Path
        1         7  3957210117  CACHED      MEMBER       ONLINE     NORMAL  /dev/mapper/oradata01
        1         6  3957210118  CACHED      MEMBER       ONLINE     NORMAL  /dev/mapper/oradata02
        1         5  3957210119  CACHED      MEMBER       ONLINE     NORMAL  /dev/mapper/oradata03
        1         4  3957210116  CACHED      MEMBER       ONLINE     NORMAL  /dev/mapper/oradata04
        1         3  3957210120  CACHED      MEMBER       ONLINE     NORMAL  /dev/mapper/oradata05
        1         2  3957210121  CACHED      MEMBER       ONLINE     NORMAL  /dev/mapper/oradata06
        1         1  3957210122  CACHED      MEMBER       ONLINE     NORMAL  /dev/mapper/oradata07
        1         0  3957210115  CACHED      MEMBER       ONLINE     NORMAL  /dev/mapper/oradata08

Now that the installation is complete there are a couple of tasks to take care of before attempting the installation of the database.

Configuring ASM Prior To Installing Oracle RAC

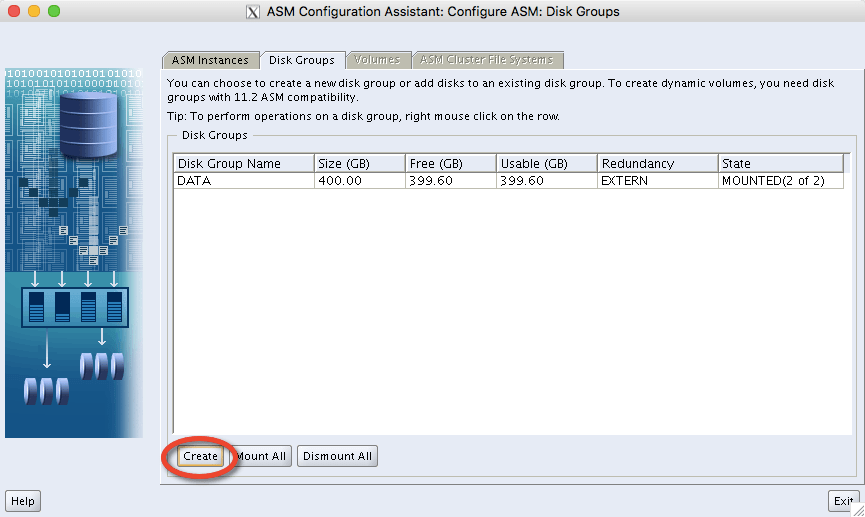

So far we’ve installed Grid Infrastructure, which has created an ASM instance and a +DATA diskgroup. But before we install the database, I also want another ASM diskgroup called +RECO in which I can place the database’s Fast Recovery Area (FRA). So let’s create that now. There are a lot of different ways to do this – for example, we could use SQL*Plus or the ASMCMD utility. But the easiest and dullest way is simply to run the ASM Configuration Assistant (ASMCA) after first ensuring that the environment is correctly configured for ASM1:

[oracle@dbserver01 ~]$ . oraenv
ORACLE_SID = [+ASM1] ? ASM1
The Oracle base remains unchanged with value /u01/app/oracle
[oracle@dbserver01 ~]$ asmca

When the ASMCA window appears we can click on the Create button:

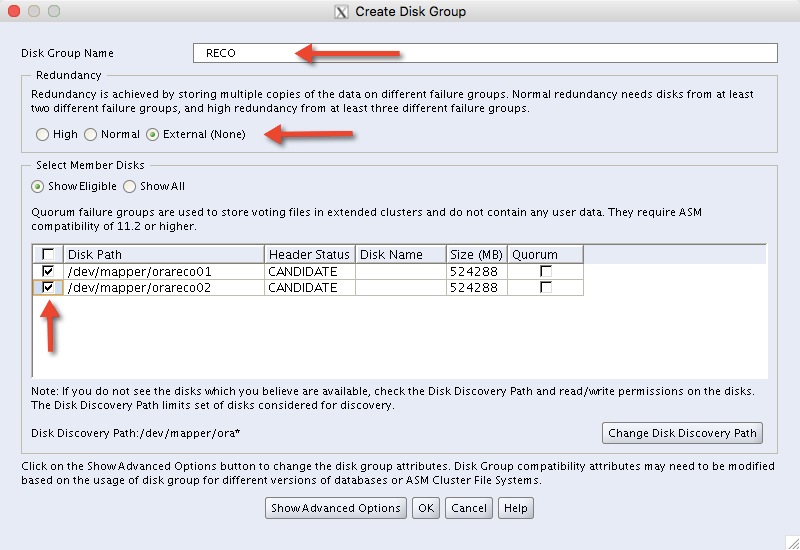

This brings up a new window where we specify the diskgroup name RECO and set it to use EXTERNAL redundancy. We also select the two remaining ASM disks and then click on OK:

Once the message Disk Group RECO created successfully is seen we can quit out of ASMCA again. The new +RECO diskgroup shows up along with +DATA in ASMCMD:

[oracle@dbserver01 ~]$ asmcmd
ASMCMD> lsdg
State    Type    Rebal  Sector  Block       AU  Total_MB  Free_MB  Req_mir_free_MB  Usable_file_MB  Offline_disks  Voting_files  Name
MOUNTED  EXTERN  N         512   4096  1048576    409600   409190                0          409190              0             Y  DATA/
MOUNTED  EXTERN  N         512   4096  1048576   1048576  1048471                0         1048471              0             N  RECO/
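A quick way to confirm that every LUN made it into its diskgroup is to compare Total_MB with what the LUN sizes should add up to. With external redundancy there is no mirroring overhead, so the total is just the LUN count times the LUN size. A sketch of that arithmetic, with a hypothetical function name:

```shell
# Hypothetical helper: expected Total_MB for an external-redundancy
# diskgroup built from COUNT LUNs of SIZE_GB each (1 GB = 1024 MB).
# Usage: expected_total_mb COUNT SIZE_GB
expected_total_mb() {
    local count=$1 size_gb=$2
    echo $(( count * size_gb * 1024 ))
}
```

Eight 50GB LUNs give 409600 MB and two 512GB LUNs give 1048576 MB, which matches the Total_MB column for DATA and RECO above.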

Just as a clarification point, when we do the initial installation of Oracle RAC, we don’t get the opportunity to configure the FRA – but I like to have it there for subsequent use.

Installing Oracle Real Application Clusters 11.2.0.4

Now that everything else is in place we can go ahead with the actual database installation. As with the Grid Infrastructure installation, I’m not going to show all 26 screenshots here, but if you want to see screenshots of the entire installation you can follow this link:

Screenshots of Oracle RAC 11.2.0.4 Installation

The only bit worth mentioning here is to obviously choose the +DATA diskgroup as the location of the database.

At the end of the process we have a fully functional two-node RAC installation residing on our oradata LUNs.

Post Installation Stuff

Because I don’t want to mess around running oraenv every time I log in, I’ll add my newly-created Oracle environment to the end of the .bash_profile file in the oracle user’s home directory on each node. Here is what I’m placing at the end of those files:

[oracle@dbserver01 ~]$ tail -5 .bash_profile
export ORACLE_SID=orcl1
export ORACLE_BASE=/u01/app/oracle
export ORACLE_HOME=${ORACLE_BASE}/product/11.2.0/dbhome_1
export PATH=$PATH:${ORACLE_HOME}/bin
export LD_LIBRARY_PATH=${ORACLE_HOME}/lib

I also, because I’m a creature of habit, like to modify the glogin.sql files in my Oracle homes to set the linesize and pagesize settings to something more meaningful than the defaults…. but I’m not going to share that here 🙂

Performance Testing with Oracle SLOB

That’s the end of this installation cookbook. But it’s not the end of this journey, because now that I’ve built this system I’m going to put it through its paces with some synthetic workloads. And the best tool for the job is Oracle SLOB. For more information on that, I encourage you to go and read about it here.