Guest Post

Nate Fuzi is my friend and collegue, based out in the US fulfilling the same role that I perform in EMEA. He is also the person with which I have drunk more sake jello shots than I ever thought probable / sensible / acceptable. Nate recently wrote this note regarding the use of Oracle’s Automatic Degree of Parallelism with Violin Memory flash storage – and I liked it so much I asked him if I could re-blog it for the Internet community. I suspect I will have to offer him some sort of sake jello shot-based payment, but I am prepared to suffer this so that you, the reader, do not have to. My pain is your gain. Over to you Nate…

Who among us is not a fan of Auto DOP (Automatic Degree of Parallelism) in Oracle 11gR2? This easy button was supposed to take all the stress out of handling parallelism inside the database: no more setting non-default degrees on tables, no need to put parallel hints in SQL, etc. According to so many blogs, all you had to do was set PARALLEL_DEGREE_POLICY to AUTO, and the parallelism fairy sprinkled her dust in just the right places to make your multi-threaded dreams come true.

Who among us is not a fan of Auto DOP (Automatic Degree of Parallelism) in Oracle 11gR2? This easy button was supposed to take all the stress out of handling parallelism inside the database: no more setting non-default degrees on tables, no need to put parallel hints in SQL, etc. According to so many blogs, all you had to do was set PARALLEL_DEGREE_POLICY to AUTO, and the parallelism fairy sprinkled her dust in just the right places to make your multi-threaded dreams come true.

[If you don’t care to read ALL about my pain and suffering, skip down to #MEAT]

But she vexed me time and again—in multiple POCs where high core count systems failed to launch more than a couple oracle processes at a time and where I finally resorted back to the old ways to achieve what I knew the Violin array was capable of delivering in terms of IOPS/bandwidth, but more importantly application elapsed times. And so it was with this customer, a POC where we had to deliver a new platform for their QA system comprised of a large 80-core x86 server and a single 3000 series Violin Memory flash memory array. Full table scans (single-threaded) could drive the array over 1300 MB/s, so the basic plumbing seemed to be in order. Yet having loaded a copy of their production database onto the array and run standard application reports against it, it actually yielded worse results than the production system, an older Solaris box with fewer processors, less RAM (and less SGA/PGA to Oracle), and a spinning-disk based SAN behind it. What the…? In the mix also were lots of small and not-so-small differences: parameter values derived from such physical differences as the core count, the processes setting, the size of the PGA, etc.; the fact that they wanted to test 11.2.0.3 as part of the overall testing (production was still on 11.2.0.1); “system stats” being a term their DBAs had never heard; all kinds of differences in object stats; the list went on. So the new system isn’t keeping up with prod, let alone beating it, you say? Where do I start?

I arrived on site Monday morning with 2 days scheduled to determine the problem and get this engine cranking the kind of horsepower we knew it could. The owner of the POC made it clear his concern was getting maximum performance out of the rig without touching the application code. Standard reports were showing spotty improvements: some were down to 5 minutes from 17 minutes, others were unchanged at 12 minutes, and still others had worsened from 18 minutes to 22 minutes. A batch job that ran 2 hours in production twice a day (more times would be lovely, of course) had run out of TEMP space after 5 hours on the test system. Clearly something was afoot. He had already tried everything I could suggest over email: turning OPTIMIZER_FEATURES_ENABLE back to 11.2.0.1, gathering object stats, gathering workload system stats, trying auto DOP, enabling and then disabling hyper-threading, and more. The only consistent result was an increase in his frustration level.

I asked how he wanted to run this rig, assuming there were no inhibitors. He wanted most of the memory allocated to Oracle, taking advantage of 11.2.0.3’s features and fixes. OK, then let’s set those and start diagnosing from there; no sense fixing a system running in a config you don’t want—especially since efforts to make the test system look and act like prod only with faster storage had all failed. A quick run through of the report test battery showed results similar to what they’d seen before. We broke it down to the smallest granule we could: run a single report, see the SQL it generates on the test system, compare its explain plan there to what production would do with it. This being my first run-in with MicroStrategy reports, I had the fun of discovering every report run generates a “temporary” table with a unique name, inserts its result set into that table, and then returns that result set to the report server. Good luck trending performance of a single SQL ID while making your tuning changes. Well, let’s just compare the SELECT parts for each report’s CTAS and subsequent INSERTs then. What we saw was that the test system was consistently doing more work—a lot more work—to satisfy the same query. More buffer gets, more looping, more complicated plans. Worse, watching it run from the top utility, one oracle process had a single processor at about a trot and was driving unimpressive IO. Here we have this fleet of Porsches to throw at the problem, and we’re leaving all but one parked—and that one we’re driving like we feel guilty for not buying a Prius.

I asked how he wanted to run this rig, assuming there were no inhibitors. He wanted most of the memory allocated to Oracle, taking advantage of 11.2.0.3’s features and fixes. OK, then let’s set those and start diagnosing from there; no sense fixing a system running in a config you don’t want—especially since efforts to make the test system look and act like prod only with faster storage had all failed. A quick run through of the report test battery showed results similar to what they’d seen before. We broke it down to the smallest granule we could: run a single report, see the SQL it generates on the test system, compare its explain plan there to what production would do with it. This being my first run-in with MicroStrategy reports, I had the fun of discovering every report run generates a “temporary” table with a unique name, inserts its result set into that table, and then returns that result set to the report server. Good luck trending performance of a single SQL ID while making your tuning changes. Well, let’s just compare the SELECT parts for each report’s CTAS and subsequent INSERTs then. What we saw was that the test system was consistently doing more work—a lot more work—to satisfy the same query. More buffer gets, more looping, more complicated plans. Worse, watching it run from the top utility, one oracle process had a single processor at about a trot and was driving unimpressive IO. Here we have this fleet of Porsches to throw at the problem, and we’re leaving all but one parked—and that one we’re driving like we feel guilty for not buying a Prius.

New object stats, workload system stats, optimizer features, hyper-threading—all make negligible difference. Histogram bucket counts and values are too close to be a factor. Let’s try hinting some parallelism. BANG. 2 minutes goes down to 15 seconds. Awesome. But we can’t make changes to the code, and certainly not when the SQL is generated by the report server each time. This also means no SQL profiles or baselines. And setting degrees on their tables might open floodgates I don’t want to open. Plus I have DOP set to AUTO. Why isn’t that thing doing anything when it clearly helps the SQL to run in parallel?! Enter the Google.

#MEAT

Ah, the rave reviews of auto DOP. Oh, the ease with which it operates. My, the results you’ll get. But we aren’t getting it. Auto DOP isn’t doing anything for us. How can we make it see parallel execution as a more viable option? Turns out there are several knobs for turning “auto” parallelism up or down, and I was unaware of the combination all them.

Sure there’s your PARALLEL_DEGREE_LIMIT, possibly affected by your core count and PARALLEL_THREADS_PER_CPU, and of course your options for PARALLEL_DEGREE_POLICY, plus PARALLEL_MAX_SERVERS which is derived from some unspecified mix of CPU_COUNT, PARALLEL_THREADS_PER_CPU and the PGA_AGGREGATE_TARGET. But have you run DBMS_RESOURCE_MANAGER.CALIBRATE_IO? Have a look over Automatic Degree of Parallelism in 11.2.0.2 [ID 1269321.1] to see if you might be limiting yourself parallel-wise because the database has no idea what your IO subsystem is capable of. Note that they do not mention workload system stats in this context. I couldn’t find verification that these play no part in DOP, but this note seems to suggest the values in DBA_RSRC_IO_CALIBRATE play a much more significant role now. Following a link about a bug (10180307) in 11.2.0.2 and below, older versions of the CALIBRATE_IO procedure could produce unpredictable results. But more important was the comment that “The per process maximum throughput (MAX_PMBPS) value [might be] too large, resulting in a low DOP while running AutoDOP.” We confirmed this by allowing CALIBRATE_IO to run for about 10 minutes and checking the results. What we saw was 31K IOPS, 328 MB/s total, 334 MB/s per process, and a latency of 0. Interesting numbers, but the explain plan still said the computed DOP was 1. So what does Oracle suggest you do if you don’t like the parallelism? Cheat and set the values manually. According to the same note, start with:

Sure there’s your PARALLEL_DEGREE_LIMIT, possibly affected by your core count and PARALLEL_THREADS_PER_CPU, and of course your options for PARALLEL_DEGREE_POLICY, plus PARALLEL_MAX_SERVERS which is derived from some unspecified mix of CPU_COUNT, PARALLEL_THREADS_PER_CPU and the PGA_AGGREGATE_TARGET. But have you run DBMS_RESOURCE_MANAGER.CALIBRATE_IO? Have a look over Automatic Degree of Parallelism in 11.2.0.2 [ID 1269321.1] to see if you might be limiting yourself parallel-wise because the database has no idea what your IO subsystem is capable of. Note that they do not mention workload system stats in this context. I couldn’t find verification that these play no part in DOP, but this note seems to suggest the values in DBA_RSRC_IO_CALIBRATE play a much more significant role now. Following a link about a bug (10180307) in 11.2.0.2 and below, older versions of the CALIBRATE_IO procedure could produce unpredictable results. But more important was the comment that “The per process maximum throughput (MAX_PMBPS) value [might be] too large, resulting in a low DOP while running AutoDOP.” We confirmed this by allowing CALIBRATE_IO to run for about 10 minutes and checking the results. What we saw was 31K IOPS, 328 MB/s total, 334 MB/s per process, and a latency of 0. Interesting numbers, but the explain plan still said the computed DOP was 1. So what does Oracle suggest you do if you don’t like the parallelism? Cheat and set the values manually. According to the same note, start with:

delete from resource_io_calibrate$;

insert into resource_io_calibrate$ values(current_timestamp, current_timestamp, 0, 0, 200, 0, 0);

commit;

This tells the database a single process can drive at most 200 MB/s from the storage system. If you want more parallelism, tell Oracle each process drives less IO, and the database suggests creating more processes to go after that data. I had already seen a single process driving the array to maximum bandwidth, so ~330 MB/s seemed low, but still it was hindering our parallelism efforts. Even the 200 MB/s setting drove no parallelism in our test. We dropped the value to 50 MB/s and finally parallelism picked itself up off the floor, suggesting a degree of 8. We cranked that puppy down to 5 MB/s and suddenly Oracle wanted to throw all 80 cores at our little query. Booya. We fell back to 50 MB/s, and ran the tests again. We hit a record time of 49s on a report that took 21 minutes in prod. 28 minutes went to 59s for another report. 22 minutes went to 44s. Not everything was <1m. Some only went from 17m to 5m, but this was still enough to make the testers ask if something was wrong. And the 2 hour report that wasn’t finishing in 5 hours completed in 28 minutes.

Maybe you already knew all this, and you’ve loved auto DOP long time. But if you didn’t, I thought I would share my experience in hopes you won’t lose as much time or hair on it. I find potential customers frequently ask whether tuning is required with a flash memory solution, and my honest answer is “sometimes”. This was one of those sometimes.

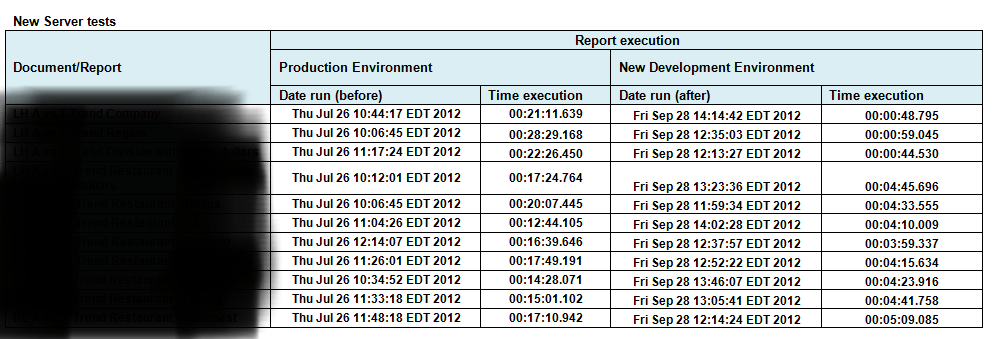

Addendum: Here are the final results from the tuning by Nate – with the report names removed to protect confidentiality:

this is gold

why buy an violin 3000 array that is SAS directly attached http://www.violin-memory.com/wp-content/uploads/Violin-Datasheet-3000.pdf?d=1 , when you can buy a 3.2TB of ocz z-drive r4 (http://www.amazon.com/OCZ-Z-Drive-Series-Maximum-ZD4CM84-HH-300G/dp/B005HU0KCG/ref=sr_1_1?ie=UTF8&qid=1355069406&sr=8-1&keywords=ocz+z-drive+r4 ) ?

It is also pci attached ( a single point of failure ).

I really don’t see why people do not use 1, or 2 or 4 of these pcie cards in order to speed up their database.

It’s cheaper and the performance is greater ( the card is on pcie ) .

There are a lot of alternatives … the ssd on pcie is a huge market. It evolves faster than everything else .

And the price is 3x-20x smaller ( comparative with dedicated storages on flash ) .

Hi Alex

The question is: why buy a flash memory array when you can buy PCIe flash cards. There are a few answers but the main ones come down to performance and availability.

First of all, any PCIe card supplying persistent storage is a potential issue to availability. If you have a PCIe flash card and it fails (as many of them do) you are almost always going to have to take the server down to replace it. That’s not enterprise class storage. This is why companies like Fusion IO make good business out of putting temporary tablespaces and database smart flash caches on their cards, because those are the parts of a database you can “afford” to lose. Networked flash arrays such as those from Violin Memory bring the high availability characteristics of enterprise storage, by allowing multiple connectivity options such as fibre-channel, Infiniband and iSCSI. This means your data is available over multiple paths to (potentially) multiple target servers. We also supply PCIe connectivity options, which I think is what you were getting at… but even then, HA is an option. For example you can connect a Violin array to two different servers using PCIe and then run Oracle ASM and RAC to provide high availability.

Then there is the performance. Sure PCIe has the lowest latency, but once you start ramping up the I/O that becomes less obvious. Flash cannot be written to until it has been erased first – this means that erase operations are happening in the background all the time to try and ensure blocks are ready for writes; we call this Garbage Collection. In a flash array, all of the internal flash cards can be managed holistically, i.e. if one flash die is unavailable due to garbage collection another one can be used. That is not the case with the PCIe cards, which are managed as completely separate units. The result, which has been proven by many independent tests, is that the PCIe card hits the “write cliff”, where reads and writes have to be queued behind erases, taking performance back up to the level of disk.

There’s more… Flash arrays have the opportunity to perform “wear levelling”, where flash is used evenly to maximise the lifetime of the product; PCIe cards cannot do this. Flash arrays can have multiple, redundant and resilient components. Flash arrays can have enterprise-level management software. In the case of Violin, the flash arrays can even run software natively, such as the Symantec Foundation Suite tools we announced a few months ago. PCIe cards have no ability to do any of this.

Don’t get me wrong: PCIe cards have their place. They are a very low latency way of storing data that you don’t need to safeguard: caches and temp data being ideal examples. But you would be insane to put your entire tier one database on PCIe cards when you can have pretty much the same latency using a flash array with Infiniband connectivity.

As proof of this argument I offer you the lesson from history that is the Storage Area Network. Disks used to be local, they used to be direct-attached… but with the advent of SANs came the benefits of availability, maintainability, manageability, consolidation and to some extent performance increases (through wide striping etc). All for the trade off of a slight increase in latency. There is nothing different in the flash story – and this is why Fusion have their ION array product, why IBM bought TMS, why EMC bough Xtreme-IO and why Violin Memory is growing exponentially. The flash array market is only going in one direction.

分析的很透彻,很欣赏你的看法,学习了。

读万卷书不如行万里路

I recently came across this blog post by the very brilliant Gwen Shapira so thought I’d link to it here:

http://www.pythian.com/blog/secrets-of-oracles-automatic-degree-of-parallelism/

thanks for this blog gave me some kl ideas for min