Analytics. Apparently it’s “the discovery and communication of meaningful patterns in data”. Allegedly it’s the “Next Holy Grail”. By definition it’s “the science of logical analysis”. But what is it really?

We know that it is considered a type of Business Intelligence. We know that when applied to massive volumes of information it is often described as a Big Data problem. And we know that companies and organisations are using it for tasks like credit risk analysis, fraud detection and complex event processing.

Above all else we know that Oracle, IBM, SAP and others are all banging on about analytics like it’s the most important thing in the world. Which must mean it is. Maybe. But what did you say it was again?

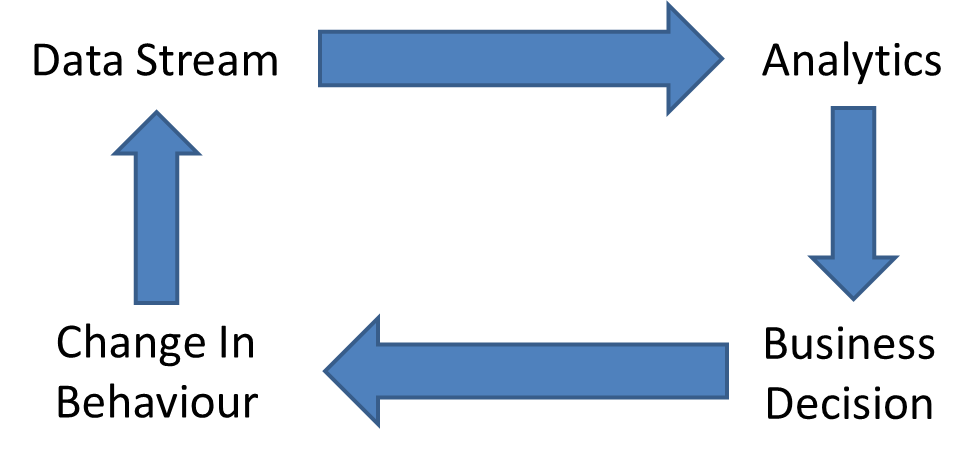

Here’s my view: analytics is the section of a feedback loop in which data is analysed and condensed. If you are using analytics the chances are you have some sort of data stream which you want to process. Analytics is a way of processing that (often large) data in order to produce (smaller) summary information which can then be used to make business decisions. The consequence of these business decisions is likely to affect the source data stream, at which point the whole loop begins again.
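To make the loop concrete, here is a minimal sketch in Python. Everything in it is hypothetical and exists only for illustration: the function names (`analyse`, `decide`, `feedback_loop`), the toy data source and the "promote the best seller" decision. The point is simply the shape: a large stream is condensed into a small summary, the summary drives a decision, and the decision influences the next batch of source data.

```python
from collections import Counter
from typing import Callable, Iterable, List, Optional


def analyse(events: Iterable[str]) -> Counter:
    """Condense a (possibly large) stream of events into a small summary."""
    return Counter(events)


def decide(summary: Counter) -> Optional[str]:
    """Turn the summary into a business decision: here, which product to promote."""
    if not summary:
        return None
    return summary.most_common(1)[0][0]


def feedback_loop(
    source: Callable[[Optional[str]], Iterable[str]], rounds: int
) -> List[Optional[str]]:
    """Run the loop: data -> summary -> decision -> (changed) data -> ..."""
    decision: Optional[str] = None
    decisions: List[Optional[str]] = []
    for _ in range(rounds):
        events = source(decision)  # the previous decision shapes the new data
        summary = analyse(events)
        decision = decide(summary)
        decisions.append(decision)
    return decisions


def toy_source(promoted: Optional[str]) -> List[str]:
    """Hypothetical data stream: promoting a product produces extra sales of it."""
    base = ["tea", "coffee", "coffee", "juice"]
    return base + ([promoted] * 2 if promoted else [])


if __name__ == "__main__":
    print(feedback_loop(toy_source, rounds=3))
```

Note that `analyse` on its own is just a summary. It only becomes analytics in the sense described here once `decide` acts on it and the result feeds back into the source.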

Something else that is often attributed to analytics is data visualisation, i.e. the representation of data in some visual form that offers a previously unattainable level of clarity and understanding. But don’t be confused – taking your ugly data and presenting it in a pretty picture or as some sort of dashboard isn’t analytics (no matter how real-time it is). You have to be using that output for some purpose – the feedback loop. The output from analytics allows you to change your behaviour in order to do … something. Maximise your revenue, increase your exposure to customers, identify opportunities or risks, anything…

Two Types of Analytics… and Now a Third

Until recently you could consider two realms of analytics, distinguished by the infrastructure on which they could run:

Real-Time Analytics

The processing of data in real-time requires immense speed, particularly if the data volume is large. A related but important factor is the use of filtering. If you are attempting to glean new and useful information from massive amounts of raw data (let’s say for example the data produced by the Large Hadron Collider) you need to filter at every level in order to have a chance of being able to handle the dataset – there simply isn’t enough storage available to keep a historical account of what has happened.

And that’s the key thing to understand about real-time analytics: there is no history. You cannot afford to keep historical data going back months, days or even minutes. Real-time means processing what is happening now – and if you cannot get the answer instantly you are too late, because the opportunity to benefit from a change in your behaviour has gone.
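As a rough illustration of "filter at every level, keep no history", the sketch below processes a stream one event at a time and retains only a handful of running totals. The names (`RunningSummary`, `keep_interesting`, `summarise_stream`) and the simple threshold filter are assumptions made for this example; a real pipeline would have far more elaborate filtering.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator


@dataclass
class RunningSummary:
    """Constant-size state: no individual event is ever stored."""
    count: int = 0
    total: float = 0.0
    maximum: float = float("-inf")

    def update(self, value: float) -> None:
        self.count += 1
        self.total += value
        if value > self.maximum:
            self.maximum = value

    @property
    def mean(self) -> float:
        return self.total / self.count if self.count else 0.0


def keep_interesting(events: Iterable[float], threshold: float) -> Iterator[float]:
    """Filter stage: discard anything below the threshold as soon as it arrives."""
    for value in events:
        if value >= threshold:
            yield value


def summarise_stream(events: Iterable[float], threshold: float) -> RunningSummary:
    """Consume the stream once, updating the summary and then forgetting each event."""
    summary = RunningSummary()
    for value in keep_interesting(events, threshold):
        summary.update(value)
    return summary


if __name__ == "__main__":
    readings = iter([1.0, 5.0, 9.0, 2.0, 7.0])  # stand-in for a live feed
    result = summarise_stream(readings, threshold=5.0)
    print(result.count, result.mean, result.maximum)
```

Because the input is consumed as an iterator and only three numbers are kept, memory use stays flat however long the stream runs, which is the property the "no history" constraint demands.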

So what storage media would you use for storing the data involved in real-time analytics? There is only one answer: DRAM. This is why products such as Oracle Exalytics and SAP HANA make use of large amounts of DRAM – but while this offers excellent speed it suffers from other issues such as scalability and a lack of high availability options. Nevertheless DRAM is the only way to go if you want to process your data in real time.

Batch Analytics

This is the other end of the field. In batch analytics we take (usually vast) quantities of data and load them into an analytics engine as a batch process. Once the data is in place we can run analytical processes to look for trends, anomalies and patterns. Since the data is at rest there are ample opportunities to change or tweak the analytical jobs to look for different things – after all, in a true analytical process the chances are you do not know what you are looking for until you’ve found it.

Clearly there is a historical element to this analysis now. Data may span timescales of days, months or years – and consequently the data volume is large. However, the speed of results is usually less important, with jobs taking anything from ten minutes to days.

What storage media would you use here then? Let’s be honest, the chances are you will use disk. Slow, archaic, spinning magnetic disks of rusty metal. Ugh. But I don’t blame you: SATA disks will inevitably be the most cost-efficient means of storing the data if you don’t need your results quickly.

Human-Time Analytics

So with flash memory taking the data centre by storm, what does this new storage technology allow us to do in the world of analytics that was previously impossible? The answer, in a phrase I’m using courtesy of Jonathan Goldick, Violin Memory’s CTO of Software, is human-time analytics. Let me explain by giving one of Jonathan’s examples:

Imagine that you are walking into a shopping mall in the United States. Your NFC-enabled phone is emitting information about you which is picked up by sensors in the entrance to the mall. Further in there is a set of screens performing targeted advertising – and the task of the advertiser or mall-owner is to display a targeted ad on that screen within ten seconds of finding out that you are inbound.

The analytical software has no possible way of knowing that you are about to enter that mall. As such it cannot use any sort of pre-fetching to get your details – which means those ten seconds are going to have to suffice. How can your details be fetched and parsed, and a decision made, within just ten seconds?

DRAM – From a technical perspective, one solution is to have your details located in DRAM somewhere. But with over 300 million people living in the US that is going to require an enormous and financially-impractical amount of DRAM. It just isn’t feasible.

DISK – A much less expensive option is clearly going to be the use of disk storage. However, even with the best high-performance disk array (with the highest cost) the target of finding and acting upon that data within ten seconds is just not going to happen.

FLASH – Here we have the perfect answer. Extremely fast, with sub-millisecond response times, flash memory allows for data to be retrieved orders of magnitude faster than disk and yet at a cost far lower than DRAM (in fact the cost is now approaching that of disk).
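The trade-off between the three options can be roughed out with some back-of-envelope arithmetic. In the sketch below, only the 300 million population figure and the ten-second window come from the example above. The profile size and the per-read latencies are illustrative assumptions rather than measurements, so substitute your own numbers.

```python
US_POPULATION = 300_000_000      # from the example: "over 300 million people"
ASSUMED_PROFILE_BYTES = 10_000   # hypothetical size of one person's profile
BUDGET_US = 10 * 1_000_000       # the ten-second window, in microseconds

# Illustrative per-read latencies in microseconds (assumptions, not benchmarks).
ASSUMED_LATENCY_US = {"disk": 5_000, "flash": 500}


def dataset_terabytes(people: int, bytes_per_profile: int) -> float:
    """Total capacity needed to hold every profile, in decimal terabytes."""
    return people * bytes_per_profile / 10**12


def serial_reads_in_budget(budget_us: int, latency_us: int) -> int:
    """How many back-to-back random reads fit inside the time budget."""
    return budget_us // latency_us


if __name__ == "__main__":
    size_tb = dataset_terabytes(US_POPULATION, ASSUMED_PROFILE_BYTES)
    print(f"Capacity needed for all profiles: {size_tb} TB")
    for medium, latency_us in ASSUMED_LATENCY_US.items():
        reads = serial_reads_in_budget(BUDGET_US, latency_us)
        print(f"{medium}: about {reads} serial reads in the window")
```

The first number is the one that counts against DRAM, since the whole dataset has to be resident. The second is the one that counts against disk, because the window has to cover every read needed to fetch and parse the details, plus the decision itself.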

Flash is a new way of thinking – and it allows for new opportunities which were previously unattainable. It’s always tempting to think about how much we could improve existing solutions with flash, but the real magic lies in thinking about the new heights we can scale which we couldn’t reach before…