I recently posted a test harness for generating physical I/O using the new version of SLOB (the Silly Little Oracle Benchmark) known as SLOBv2. This test harness can be used for driving varying workloads and then processing the results for use in … well, wherever really. Some friends of mine have become very adept with R recently, but I have yet to board that train, so I’m still plugging my data into Excel. Here’s an example.

We know that Oracle allows varying database block sizes with the parameter DB_BLOCK_SIZE, which typically has values of 4k, 8k (the default), 16k or 32k. Do you ever change this value? In my experience the vast majority of customers use 8k and a small number of data warehouse users choose 32k. I almost never see 16k and absolutely never see 4k or lower. Yet the choice of value can have a big effect on performance…

Simple Test with 8k Block Size

In the storage world we like to talk about IOPS and throughput as well as latency (see this article for a description of these terms). IOPS and throughput are related: multiply the number of IOPS by the block size and you get the throughput as a result.
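As a minimal sketch of that relationship, the snippet below multiplies an IOPS figure by a block size to get throughput. The function name and the 10,000 IOPS input are made up for illustration, and it assumes 1 MB means 1024 × 1024 bytes.

```python
def throughput_mb_per_sec(iops: float, block_size_bytes: int) -> float:
    """Throughput in MB/sec, assuming 1 MB = 1024 * 1024 bytes."""
    return iops * block_size_bytes / (1024 * 1024)


# Hypothetical input: the same 10,000 IOPS at each common block size.
for kb in (4, 8, 16, 32):
    mb = throughput_mb_per_sec(10_000, kb * 1024)
    print(f"{kb:>2}k blocks: {mb:.1f} MB/sec")
```

The same number of IOPS moves eight times as much data at 32k as it does at 4k, which is why the two metrics can tell very different stories.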

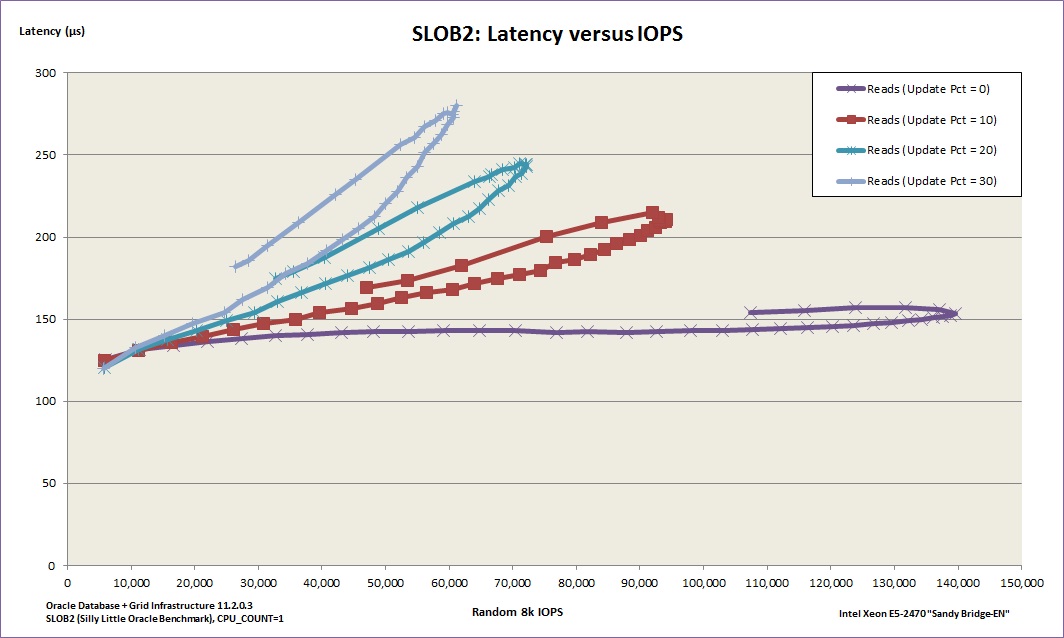

So let’s see what happens if we run SLOB PIO tests using the test harness for the default 8k block size, testing workloads with 0% update DML, 10%, 20% and 30%:

You can see four loops or “petals”, with each loop starting at the bottom left and working out towards the upper right, moving anti-clockwise before coming back down. This is expected behaviour, because each line tracks an increasing number of SLOB processes from 1 to 64. As the number of processes increases, so does the number of IOPS – and as a result there is a small increase in the latency. Somewhere near the high end of that range the server CPU becomes saturated, so the number of IOPS drops and the latency comes back down with it – the compute resource is exhausted while the storage has plenty left to spare (the server has 2 sockets each with an E5-2470 8-core processor, but crucially I am pinning* Oracle to one core with CPU_COUNT=1). To put that even more simply, there is not enough CPU available to drive the storage any harder (and the storage can go a LOT faster – after all, it’s the same storage used during this). [* This is really bad wording from me – see the comments section below]

From this graph we can deduce the optimum number of SLOB processes needed to drive the maximum possible I/O through Oracle for each workload: the point where the line bends back on itself marks this spot. We can also see, in graphical form, evidence of what we already know: read-only workloads can drive far more IOPS, at a lower latency, than mixed workloads.
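If you would rather find that bend programmatically than by eye, one simple approach is to pick the sample with the highest IOPS for a given workload. The sketch below assumes the harness output has already been parsed into (processes, IOPS, latency) tuples; the `Sample` type, the function name and all of the numbers are invented for illustration and are not taken from the real test results.

```python
from typing import NamedTuple, Sequence


class Sample(NamedTuple):
    processes: int      # number of SLOB processes in this run
    iops: float         # physical I/Os per second achieved
    latency_us: float   # average latency in microseconds


def peak_sample(samples: Sequence[Sample]) -> Sample:
    """Return the run with the highest IOPS, i.e. where the line turns back."""
    return max(samples, key=lambda s: s.iops)


# Made-up figures for one workload, for illustration only.
samples = [
    Sample(1, 9_000, 110.0),
    Sample(16, 95_000, 170.0),
    Sample(32, 140_000, 230.0),
    Sample(48, 152_000, 310.0),
    Sample(64, 147_000, 300.0),
]

best = peak_sample(samples)
print(f"Peak of {best.iops:,.0f} IOPS at {best.processes} processes")
```

Running this once per workload (0%, 10%, 20% and 30% updates) would give the process count to aim for in each case.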

Multiple Tests with Varying Block Sizes

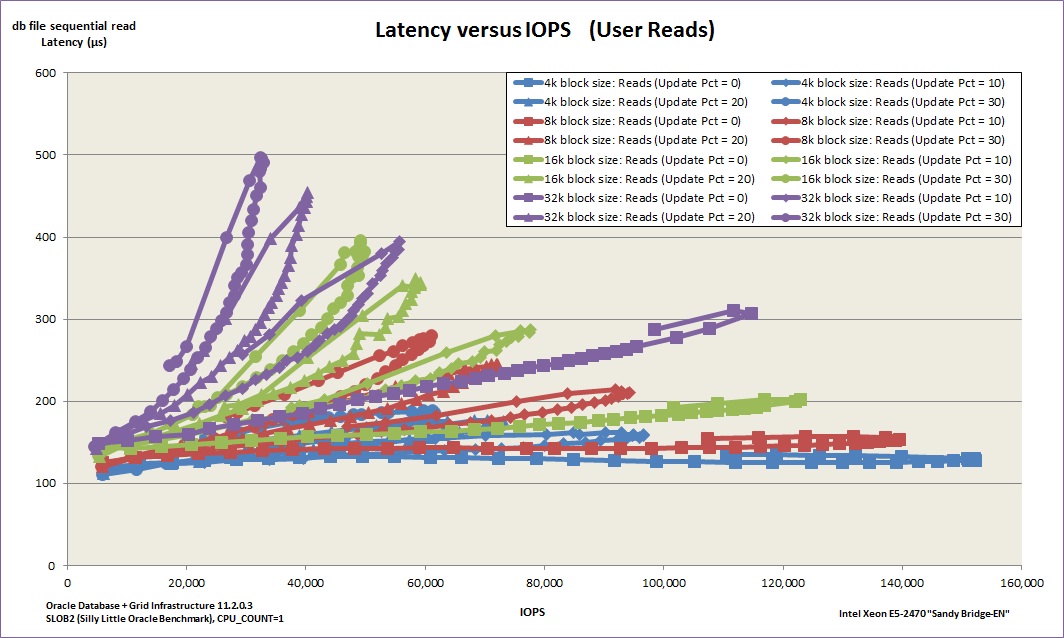

Now let’s take that relatively simple graph and cover it in cluttered “petal-shaped” lines like the ones above, but with a set for each of the following block sizes: 4k, 8k, 16k and 32k.

Ok so it’s not easy on the eyes – but look closely because there’s a story in there. In this graph, each block size has the same four-petal pattern as above, with the colour used to denote the block size. The 32k block size, for example, is in purple – and quite clearly exhibits the highest latencies at its peaks. The blue 4k block size line, on the other hand, has very low latencies and extends the furthest to the right – indicating that 4k would be the better choice if you were aiming to drive as many IOPS as possible.

So 4k has lower latency and more IOPS… must be the way to go, right?

Two Sides To Every Story

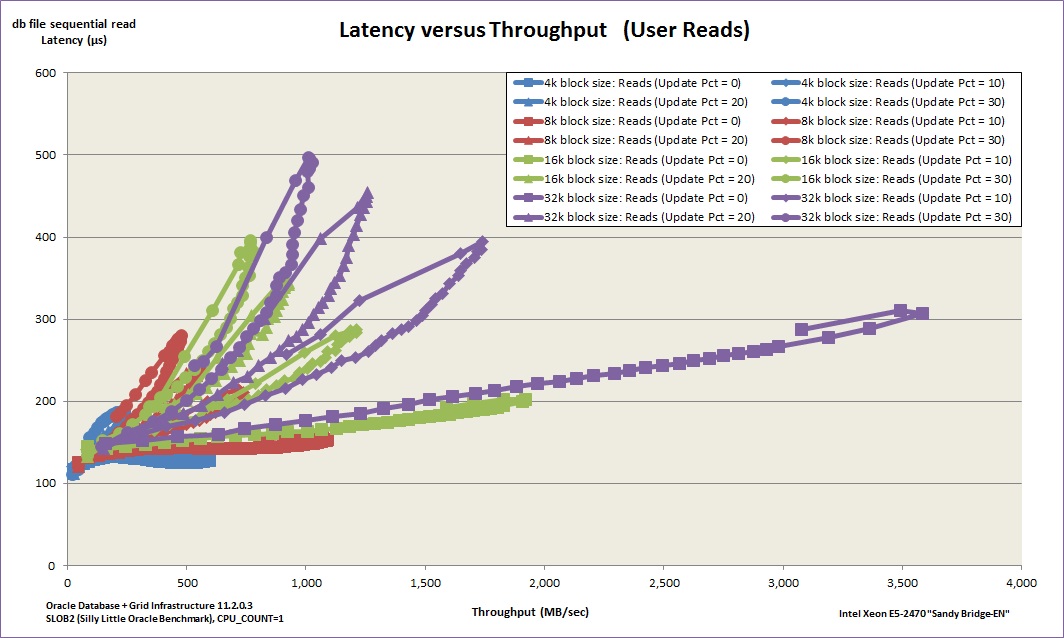

What happens if we stop thinking about IOPS and start thinking about throughput? By multiplying the IOPS by the block size we can draw up the same graph but with throughput on the horizontal axis instead:

Well now. The blue 4k line may indeed have the lowest latency figures but if throughput is important it’s nowhere on this scale. The purple 32k line, on the other hand, is able to drive over 3,500MB/sec of throughput at its peak (and still stay around the 300 microsecond latency mark). Maybe 32k is the way to go then?
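To get a feel for why 4k drops off this scale, it helps to turn the calculation around and ask how many IOPS each block size would need to reach a given throughput. This is a rough sketch only: the function name is hypothetical, the 3,500MB/sec target is simply the approximate 32k peak quoted above, and it assumes 1 MB = 1024 KB.

```python
def iops_required(throughput_mb_per_sec: float, block_size_kb: int) -> float:
    """IOPS needed to sustain a throughput, assuming 1 MB = 1024 KB."""
    return throughput_mb_per_sec * 1024 / block_size_kb


TARGET_MB_PER_SEC = 3_500  # roughly the 32k peak mentioned in the text

for kb in (4, 8, 16, 32):
    needed = iops_required(TARGET_MB_PER_SEC, kb)
    print(f"{kb:>2}k blocks: {needed:,.0f} IOPS to reach {TARGET_MB_PER_SEC:,} MB/sec")
```

Under those assumptions a 4k block size would need eight times as many IOPS as 32k to move the same amount of data.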

Conclusion

As always, the truth lies somewhere in between. In the case of SLOB the workload is extremely random, meaning that each update is probably only affecting one row per block. It therefore makes no sense to have large 32k blocks as this is just an overhead – the throughput may be high, but the majority of the data being read is waste. Your real life workload, on the other hand, is likely to be more diverse and unpredictable. SLOB is a brilliant tool for using Oracle itself to generate load, but it is not intended as a substitute for proper testing. What it is great for, though, is learning, so use the test harness (or write your own) and get testing.
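The “waste” point can be illustrated with some back-of-the-envelope arithmetic. The sketch below assumes a single row of a made-up size is the only useful data in each block read, and it ignores block header overhead entirely; the 128-byte row and the function name are assumptions for illustration, not SLOB figures.

```python
def useful_fraction(row_bytes: int, block_size_kb: int) -> float:
    """Fraction of a block read that is the one row actually wanted."""
    return row_bytes / (block_size_kb * 1024)


ROW_BYTES = 128  # hypothetical row size, not taken from SLOB

for kb in (4, 8, 16, 32):
    share = useful_fraction(ROW_BYTES, kb)
    print(f"{kb:>2}k block: {share:.2%} of the bytes read are the row itself")
```

With one wanted row per block, every doubling of the block size halves the useful share of each read, however impressive the throughput number looks.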

Also, don’t overlook the impact of DB_BLOCK_SIZE when building your databases – as you can see above it has a potentially dramatic effect on I/O.

CPU_COUNT=1 in init.ora does not “pin” anything. I must be misunderstanding that part of your post?

Oh man! Ok I hold my hands up to that one, “pin” is a bad term to use. In fact, it’s a *terrible* term to use.

I was trying to knock out a quick blog post prior to going on holiday and my haste has bitten me.

Readers, don’t listen to me, read this instead:

http://docs.oracle.com/cd/E11882_01/server.112/e25513/initparams040.htm#REFRN10023