The people deploying AI agents are not the people responsible for the systems they stress – and the governance structures that might close that gap don't yet exist.

Category: Cloud

The cloud is built on abstraction, standardisation, and scale. It works because most workloads can be made to look the same.

Databases are the exception. Their performance characteristics, failure modes, and architectural constraints are deeply tied to how they are built and deployed.

As AI increases the pressure on data systems, the tension between cloud uniformity and database specificity becomes more visible.

You Won’t See Failure First. You’ll See Cost

Agentic AI introduces a failure mode that doesn't announce itself. The first signal isn't a red dashboard or a paged engineer – it's a line in the cloud bill.

The Application Layer Used to Protect You. Now It Can’t

The application layer was never designed as a database security boundary – but it acted as one. Agentic AI removes that protection, either by bypassing the application layer or by overwhelming it, and leaves the database exposed.

Your Database Was Sized for Humans. The Bill Arrives When Agents Connect

Every enterprise database capacity model rested on assumptions about human behaviour. Agents remove those assumptions – and in the cloud, the resulting gap reappears on every monthly bill.

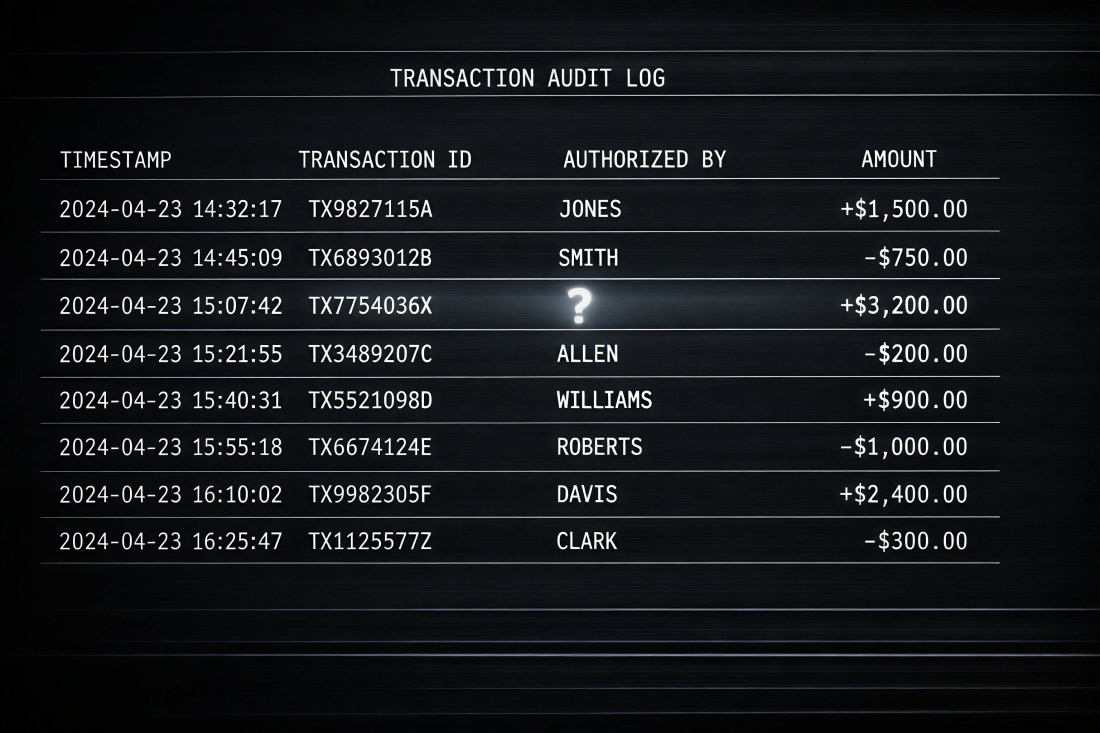

The Audit Trail Was Your Ground Truth. It Isn’t Anymore

The audit trail still runs. Every commit is recorded. But agentic AI has broken the two assumptions it was built on – and the incompleteness is invisible until you need it.

The Brake Was Human. Now It’s Gone

Classic enterprise data architecture had an implicit safeguard built into it. The human in the loop provided error absorption, audit accretion and natural rate-limiting – none of which were ever specified. Agentic AI removes the human, and with it all of those protections at once.

Your Database Doesn’t Know What an Agent Is

Enterprise databases were built around a social contract: every action has a human author. AI agents inherit that identity model without satisfying its assumptions – and the audit log can no longer say who actually made a change happen.

Transactions Assume Intent. Agents Don’t Guarantee It

ACID assumes human intent behind every commit. AI agents expose this as an architectural gap – and the enterprise transaction model has no mechanism to detect it.

AI Agents Don’t Just Add Load. They Change Its Shape

AI agents don’t just add database load – they change its shape. This article explains the three new patterns: compression, expansion and recursion.

AI Can Replace Interfaces, Not Systems of Record

AI can replace interfaces but not systems of record. The database commit is where intent becomes enterprise reality – and that boundary matters more than ever.