What Happens When Agentic AI Closes the Data Loop

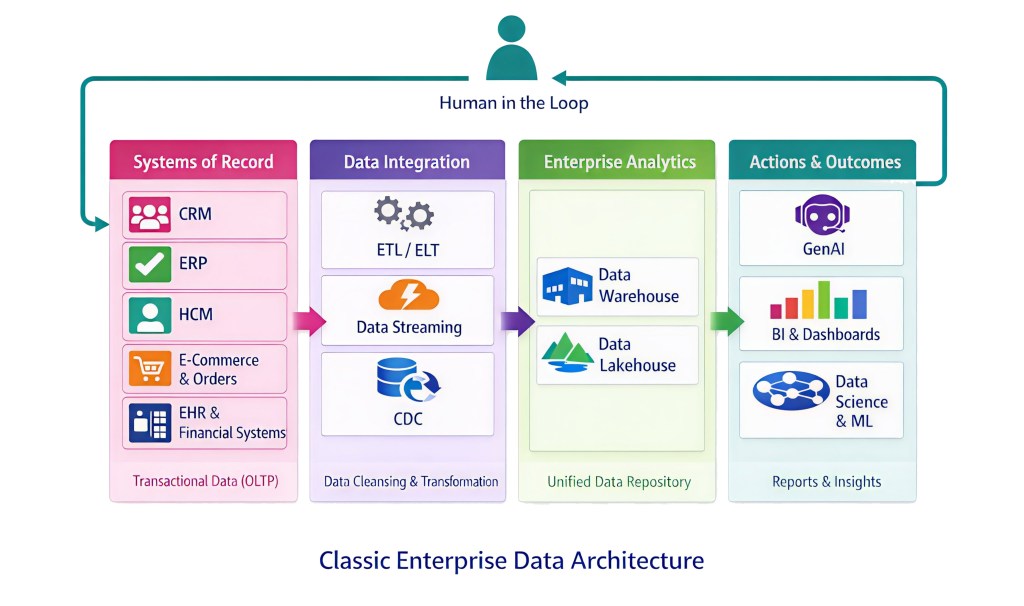

Everyone reading this has seen some version of the diagram below.

It shows up in vendor decks, architecture reviews, strategy offsites. The labels change with the decade – warehouse becomes lakehouse, ETL becomes streaming, reports become dashboards – but the shape stays the same.

Systems of record on the left. Integration in the middle. Analytics consolidating into something “unified”. Outputs on the right, consumed by humans who decide what to do next. A feedback loop, but a slow one, mediated by the figure at the top.

This is not just a common pattern. It is the pattern. Two decades of data engineering have optimised it without ever really questioning it.

And because the shape never changed, something embedded in it went largely unnoticed.

The human in that diagram was never decorative. The human was the brake.

What the Brake Was Actually Doing

The delay between data being created and action being taken was never designed. It just existed.

Data moved. It landed. It was surfaced. Someone noticed it. It was discussed, approved, acted on. In most organisations, that cycle took days or weeks. Even in the best ones, it took long enough to be annoying.

That delay looked like inefficiency.

It wasn’t.

It absorbed errors. Bad data entering the pipeline had multiple chances to be caught – reconciliation jobs, broken reports, someone noticing a number didn’t look right. Not by design. By delay.
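To put rough numbers on error absorption, treat each reconciliation job, report or human glance as an independent chance to catch a bad record. This is a deliberately simplified sketch. The uniform catch rate, the independence of checkpoints and the hypothetical `survival_probability` function are illustrative assumptions, not measurements of any real pipeline. Under those assumptions, a bad record survives `n` checkpoints with probability `(1 - catch_rate) ** n`.

```python
def survival_probability(catch_rate: float, checkpoints: int) -> float:
    """Probability that a bad record passes every checkpoint uncaught.

    Illustrative assumption: each checkpoint independently catches a bad
    record with the same probability `catch_rate`.
    """
    if not 0.0 <= catch_rate <= 1.0:
        raise ValueError("catch_rate must be between 0 and 1")
    if checkpoints < 0:
        raise ValueError("checkpoints must be non-negative")
    return (1.0 - catch_rate) ** checkpoints


# Hypothetical numbers: a modest 30% chance of catching the error per checkpoint.
for n in (0, 1, 3, 5):
    p = survival_probability(0.3, n)
    print(f"{n} checkpoints -> {p:.3f} chance a bad record gets through")
```

Even a weak 30% catch rate leaves only about a third of bad records standing after three checkpoints. With zero checkpoints, which is the closed-loop case, the probability is 1.0 however good each individual check would have been.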

It created audit points. Every handoff – source to staging, staging to warehouse, warehouse to report – was a moment where state was captured and could be inspected. Compliance frameworks were written assuming those moments existed. The audit trail wasn’t bolted on. It fell out of the flow.
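The idea that the audit trail "fell out of the flow" can be sketched as a pipeline that records a snapshot whenever data crosses a stage boundary. The stage names come from the paragraph above. The `Handoff` record and the `run_pipeline` function are hypothetical illustrations, not a real framework. The snapshots exist only because there are discrete handoffs. Collapse those hops into a single agent write-back and the trail shrinks to one entry, leaving no intermediate state to inspect.

```python
import copy
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class Handoff:
    """Hypothetical audit record captured when data moves between stages."""
    source: str
    target: str
    state: dict[str, Any]


def run_pipeline(record: dict[str, Any], stages: list[str]) -> list[Handoff]:
    """Move a record through consecutive stages, snapshotting at each handoff.

    The audit trail is not a separate feature: it is a by-product of the
    record crossing stage boundaries.
    """
    trail: list[Handoff] = []
    for source, target in zip(stages, stages[1:]):
        # Deep-copy so later changes to the caller's record cannot alter a snapshot.
        trail.append(Handoff(source, target, copy.deepcopy(record)))
    return trail


classic = run_pipeline({"order_id": 42, "amount": 99.5},
                       ["source", "staging", "warehouse", "report"])
direct = run_pipeline({"order_id": 42, "amount": 99.5},
                      ["agent", "system_of_record"])
print(len(classic), len(direct))
```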

It rate-limited the system. When a human made a decision and wrote it back to a system of record, it happened at the speed of human work. Meetings. Approvals. Change windows. That pace was slow enough to frustrate and slow enough to be safe. A bad decision did not immediately reshape the environment for the next one.

None of this appears in architecture diagrams. None of it is in SLAs or capacity models.

But it was load-bearing.

Remove the Human. Keep Everything Else.

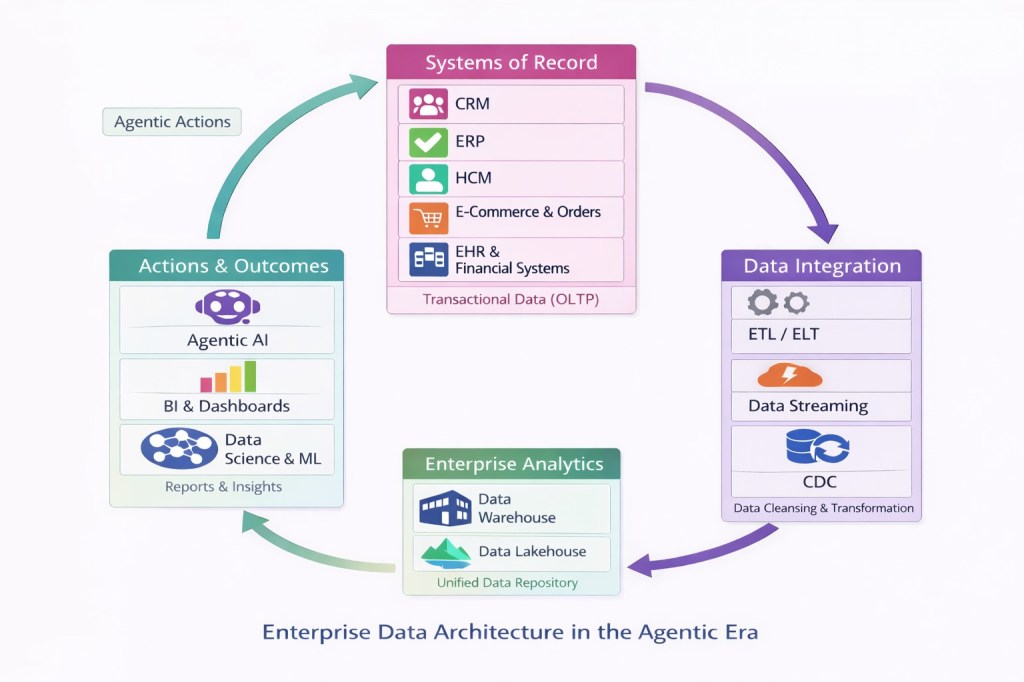

Now look at the second diagram.

Same components. Systems of record, integration, analytics, outcomes. What changes is the geometry.

The feedback loop is no longer a slow arc. It is closed, continuous, and running at machine speed. Outputs from the outcomes layer – now driven by agents – become inputs to the systems of record immediately, on the same operational cycle.

This is what falls out when agents can read, reason and write back inside a single automated workflow. Earlier articles in this series have examined pieces of this in isolation: agents querying live transactional systems, the commit moment where intent becomes durable state, load shapes that don’t look like human behaviour, the gap between transactional semantics and agent intent, the identity problem at the point of write.

Individually, those are pressures on components. Composed together, they describe a structurally different kind of system.

When the Loop Has No Damping

In control systems engineering, a feedback loop without damping doesn’t just run faster. It becomes capable of oscillation, resonance and runaway. Enterprise data architectures are not control systems in the formal sense, but the logic transfers with uncomfortable precision.
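The control-systems point can be sketched with the simplest possible feedback model. This is a first-order recurrence in which an error `e` is fed back each cycle and scaled by a loop gain `g`, so that `e[t+1] = g * e[t]`. It is a textbook toy, not a model of any real data platform, and the functions `simulate_loop` and `classify` are hypothetical. The error decays when `|g| < 1`. It runs away when `g > 1`, and it oscillates with growing amplitude when `g < -1`.

```python
def simulate_loop(gain: float, initial_error: float = 1.0, cycles: int = 10) -> list[float]:
    """Iterate e[t+1] = gain * e[t] and return the error at every cycle."""
    errors = [initial_error]
    for _ in range(cycles):
        errors.append(gain * errors[-1])
    return errors


def classify(gain: float) -> str:
    """Label the long-run behaviour of the first-order loop."""
    if abs(gain) < 1:
        return "damped: error decays"
    if gain == 1:
        return "undamped: error persists unchanged"
    if gain == -1:
        return "undamped: error flips sign forever"
    if gain > 1:
        return "runaway: error grows"
    return "oscillating: error flips sign and grows"


for g in (0.5, 1.0, -1.5, 1.5):
    trace = simulate_loop(g, cycles=5)
    print(f"gain={g:+.1f}  {classify(g):40s} {[round(e, 2) for e in trace]}")
```

In this picture, damping is anything that keeps the magnitude of the loop gain below 1.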

In the classic architecture, errors had a natural decay rate. A bad decision entered the environment slowly, was caught somewhere downstream, and was corrected before it fed back to its origin. The human wasn’t the only error filter – but the human was the final one, and the time it took to reach that point was itself a filter.

In the agentic architecture, that decay disappears. An agent writes a bad decision into a system of record. The next agent reads it as fact. Its output compounds the error. The one after that compounds it again. There is no natural interruption point – no pause, no moment where someone looks and says “that doesn’t feel right.”

The system doesn’t correct the error. It transmits it.
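The chain described above can be sketched with a toy shared store. In this hypothetical model, each agent reads the current value from a dictionary standing in for a system of record. It treats that value as fact and writes back a result carrying a small systematic bias. One run has no interruption point. The other adds a review step every few writes that restores a known reference value. The 2% bias, the review interval, the reference value and the `peak_drift` function are all illustrative assumptions, not properties of any real agent.

```python
from typing import Optional


def peak_drift(writes: int, bias: float, review_every: Optional[int] = None,
               reference: float = 100.0) -> float:
    """Largest relative drift from `reference` that any agent writes to the store.

    Hypothetical model: each agent reads the stored value as fact and writes
    back value * (1 + bias). If `review_every` is set, a review step restores
    the reference value after every `review_every` writes.
    """
    system_of_record = {"value": reference}
    worst = 0.0
    for step in range(1, writes + 1):
        observed = system_of_record["value"]               # read: treated as fact
        system_of_record["value"] = observed * (1 + bias)  # write-back: error compounds
        drift = abs(system_of_record["value"] - reference) / reference
        worst = max(worst, drift)
        if review_every and step % review_every == 0:
            system_of_record["value"] = reference          # checkpoint: drift reset
    return worst


# Illustrative assumptions: 50 writes, a 2% bias per write, review every 10 writes.
print(f"no checkpoint: {peak_drift(50, 0.02):.1%} peak drift")
print(f"review every 10 writes: {peak_drift(50, 0.02, review_every=10):.1%} peak drift")
```

With these numbers, peak drift reaches roughly 169% without a checkpoint and stays near 22% with one. The review step makes no agent more accurate. It only caps how many times an error can be re-read and re-written before something interrupts it, which is the role the human used to play.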

This is not about agent quality. Even a well-calibrated agent makes mistakes. What has changed is that the architecture now amplifies those mistakes rather than damping them. The classic architecture rarely exhibited this failure mode. Not because anyone designed it out, but because the human in the loop suppressed it.

Remove the human, and you remove error absorption, audit accretion and rate-limiting all at once, because they were never separate features. They were side effects of the same thing.

What the Industry Has – and What It Doesn’t

The industry recognises this, at least partially. It shows in the language: guardrails, observability, approval gates, output validation, constrained tool use. All of these are attempts to reintroduce control into the loop. The instinct is right. The execution is early.

Most of what exists today consists of compensating controls – a human approval here, a logging layer there, a constraint on a particular write path. They recreate fragments of what the human used to do. They do not recreate the underlying property the human provided: continuous, pervasive mediation across every interaction between outputs and inputs.

And they don’t scale. An approval gate on every agent write is not a control mechanism. It’s just the human in the loop again – which is exactly what the architecture was supposed to remove.
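A simple queue calculation shows why. Assume, hypothetically, that agents propose writes at a steady rate and a human reviewer clears approvals at a fixed, slower rate. Whenever proposals outpace review capacity, the backlog grows by the difference every hour, without bound. The rates and the `approval_backlog` function below are invented for illustration.

```python
def approval_backlog(writes_per_hour: float, approvals_per_hour: float, hours: int) -> list[float]:
    """Pending approvals at the end of each hour, using a deterministic fluid queue."""
    backlog = 0.0
    history = []
    for _ in range(hours):
        # New proposals arrive and reviewers clear what they can; the backlog never goes below zero.
        backlog = max(0.0, backlog + writes_per_hour - approvals_per_hour)
        history.append(backlog)
    return history


# Hypothetical rates: agents propose 600 writes/hour; one reviewer clears 40/hour.
pending = approval_backlog(writes_per_hour=600, approvals_per_hour=40, hours=8)
print(f"backlog after an 8-hour shift: {pending[-1]:.0f} writes")
```

With these rates the backlog grows by 560 writes every hour. The only way to keep the queue bounded is to throttle the agents to roughly reviewer speed, which puts the human back in the loop.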

The classic system didn’t need explicit governance of its feedback loop because the loop ran at human speed. The agentic architecture runs faster than governance was ever designed to operate. That mismatch isn’t a tooling gap. It’s structural.

The Question the Diagrams Ask

The two diagrams describe the same enterprise. Same systems, same data, same analytics, same outcomes. One has a human at the top. One doesn’t.

Everything that changes between them was never written down, never specified, never priced and never audited – because while the human was there, none of it needed to be.

The next articles in this series will explore what follows: how governance is being reconstructed at machine speed, whether systems of record can survive inside a closed loop, and what happens economically when protections that were always free turn out not to be.

For now, the question is simpler.

If the brake was always the human – and the human is now gone – what is holding this system together?

Nothing yet. And that’s the problem.

This article is part of the Databases in the Age of AI series.