SLC, MLC and TLC NAND flash differ in how many bits each cell stores. More bits per cell means lower cost and higher density – but also lower performance and reduced endurance. This article explains the trade-offs for enterprise storage.

Tag: Storage for DBAs

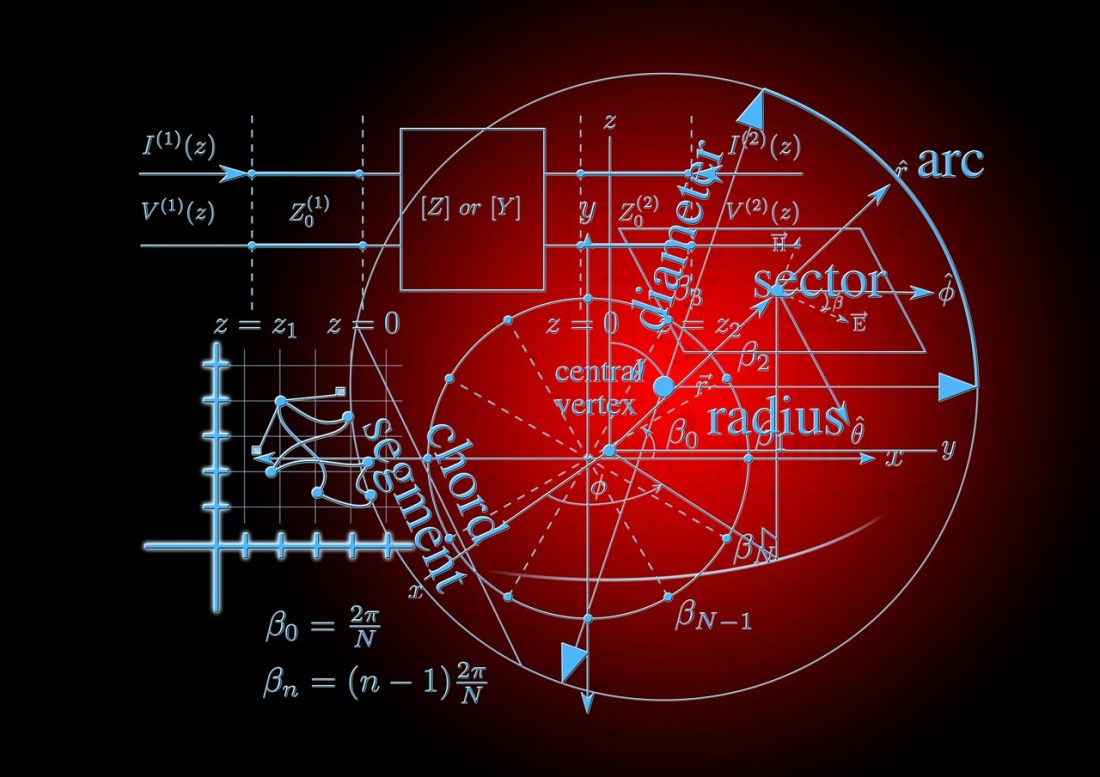

Understanding Flash: Blocks, Pages and Program / Erases

NAND flash memory stores data in pages but erases in blocks – a fundamental asymmetry that shapes everything from SSD performance to all-flash array design. This article explains the program/erase cycle and why it matters for database storage.

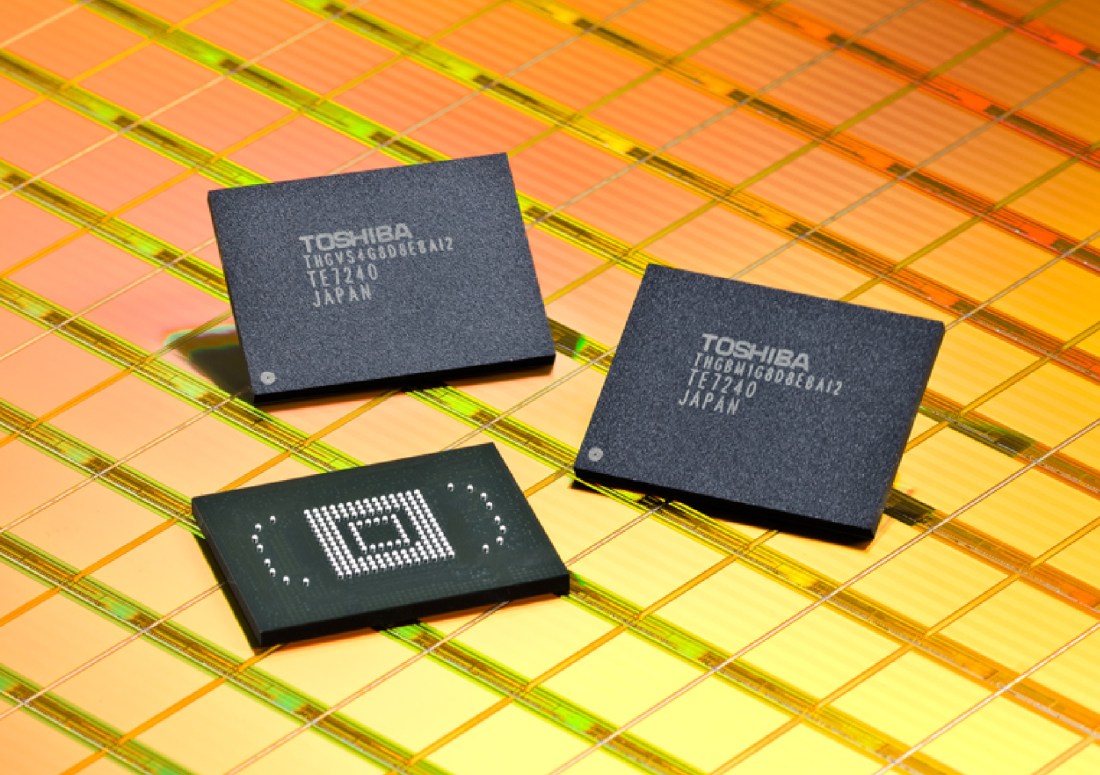

Understanding Flash: What Is NAND Flash?

Flash memory was invented around 1980 by Dr Fujio Masuoka at Toshiba, with the NAND variant following later that decade. This article explains how flash works, why it differs from EPROM and EEPROM, and why program and erase operations behave differently.

Understanding Disk: Caching and Tiering

Caching and tiering promise to hide the performance cost of spinning disk – but both rely on predicting the unpredictable, and the slowest tier always waits.

Playing The Data Reduction Lottery

Flash vendors routinely quote “effective capacity” figures based on optimistic data reduction assumptions – understanding what those numbers really mean is essential before signing a purchase order.

Storage Myths: Storage Compression Has No Downside

Storage-level compression buys capacity at the cost of CPU cycles and added read latency – understanding that trade-off matters more than the headline reduction ratios vendors put in their datasheets.

Storage Myths: Dedupe for Databases

Data deduplication promises capacity savings, but databases are one of the worst use cases for it – the technology’s assumptions break down against the entropy and I/O patterns of database workloads.

Understanding Disk: Over-Provisioning

When performance rather than capacity drives storage decisions, waste is the inevitable result – stranded terabytes, short-stroking, and a growing gap between what disk holds and what it can actually deliver.

Understanding Disk: Mechanical Limitations

Spinning disk hit its performance ceiling at 15k RPM over two decades ago – and physics, aerodynamics and economics mean it’s staying there. The consequences for latency are unavoidable.

Understanding Disk: Superpowers

Disk drives displaced tape by giving the read/write head the freedom to move – trading sequential dominance for random I/O capability. Understanding that physical design is where disk’s performance story begins.