In part one of this article I talked about Database Virtualisation and how I believe that it is the next trend in our industry. Databases – particularly Oracle databases – have held out against the rise of virtualisation for a long time, but as virtualisation products have matured and the drive to consumerise and consolidate IT services has increased, the idea of running production databases inside virtual machines has started to make real business sense. And to complete the perfect storm of conditions that make this not just a viable solution but a seriously attractive one, flash memory now enters the picture.

Why has it taken so long for virtualisation to be adopted with production databases? Oracle’s support policy is a factor, of course, along with their license policy (discussed later). But the primary reason, I’ll wager, is risk. And the risk is all around performance: how can you be sure that the addition of a hypervisor will not affect system performance? In particular, how can you ensure that performance remains predictable? It’s primarily a latency thing: you do not want to be adding extra code paths to the application calls where speed is of the essence. You cannot afford to be adding nanoseconds to your CPU calls and milliseconds to your I/O operations, because it’s all wait time, and it all adds up.

This is compounded because one of the most obvious goals in virtualisation is to run multiple different virtual databases on top of the same physical infrastructure. In the virtualisation world (whether looking at databases or not), each virtualised guest has its own workload pattern which includes the pattern of I/O it performs. However, as you overlay each different guest onto the same physical host, something interesting happens: the I/O pattern tends towards randomness.
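That drift towards randomness can be sketched in a few lines of Python. This is a toy model rather than a trace from a real hypervisor: the three guests, their block ranges and the strict round-robin arrival order are all assumptions made for illustration, and `sequential_fraction` is a helper name invented for this example.

```python
from itertools import chain

def sequential_fraction(blocks):
    """Share of requests that hit the block immediately after the previous one."""
    if len(blocks) < 2:
        return 0.0
    hits = sum(1 for prev, cur in zip(blocks, blocks[1:]) if cur == prev + 1)
    return hits / (len(blocks) - 1)

# Assumption: three guests, each reading 100 consecutive blocks from its own region.
guests = [list(range(start, start + 100)) for start in (0, 10_000, 20_000)]

# Assumption: the host sees the requests interleaved in strict round-robin order.
blended = list(chain.from_iterable(zip(*guests)))

print(f"single guest: {sequential_fraction(guests[0]):.0%} sequential")
print(f"three guests: {sequential_fraction(blended):.0%} sequential")
```

Each guest on its own is perfectly sequential, yet in this model the stream that reaches the shared storage contains no back-to-back sequential requests at all.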

Latency Matters

Latency is measured in units of time: nanoseconds for CPU cycles, microseconds for flash memory arrays, milliseconds for disk arrays, seconds for networks, but always units of time. And it’s lost time: time spent waiting instead of doing the thing we want to do. We care about latency because the operations for which latency is measured (e.g. reads and writes) happen frequently, perhaps thousands of times per second. Although those units of time may appear quite small, when you multiply them by their frequency you discover that they turn out to be significant portions of the total available time. And time is what we care about most: it’s the reason we upgrade computer equipment to faster models, why we drive too fast or complain bitterly about the UK’s slow progress in adopting LTE (or is that just me?).
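To put some rough numbers on that multiplication, here is a minimal Python sketch. The latency and frequency figures are illustrative assumptions rather than measurements, and `wait_seconds_per_second` is a name made up for this example.

```python
def wait_seconds_per_second(latency_s: float, ops_per_second: float) -> float:
    """Total time spent waiting on I/O for every second of wall-clock time.

    A result above 1.0 means the waiting is spread across concurrent sessions.
    """
    return latency_s * ops_per_second

OPS = 2_000  # assumption: reads per second issued by the database

disk_wait = wait_seconds_per_second(5e-3, OPS)     # assumption: 5 ms per read
flash_wait = wait_seconds_per_second(100e-6, OPS)  # assumption: 100 microseconds per read

print(f"disk:  {disk_wait:.1f}s of waiting per second")
print(f"flash: {flash_wait:.1f}s of waiting per second")
```

With these assumed figures the same workload accumulates around ten seconds of waiting per second on disk against a fraction of a second on flash.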

Disk arrays have horrible latency figures. If a CPU cycle takes only a nanosecond and accessing DRAM takes 100ns, waiting 10ms (so that’s 10,000,000ns) for a single block to be read from disk is like waiting a lifetime. Disk manufacturers can do little about this because somewhere a little metal arm has to move over a little spinning disk (seek time) and wait for it to rotate to the right place (rotational latency) before you can have your data. They have done their best to make that disk move as fast as possible (which is why it uses so much power and creates so much heat), but there are laws of physics which cannot be broken. Of course, one thing that disk does have in its favour is that once the disk head is in the correct place to read or write that data to or from the platter, it can access the following block really quickly. This is sequential I/O and it’s something that disks do much better than random I/O, for the obvious reason that every subsequent block read in a sequential I/O avoids the seek time and rotational latency thereby reducing the total average read or write time.
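The two mechanical delays can be turned into a back-of-the-envelope estimate of random I/O performance. The sketch below assumes a 15k RPM drive with a 3.5ms average seek time (my assumption, not a vendor figure) and ignores transfer time, caching and queuing; the function names are hypothetical.

```python
def rotational_latency_ms(rpm: int) -> float:
    """Average rotational latency: half a revolution, in milliseconds."""
    return 0.5 * 60_000 / rpm

def random_iops(avg_seek_ms: float, rpm: int) -> float:
    """Rough random IOPS for one disk: one seek plus half a rotation per I/O."""
    service_time_ms = avg_seek_ms + rotational_latency_ms(rpm)
    return 1_000 / service_time_ms

RPM = 15_000
AVG_SEEK_MS = 3.5  # assumption for illustration

print(f"rotational latency: {rotational_latency_ms(RPM):.1f} ms")
print(f"random IOPS:        {random_iops(AVG_SEEK_MS, RPM):.0f}")
```

Sequential I/O skips most of that service time for every block after the first, which is why the same disk looks so much better on sequential workloads.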

But hang on, what did we say before about virtualisation? The more virtual databases you fit onto the physical infrastructure (i.e. the density), the more random the I/O becomes. So as you increase the density, you get increasingly bad performance. Yet increasing the density is exactly what you want to do in order to achieve the cost savings associated with virtualising your databases… it’s one of the primary drivers of the whole exercise. Doesn’t that mean that disks are completely the wrong technology for virtualisation?

Luckily we have our new friend flash technology to help us, with its ultra-low latency. Flash doesn’t care whether I/O is random or sequential because it does not have any seek time or rotational latency. Why would it? There are no moving parts. A Violin Memory flash array can read a 4k block in under 100 microseconds. Even if you add a fibre-channel layer that still won’t take you much over 300 microseconds – and if you care that much about latency then InfiniBand is here to help, bringing the figure back down to around 100 microseconds again. Only flash memory has the ultra-low latency necessary for database virtualisation.

IOPS – The Upper Limit of Storage

One thing you do not want to happen when you virtualise your databases onto a consolidated physical platform is to find the ceiling of your I/O capabilities. Every storage system has an upper limit of the number of I/O operations that it can perform per second (known as IOPS) and when that ceiling is reached (known as saturation) things can get painful.

Why is this relevant to database virtualisation? Because when you virtualise, you overlay virtual images of databases onto a single physical host system. It’s like taking a load of pictures of your databases and superimposing them on top of each other. Your underlying infrastructure has to be able to deliver the sum of all of that demand, or everything on it will suffer.

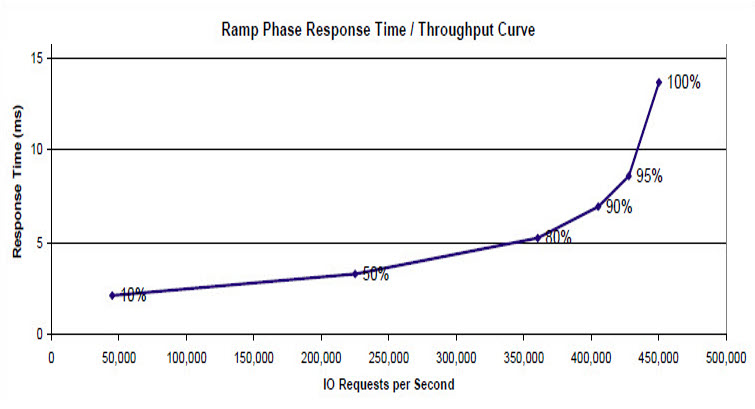
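The superimposing idea is easy to sketch: add up each guest’s demand interval by interval and compare the peak of the total with what the storage can deliver. Everything in this Python example is hypothetical, including the per-guest IOPS samples and the 1,500 IOPS ceiling.

```python
def combined_demand(guest_iops):
    """Sum the per-guest IOPS series interval by interval."""
    return [sum(interval) for interval in zip(*guest_iops)]

# Hypothetical IOPS samples for three guests over four intervals.
guests = [
    [200, 800, 300, 100],
    [500, 100, 900, 200],
    [100, 300, 700, 600],
]
STORAGE_CEILING_IOPS = 1_500  # hypothetical limit of the shared storage

total = combined_demand(guests)
peak = max(total)

print("combined demand:", total)
print("saturated" if peak > STORAGE_CEILING_IOPS else "within limits")
```

No single guest in this made-up example ever asks for more than 900 IOPS, but the combined peak exceeds the ceiling, which is the situation you want to avoid.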

Worse still, the latency you experience from an underutilised storage system will not be the same latency you will experience when pushing it to its peak capacity. As the number of IOPS increases, so will the latency of each operation. Disk systems saturate far quicker than flash systems because of the cost of all that seek time and rotational latency discussed earlier. However, disk array vendors know a few tricks to try and avoid this – the most obvious being overprovisioning (using far more physical disks / spindles than are required for the usable capacity) and short stroking (only using the outer edge of each disk’s platter in order to reduce seek time and increase the throughput – the outer edge of the platter has a larger circumference and has a greater bit density meaning more data can be delivered per rotation). They are great tricks to increase the number of IOPS a disk array can deliver… great, that is, if you are the vendor, because it means you get to sell more disks. For the customer though, this means a bigger disk array using more power, requiring more cooling, taking up more valuable data centre space and – here’s the punchline – costing more but wasting huge amounts of raw capacity.

This is why flash memory makes the ideal solution for virtualisation. For a start the maximum IOPS figures for disks versus flash are in different neighbourhoods: a single 15k RPM SAS disk can deliver around 175 to 210 IOPS. Admittedly you would expect to see more than one disk in an array, but let’s face it there would have to be a lot of those disks to get up to the 1,000,000 IOPS that a Violin Memory 6616 memory array can deliver (around 5,000 disks assuming a figure of 200 for the HDD). The Violin array is only 3U high and uses a fraction of the power that you would need from the equivalent monster of a disk array.
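The disk count above is simple division, shown here as a small Python sketch using the figures quoted in the paragraph. It counts spindles for raw IOPS only; RAID overhead, caching and controller limits are deliberately ignored, and `disks_needed` is a name invented for the example.

```python
import math

def disks_needed(target_iops: int, iops_per_disk: int) -> int:
    """Number of disks required to reach a target IOPS figure, rounded up."""
    return math.ceil(target_iops / iops_per_disk)

TARGET_IOPS = 1_000_000  # figure quoted for the flash array

for per_disk in (175, 200, 210):
    print(f"{per_disk} IOPS per disk -> {disks_needed(TARGET_IOPS, per_disk):,} disks")
```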

Surely that makes flash a default choice, but there’s an additional consideration – the predictable latency. At high levels of IOPS flash performs exactly as predicted – latency rises in a linear fashion. But with a disk array latency rises exponentially, resulting in a “hockey-stick” style graph. Let’s have a look at a recent disk array vendor’s SPC-1 benchmark for an example of this (and remember this set a world-record SPC benchmark so it’s a top of the range system):

[I’ll post more on this subject in a separate series as I want to share some more in-depth information on it, but I am kind of stuck at the moment waiting for more powerful lab gear… the servers I have had up until now just aren’t powerful enough to make my Violin arrays break into a sweat…]

So flash memory gives you the IOPS capabilities you need for virtualisation – with the additional advantage of protecting you against unpredictable latency when running at high utilisation.
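The hockey-stick shape near saturation can be illustrated with a textbook single-queue (M/M/1) approximation, where response time is the service time divided by one minus utilisation. To be clear, this is a swapped-in teaching model, not the benchmark data or any vendor’s behaviour, and the two service times are assumptions chosen only to contrast a device close to its ceiling with one far from it.

```python
def response_time_ms(iops: float, service_time_ms: float) -> float:
    """M/M/1 approximation: service time divided by (1 - utilisation)."""
    utilisation = iops * service_time_ms / 1_000
    if utilisation >= 1:
        raise ValueError("demand exceeds what this device can deliver")
    return service_time_ms / (1 - utilisation)

DISK_SERVICE_MS = 5.0   # assumption: ceiling of 200 IOPS
FLASH_SERVICE_MS = 0.1  # assumption: 100 microseconds per I/O

for iops in (50, 100, 150, 180, 190):
    disk = response_time_ms(iops, DISK_SERVICE_MS)
    flash = response_time_ms(iops, FLASH_SERVICE_MS)
    print(f"{iops:>3} IOPS  disk {disk:6.1f} ms  flash {flash:5.3f} ms")
```

In this model the slow device’s latency stays modest until utilisation approaches its ceiling and then climbs steeply, while the fast device barely moves because the same load leaves it almost idle.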

Oracle Licensing

The other major topic to talk about with database virtualisation is Oracle licensing. As everyone who has ever bought one will testify, Oracle licenses are very expensive. Since Oracle licenses by the CPU core and then applies a multiplication factor based on the CPU architecture (e.g. 0.5 for most x86 processors) you can quickly rack up a massive license bill (plus ongoing support) for some of the larger multi-core processors available on the market today. By virtualising, can you tie VMs containing Oracle databases to just a specific set of CPUs (Oracle calls this server partitioning), thus reducing cost?

The complicated answer is that it depends on the hypervisor. The simple answer is almost always no. In the world of Oracle there are two methods of server partitioning: soft and hard. Oracle’s list of approved hard partitioning technologies includes Solaris 10 Containers, IBM LPARs and Fujitsu PPARs – these are the ones where you license only a subset of your processors. Everything that’s not on the approved hard partitioning list requires every processor core to be licensed. And guess what’s on the list of soft partitioning products? VMware. You can read VMware’s own take on that here. The case of Oracle VM is a more complex one. In general OVM is considered soft partitioning and so a full complement of licenses is required, but there are methods for configuring hard partitioning (both for OVM on SPARC and OVM on x86) so that this license saving can be achieved.
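The difference between the two partitioning types is easiest to see with numbers. The Python sketch below multiplies cores by the 0.5 core factor mentioned earlier and rounds up; the host size, the pinned core count and the rounding rule are my assumptions for illustration, so check your own contract rather than relying on this.

```python
import math

def processor_licenses(cores: int, core_factor: float) -> int:
    """Licenses required: cores times the core factor, rounded up (assumed rule)."""
    return math.ceil(cores * core_factor)

CORE_FACTOR = 0.5   # figure quoted above for most x86 processors
HOST_CORES = 16     # assumption: two sockets of eight cores each
PINNED_CORES = 4    # assumption: cores the database VM is pinned to

soft = processor_licenses(HOST_CORES, CORE_FACTOR)    # every core in the host
hard = processor_licenses(PINNED_CORES, CORE_FACTOR)  # only the partitioned cores

print(f"soft partitioning: {soft} licenses")
print(f"hard partitioning: {hard} licenses")
```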

Flash memory has an angle here as well though. As I have discussed in my previous database consolidation posts, flash memory allows for a greater utilisation of your CPUs (because of the reduction in IOWAIT time), which means you can do more with the same resources. So by using flash you can either resist the need for more CPUs (and therefore more Oracle licenses) or actually reduce them.

Virtualisation Means Consolidation

There are some other challenges faced around virtualising databases. Many of them are the same as the challenges faced when consolidating databases: namely how to achieve a better density of databases per physical infrastructure (thereby realising more cost savings). One of the most important of these is memory (as in DRAM), which can often be the limiting factor when squeezing multiple virtualised databases into a confined physical space.

I’m not going to recycle the whole consolidation subject again here, since I (hopefully) covered all of these points in my series of articles on database consolidation. In this sense, you could consider database virtualisation a subset of database consolidation; effectively one of the methods for delivering it, although database virtualisation offers more than a simple consolidation platform.

I could probably write a whole load more on that subject, but as this blog entry is already long enough I’m going to just hand it over to my friends at Delphix instead.

OVM on x86 (which has Open Source XEN inside) being counted as hard partitioning vs XEN being counted as soft partitioning is just laughable… I’m still waiting for someone to show me how “xm vcpu-pin” under Open Source XEN e.g. 4.0 or 4.1 is different from the-same-XEN-code-base-inside-OVM-x86…

You could be waiting a long time my friend.