Last week, Andrew Mendelsohn gave a talk at the Enkitec Extreme Exadata Expo (“E4”) run in Texas by those excellent guys at Enkitec. Andrew is the SVP of Oracle’s Database Server Technologies group, so it’s fair to say he has his finger on the pulse of the Oracle roadmap for Exadata.

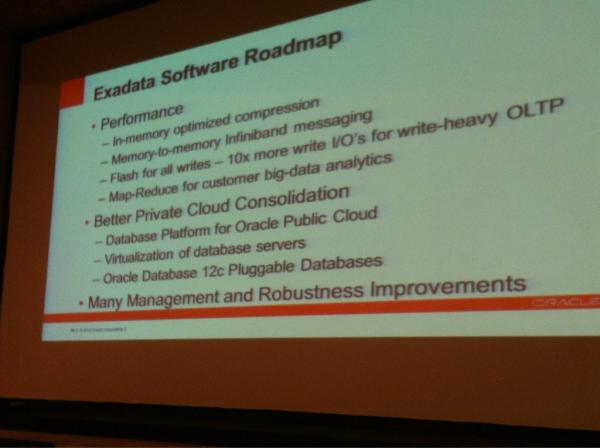

Big thanks to Frits Hoogland for tweeting a picture of the roadmap slide. As you can see there are some interesting things on there… I’m told that Andrew described these features as “coming within the next 12 months”. Of course, that could mean they arrive at the next Oracle Open World in a month’s time, or they could be 365 days away. I suspect some are coming sooner than others, but as usual it is all wild speculation. Never mind though, if there’s one thing I’m quite good at it’s wild(ly inaccurate) speculation.

The first one to consider is the in-memory optimized compression. Why is this important? Well, for Exadata, one reason is that no compression functionality can be offloaded to the storage cells, with their 168 cores (in a full rack). Instead it has to take place on the far less processor-heavy compute nodes (only 96 cores on a full rack X2-2). Of course, it may be that the cells are busy and the compute nodes are idle, in which case this is a happy coincidence and there would be plenty of resource available for compression (although actually if the cells are really busy they may be performing “passthrough”, where work is offloaded back to the compute nodes!). But the fact remains that since the Exadata design is asymmetrical, you are still limited to only using the CPUs in the compute nodes. If you want to know what that means, you really need to be watching these videos by Kevin Closson. It seems like everyone wants to do everything in memory these days, but then I guess that’s not surprising when the alternative is doing it on disk.

The second important feature is the “flash for all writes” write-back flash cache, enabling the database writer to use some of the 5.3TB of flash available in a full rack. Of course, this is effectively a cache, albeit a persistent one. The writes still have to be de-staged back to disk at some point. Andrew is claiming a 10x improvement here on the slide, but it will be interesting to see how that plays out – particularly if those writes are sustained and the area allocated on the flash cards starts to run out. Kevin posted some views about this on his site, although being Kevin he likes to stick to the facts rather than throw about the armfuls of wildly inaccurate speculation that you’ll find here.
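To make that worry about sustained writes a bit more concrete, here is a toy sketch in Python. It is my own illustration and not how Exadata actually manages its flash: the function name, the "blocks per tick" units and all of the numbers are made up. It simply models a cache that absorbs writes at full speed until it fills, after which it can only accept writes as fast as the disks behind it can de-stage them.

```python
def simulate_write_back(cache_capacity, incoming_per_tick, destage_per_tick, ticks):
    """Toy model of a write-back cache in front of slower disks.

    Returns a list with the number of writes accepted on each tick.
    All units are arbitrary "blocks"; nothing here reflects real Exadata figures.
    """
    dirty = 0        # blocks held in the cache and not yet written to disk
    accepted = []
    for _ in range(ticks):
        # Background de-staging frees up cache space first.
        dirty -= min(dirty, destage_per_tick)
        # New writes are absorbed only while free cache space remains.
        absorbed = min(incoming_per_tick, cache_capacity - dirty)
        dirty += absorbed
        accepted.append(absorbed)
    return accepted


# Hypothetical numbers: writes arrive 10x faster than the disks can de-stage them.
print(simulate_write_back(cache_capacity=50, incoming_per_tick=10,
                          destage_per_tick=1, ticks=8))
# prints [10, 10, 10, 10, 10, 5, 1, 1]
```

With these invented figures the cache soaks up the burst for five ticks and then the accepted rate collapses to the de-stage rate. Whether anything like that happens in practice depends entirely on how much flash is allocated to writes and how bursty the workload is, neither of which the slide tells us.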

Finally, the feature that caught my eye the most was “Virtualization of database servers”. Regular readers will know my absolute faith in the meeting of databases with virtualization technology, so for me this appears to be yet another clear sign (if you look for them hard enough you can always find them 🙂 ). I wonder if this means the introduction of Oracle VM onto the compute nodes. The x86 hardware is there, the InfiniBand network is there, so this could pave the way for OVM on Exadata with all of the resultant Live Migration technology… it’s a thought.

Let’s face it, Oracle is getting spanked in the virtualization arena by VMware, so they need to do something big to get people to notice OVM. With the release of EMC’s vFabric Data Director 2.0 it’s now time to fight or give up. And we all know Oracle likes a fight.

For my money OVM is actually a great product, but then so is VMware. And for all Larry’s words on virtualization being the best security model, it’s a technology that has been noticeably lacking on what is, after all, Oracle’s strategic platform for all database workloads…

Comments welcome… and feel free to call me out on what is clearly an obvious lack of insider knowledge.

The slide is full of “thoughtware.” The memory-to-memory IB messaging is odd. Oracle uses RDS zero-copy, which is a memory-to-memory DMA protocol, so I have no idea what that item might be.

I attended that session (via the web). Andy was very light on details on all these points. In fact I don’t recall any elaboration on the compression line item.

It’s possible the write-back caching may be coming sooner than we think. Andy Colvin, one of those excellent guys at Enkitec, wrote an interesting article on the subject here:

http://blog.oracle-ninja.com/2012/08/exadata-flash-write-back-sooner-than-we-think/

While I’m in the mood for posting links to interesting stuff written by the people from Enkitec, here’s a link to Kerry Osborne’s article about Exadata and OLTP:

http://kerryosborne.oracle-guy.com/2012/08/e4-wrap-up-part-i-oltp-bashing/

Kerry has an alternative viewpoint to me on Exadata, although we do at least have some shared middle ground 🙂 Whatever your own view is I recommend reading the views from both sides of the spectrum before making your decision. We can’t all be right, after all…