Storage for DBAs: Here’s a question I get asked a lot: “Does my database need flash?” In fact, it’s the most common question customers have, followed by its alternative version, “Does my database need SSD?” Often customers already have some SSDs in their disk arrays but still see poor performance, so really I ought to wind it back a level and call this article “Does my database need low latency storage?” That would be a much better headline from a technical perspective, but until I change the name of this site to LowLatencyDBA I’m sticking with the current title.

Flash is no longer a cutting-edge new technology; it’s a mainstream product sold by almost every storage vendor. This means that you or your organisation will probably already have some flash salesperson beating down your door to flog you some sort of flash product, whether it’s an all-flash array, a hybrid flash/disk system or a set of PCIe flash cards. While these products are diverse in nature, they all share two main characteristics: low latency and large numbers of IOPS. But how do you know whether you really need them?

In a later post I’ll be running through the questions which I think need to be asked in order to whittle down the massive list of flash vendors to the select few capable of servicing your needs. This, of course, will be difficult to achieve without being biased towards my own employer – but that’s a problem for another day. For now, here’s the first (and potentially most important) step: working out whether you actually need low latency flash storage in the first place.

Who Needs Flash?

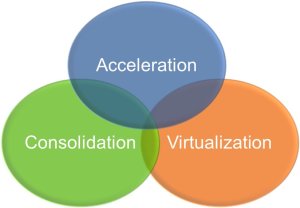

For the world of databases, there are three main reasons why you might want to switch to low latency flash:

Acceleration – perhaps the most obvious reason is to go faster. There are many reasons why people desire better performance, but they generally boil down to one of two scenarios: Not Good Enough Now and Not Good Enough For The Future. In the former, bad performance is holding back an application, denying potential revenue or incurring penalties in some way (either SLA-based financial penalties or simply the loss of customers due to poor service levels). In the latter, existing infrastructure is incapable of allowing increased agility, i.e. the ability to do more (offering new services for example, or adding more concurrent users).

Consolidation – always on the mind of CIOs and CTOs is the benefit of consolidating database and server estates. Consolidation brings agility and risk benefits as well as the new and important benefit of cost savings. By consolidating (and standardising) multiple databases onto a smaller pool of servers, organisations save money on hardware, on maintenance and administration, and on the holy grail of all cost savings: software license fees. If you think that sounds like an exaggeration, take a look at this article on Wikibon, which demonstrates that Oracle license costs account for 82% of the total cost of a traditional database deployment. Consolidation allows for fewer CPU cores, which means fewer licenses, but it also increases I/O as workloads are “stacked” on the same infrastructure. The Wikibon article argues that by moving to flash storage and consolidating, the total cost drops significantly – by around 26% in fact.

Virtualisation – an increasingly prevalent option in the database world. The use of server virtualisation technologies is allowing organisations to move to cloud architectures, where environments are automatically provisioned, managed and migrated across hardware. Virtualisation brings massive agility benefits but also carries a risk because, just like with consolidation, I/O workloads accumulate on the same infrastructure. Unlike consolidation though, virtualisation adds an extra layer of latency, making the I/O even more of a potential bottleneck. Flash systems now make this option practical, as hypervisor vendors begin to realise the potential of flash memory.

There is actually a fourth reason, which is Infrastructure Optimisation. If you have data centres stuffed with disk arrays there is every chance that they can be replaced by a small number of flash arrays, thus reducing power, cooling and real estate requirements and saving large amounts of money. But as this article is primarily targeted at databases I thought I’d leave that one out for now. Consider it the icing on the cake… but don’t forget it, because sometimes it turns out that there’s a lot of icing.

So now we know the reasons why, let’s have a look at which sorts of systems are suitable for flash and which aren’t, starting with the Performance requirement…

Databases Love Flash If…

- They create lots of I/O! I know, it sounds obvious, but more than once I’ve seen customers with CPU-bound applications that generate hardly any I/O. Flash is a fantastic technology, but it’s not magic.

- There is lots of random I/O. Now don’t take that the wrong way – sequential I/O is good too. But if you currently have a random I/O workload running on a disk system you will see the most dramatic benefit after switching that to flash. Here’s why.

- High amounts of parallelism. The simple fact is that a single process cannot drive anywhere near the amount of I/O that a good flash system can support. If you think of flash as being like a highway, not only is it fast, it’s also wide. Use all the lanes.

- Large IOWAIT times. If you are using an operating system that has a concept of IOWAIT (Linux and most versions of UNIX do, Windows doesn’t) then this can be a great indicator that processes are stuck waiting on I/O. It’s not perfect though, because IOWAIT is actually an idle wait (within the operating system, this is nothing to do with Oracle wait events) so if the system is really busy it may not be present.
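On Linux, the IOWAIT figure that tools such as vmstat and top report is derived from the iowait column of the aggregate cpu line in /proc/stat. The sketch below assumes a Linux host and uses made-up sample lines to show how the percentage is calculated from two snapshots. Because iowait counts idle time while I/O is outstanding, a busy CPU can hide it, exactly as described above.

```python
def parse_cpu_line(line: str) -> list[int]:
    """Parse the aggregate 'cpu' line of Linux /proc/stat into tick counters.

    Keeps user, nice, system, idle, iowait, irq, softirq, steal; guest and
    guest_nice are skipped because the kernel already counts them in user/nice.
    """
    fields = line.split()
    if not fields or fields[0] != "cpu":
        raise ValueError("expected the aggregate 'cpu' line from /proc/stat")
    return [int(x) for x in fields[1:9]]


def iowait_pct(before: list[int], after: list[int]) -> float:
    """Share of elapsed CPU time spent idle with I/O outstanding."""
    deltas = [a - b for a, b in zip(after, before)]
    total = sum(deltas)
    return 100.0 * deltas[4] / total if total else 0.0  # index 4 = iowait


def read_proc_stat(path: str = "/proc/stat") -> list[int]:
    """Take a live snapshot (Linux only); the first line is the 'cpu' total."""
    with open(path) as f:
        return parse_cpu_line(f.readline())


# Two made-up snapshots taken a few seconds apart (units: USER_HZ ticks)
t0 = parse_cpu_line("cpu  1000 0 500 8000 200 0 10 0 0 0")
t1 = parse_cpu_line("cpu  1300 0 650 8600 1140 0 20 0 0 0")
print(f"iowait: {iowait_pct(t0, t1):.1f}%")
```

On a real host you would call read_proc_stat() twice with a time.sleep() in between instead of using the sample lines.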

Those are all great indicators, but the next two should be considered the golden rules:

- I/O wait times are high. Essentially we are looking for high latency from the existing storage system. Flash memory systems should deliver I/O with sub-millisecond latency, so if you see an average latency of 8ms on random reads (db file sequential read), for example, you know there is potential for reducing latency to an eighth of its previous average value.

- I/O forms a significant percentage of Database Time. If I/O is only responsible for 5% of database time, no amount of lightning-fast flash is going to give you a big performance boost… your problems are elsewhere. On the other hand, if I/O makes up a large portion of database time, you have lots of room for improvement. (I plan to post a guide to reading AWR Reports pretty soon)
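The two golden rules combine into a rough, Amdahl's-law-style estimate: only the I/O share of database time benefits from lower latency. This is a sketch under stated assumptions, namely that I/O wait scales linearly with average latency and that everything else stays the same; the 8 ms and 1 ms figures are illustrative, with 1 ms as a conservative stand-in for sub-millisecond flash.

```python
def projected_db_time(db_time: float, io_fraction: float,
                      old_latency_ms: float, new_latency_ms: float) -> float:
    """Estimate DB time after a storage change (Amdahl's law applied to I/O).

    Assumes I/O wait scales linearly with average latency and that the
    non-I/O part of DB time (CPU, locks, network...) is unchanged.
    """
    io_time = db_time * io_fraction
    other_time = db_time - io_time
    return other_time + io_time * (new_latency_ms / old_latency_ms)


# Hypothetical workloads: 100 s of DB time, 8 ms reads today, 1 ms on flash
for io_share in (0.05, 0.70):
    new_time = projected_db_time(100.0, io_share, 8.0, 1.0)
    print(f"I/O = {io_share:.0%} of DB time -> {new_time:.1f} s "
          f"({100.0 / new_time:.2f}x faster)")
```

With I/O at 5% of DB time, an eightfold latency cut barely moves the total; at 70% it more than halves it.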

If any of this is ticking boxes for you, it’s time to consider what flash could do for the performance of your database. On the other hand…

Performance Won’t Improve If…

- There isn’t any I/O. Any flash vendor in the industry would be happy to sell you their products in this situation – and let’s face it you’ll get great latency! – but be realistic. If you don’t generate I/O, what’s the point? Unless of course you aren’t after performance. If consolidation, virtualisation or infrastructure optimisation is your aim, there could be a benefit. Also, consider the size of your memory components – if your database produces no physical I/O, could you consider reducing the size of the buffer cache? One of the big benefits of flash to consolidation is the ability to reduce SGA sizes and thus fit more databases onto the same DRAM-restricted server.

- Single threaded workloads. Sure your application will run slightly faster, but will that speed-up be enough to justify the change of infrastructure? I’m not ruling this out – I have customers with single-threaded ETL jobs that bought flash because it was easier (and cheaper) than rewriting legacy code, but the impact of low-latency storage may well be reduced.

- Application serialisation points. A session waiting on a lock will not wait any faster! Basically, if your application regularly ties itself in a knot with locks and contention issues, putting it on flash may well just increase the speed at which you hit those problems. Sometimes people use flash to overcome bad programming, but it’s by no means guaranteed to work.

- CPU-bound systems. CPU starvation is a CPU problem, not an I/O problem. If anything, moving to low-latency storage will reduce the amount of time CPUs spend waiting on I/O and thus increase the amount of time they spend working, i.e. in a busy state. If your CPU is close to the limit and you remove the ballast that is a disk system, you might find that you hit the limit very quickly.
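The CPU-bound point can be illustrated with a deliberately simple closed-loop model, offered as a sketch rather than a capacity-planning tool: each session alternates a fixed amount of CPU work with an I/O wait, with no overlap and no queueing. The 40 sessions, 2 ms of CPU per call and 16 cores are hypothetical numbers.

```python
def cpu_cores_demanded(sessions: int, cpu_ms: float, io_wait_ms: float) -> float:
    """Average cores kept busy by closed-loop sessions.

    Each session alternates cpu_ms of CPU work with io_wait_ms of I/O wait
    (no overlap, no queueing), so it is on-CPU cpu_ms / (cpu_ms + io_wait_ms)
    of the time.
    """
    return sessions * cpu_ms / (cpu_ms + io_wait_ms)


CORES = 16  # hypothetical server size
for io_wait in (8.0, 1.0, 0.2):  # disk-like -> flash-like wait per call
    demand = cpu_cores_demanded(sessions=40, cpu_ms=2.0, io_wait_ms=io_wait)
    verdict = "exceeds the CPUs" if demand > CORES else "fits"
    print(f"I/O wait {io_wait} ms -> {demand:.1f} cores demanded ({verdict})")
```

Once demand exceeds the available cores, sessions queue for CPU instead of waiting on I/O, which is the limit the bullet above warns about.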

If you are unfortunate enough to be struggling with a badly-performing application that fits into one of these areas, flash probably isn’t the magic bullet you’re looking for.

Consolidation and Virtualisation

This is a different area where it’s no longer valid to only look at individual databases and their workloads. The key factor for both of these areas is density, i.e. the number of databases or virtual machines that can fit on a single physical server. The main challenges here are memory usage and I/O generation: database SGAs tend to be large, but flash allows for the possibility of reducing the buffer cache; while I/O generation is a problem in the disk world because consolidated workloads tend to create more random I/O. Of course, with flash that’s not really a problem. I’ve written a number of articles on consolidation and virtualisation in the past – I’m sure I’ll be writing more about them in the future too.
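Why consolidated workloads look more random can be shown with a toy simulation, a sketch under simplifying assumptions: each workload reads its own region strictly sequentially, one block per request, and the shared storage receives the requests interleaved in a random order (real host and hypervisor schedulers differ). The function names are hypothetical.

```python
import random


def interleave_streams(num_streams: int, ios_per_stream: int,
                       seed: int = 42) -> list[int]:
    """Simulate several workloads that each read their own region sequentially.

    The shared array sees their requests interleaved in a random order
    (a simplifying assumption). Returns block numbers in arrival order.
    """
    rng = random.Random(seed)
    cursors = [i * 1_000_000 for i in range(num_streams)]  # far-apart regions
    remaining = [ios_per_stream] * num_streams
    arrivals = []
    while any(remaining):
        active = [i for i, left in enumerate(remaining) if left > 0]
        s = rng.choice(active)
        arrivals.append(cursors[s])
        cursors[s] += 1
        remaining[s] -= 1
    return arrivals


def sequential_fraction(blocks: list[int]) -> float:
    """Fraction of requests that start exactly where the previous one ended."""
    if len(blocks) < 2:
        return 1.0
    hits = sum(1 for prev, nxt in zip(blocks, blocks[1:]) if nxt == prev + 1)
    return hits / (len(blocks) - 1)


for n in (1, 2, 8, 32):
    frac = sequential_fraction(interleave_streams(n, 1_000))
    print(f"{n:>2} streams -> {frac:.0%} of requests look sequential")
```

Each stream on its own is perfectly sequential, but from the storage's point of view the share of sequential requests falls to roughly 1/n as more streams are stacked together.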

Summary

I work for a flash vendor – we want you to buy our products. We have competitors who want you to buy their products instead. If everyone in the industry is telling you to buy flash, how do you know if it’s relevant to you? Here’s my advice: make them speak your language and then check their claims against what you can see yourself.

Take some time to understand your workload. Look at the amount of I/O generated and the latency experienced; look at how random the workload is and the ratio of reads to writes (I’ll post a guide for this soon). Ask your (potential) flash vendor how much benefit you will see compared with your existing storage and then get them to explain why. If you’re a database person, make them speak in your language – don’t accept someone talking in the language of storage. Likewise if you’re an application person make them explain the benefits from an application perspective. You’re the customer, after all.
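As a starting point for that homework, here is a minimal sketch that summarises a workload from the deltas of Oracle instance statistics between two snapshots. The statistic names are assumed to match those shown in V$SYSSTAT and the AWR Instance Activity section (check them against your version), the numbers are made up, and treating single-block requests as "random" is only a rough proxy.

```python
def characterise(delta: dict[str, float], elapsed_s: float) -> dict[str, float]:
    """Summarise an I/O workload from deltas of Oracle instance statistics.

    Keys are assumed to match V$SYSSTAT / AWR 'Instance Activity' names;
    verify them against your database version.
    """
    reads = delta["physical read total IO requests"]
    writes = delta["physical write total IO requests"]
    multi = (delta["physical read total multi block requests"]
             + delta["physical write total multi block requests"])
    total = reads + writes
    total_bytes = (delta["physical read total bytes"]
                   + delta["physical write total bytes"])
    return {
        "iops": total / elapsed_s,
        "read_pct": 100.0 * reads / total,
        # single-block requests: a rough proxy for random I/O
        "single_block_pct": 100.0 * (total - multi) / total,
        "avg_io_kb": total_bytes / total / 1024,
    }


# Hypothetical one-hour snapshot delta (made-up numbers)
sample = {
    "physical read total IO requests": 36_000_000,
    "physical read total multi block requests": 1_800_000,
    "physical write total IO requests": 9_000_000,
    "physical write total multi block requests": 900_000,
    "physical read total bytes": 589_824_000_000,
    "physical write total bytes": 147_456_000_000,
}
for name, value in characterise(sample, 3600).items():
    print(f"{name:>16}: {value:,.1f}")
```

Combined with the average wait times for the relevant I/O events, figures like these give you something concrete to hold a vendor’s claims against.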

If your flash vendor can’t communicate with you in your language to explain the benefit you will see, there’s only one course of action: Get rid of them in a flash.

Footnote

Incidentally, if you live outside the UK and you’re wondering about the picture at the top of this article, check out this. If you live inside the UK you will know it’s a Cillit Bang reference… unless you live in a cave and shun the outside world – in which case, how are you reading this?


Hi, thanks again for this article, which provides a good summary of when one should consider moving to flash. However, I think it’s also important to remember that poor database design and poor index design are likely to produce access paths that generate massive amounts of random I/O, when one could fix this by properly designing the database schema and indexes and by using the right table structures to meet response time requirements. Too many times I have seen companies not even trying to understand why their database was I/O bound. Flash is simply seen as a “quick win”, and unfortunately it will compensate for the poor underlying design. But for how long? The most ridiculous situation was when I had to work on databases where a previous DBA had set optimizer_index_cost_adj=10, simply telling the Oracle optimizer “the physical disks are always better at doing random I/Os”… leading to massive and never-ending full index scans. I see flash as an amazing technology, but also as an easy way to stop thinking about good database/query design.

You are absolutely right. Would it surprise you to know that I regularly visit customers who want to use a flash system to fix (alleviate) their problems rather than fix the code?

I’m kind of a purist on these things, so the idea of throwing hardware at a coding problem makes me uneasy. But I also try to remain a realist – and some people simply have no choice. I have a customer whose application is third party and the supplier long ago stopped supporting their version, but it’s *much* cheaper for them to use flash than it is to pay for the upgrade or code fix. Application changes are costly, risky and take lots of time – plus there is no guarantee they will work. A hardware fix such as a faster server or storage system is simple to implement and test, avoiding risk. It’s a simple business risk analysis exercise.

I always make sure the customer knows that this is just a bandaid though. It always pays to be honest about these things – so if our solution is going to cover up a problem rather than resolve it we owe it to the customer to make sure they understand this.

But as a rather pragmatic customer said to me only last week, “There’s no difference between permanently hiding a problem and fixing it…”

Thanks for writing this up – we were planning to write up something similar to stop our sales guys throwing flash (in fact VMA) at everything to win deals! So now we can just point them to this! We’d rather look at the AWR and system statistics to make a call.

I don’t get the virtualization/consolidation play.

If you have today a number of physical databases around, either each connected to their own storage today or running on a shared storage then why would you need a Flash array to make consolidation/virtualization viable?

In my eyes you don’t, as long as you don’t experience performance problems today. In such a scenario you would either consolidate the database instances on a single or few hosts and dedicate their previous storage directly to them: NPIV and FibreChannel SAN make this possible. From an I/O perspective nothing really changes… only that now all the IO comes from a single or fewer large hosts with more initiator ports instead of multiple separate hosts. But the sum of all I/O per disk array and in total is the same – why would it change?

Or otherwise in the virtualization scenario: you virtualize the databases on single/fewer hosts and at the same time you are also virtualizing potentially separate storage resources. If you had 5 dedicated arrays before, each capable of let’s say 20,000 8k random write IOPS and you virtualize them – why would your virtualized workloads all together suddenly exceed that consolidated 100,000 8k random write IOPS that all arrays working together could deliver?

I don’t see any reason for that.

Your statement “consolidated workloads tend to create more random I/O” makes no sense to me as long as you are using the same storage infrastructure as before.

Furthermore, when virtualizing and consolidating you will quickly run into capacity problems. Enterprise databases that can benefit from the speed of NAND, and whose owners have the money to invest in NAND, are not just a couple of dozen GB. They are usually terabytes per database, but only a fraction of that is hot (typically <= 5%).

Violin and most other Flash kids on the block do not offer any kind of tiering, caching or integration with high-capacity, low-performance storage. So your advice would be either to throw everything on NAND, which makes it a super expensive solution, or to start fiddling around with certain indexes, tablespaces and temp tablespaces from your various databases and place them on dedicated Flash partitions while the rest resides on the existing infrastructure? Good luck with that in a 10+ database consolidation project, and when databases grow.

We seem to be having a disagreement over what consolidation means. Are you suggesting that you would just take all of the disparate storage arrays from your consolidation targets and connect them to the new consolidated system? Consolidation is partly about reducing management overhead, I don’t see how that would happen in your case.

For tier one databases, most disk arrays are over-provisioned in order to supply the required amount of IOPS. Consolidating those databases allows for a reduction in physical cores, thus reducing license cost. Changing the storage to flash allows for a significant reduction in storage footprint, as multiple floor tiles of disk arrays are replaced with a small number of flash units. This isn’t just a marketing story, we do this. So do our competitors.

You make a point about capacity, in which you attempt to force me into offering one of two choices: a “super expensive” all-flash solution or a complex customised environment with manual tiering. The latter is a bad idea and certainly not one that I’ve ever suggested. So yes, my advice is absolutely to use an all-flash solution. But if you think this is “super expensive” then you aren’t looking at it properly. Stop thinking about the price of the storage and start looking at the total cost of ownership of the whole stack. It’s very simple. If you don’t want to take my word for it, go and read the independent advice here:

http://wikibon.org/wiki/v/Virtualization_of_Oracle_Evolves_to_Best_Practice_for_Production_Systems

I guess I shouldn’t leave this reply without addressing your other comment:

Well it wouldn’t, would it? If you are using the same storage infrastructure as before then all the consolidated databases would use separate SANs etc. But what would be the point in that? If you aren’t planning on optimizing the infrastructure to achieve cost and management savings, what exactly is the point of embarking on a consolidation project in the first place?

I respectfully disagree with your response. Let me address certain points:

A) Capacity

Say you have a customer who maintains a large order management database. Its size, including all indexes, temp tablespace, archived redo logs, redo logs and staging, as well as test and development databases, is about 50 TiB. This is the typical customer I deal with: the traditional non-hyperscale, non-web-only customers who can afford all-flash arrays (AFA).

He has particular performance and capacity needs: his data grows about 10% per year on average, his hot data is about 5% of the total amount of capacity so let’s say 2TB.

However, the data changes – a lot. His internal processes dictate that large amounts of data are written and overwritten or deleted. So the hot data changes too, making it impossible to pin it to a 2-3 TB all-flash solution for just this data. Nor is it practical to combine management of all-flash solutions like Violin with his existing capacity SAN. It would be two separate worlds.

So, in all honesty: what do you suggest to this customer? Buying 50TB net storage (not your raw capacity advised) worth of Violin SAN for what… like 8M USD list price?

Sorry, my good sir, but there is no argument to justify this offer. Sure, he has to think about price per transaction, but at some point the marginal utility of the added performance diminishes, whereas he still needs 50TB of addressable storage, because this is what his database looks like today. Re-engineering it would probably run into the millions as well.

Let’s say that, by using AFA, a fourfold decrease in per-transaction run time shrinks his backup window to 1/8, doubles user throughput and accelerates certain batch jobs by 100%.

All good numbers, but in reality it looks very different: his 4h backup window today would be superb if it went down to, let’s say, 2h; with AFA it’s 30 mins, but there is no immediate benefit from the additional 1h30. His user capacity doubles, but his business does not immediately grow that much; 10% is the growth target for this FY. The batch jobs are similar to the backup window… faster is good, but the fastest is not needed. Certainly not for a multi-million rip-and-replace approach in his SAN fabric.

Does he need Flash? Yes, sir! Does he need AFA? Not so sure. What he needed (and in the end bought) was a NAND-based transparent tiering solution. Because sequential writes went straight through to the bandwidth-rich disk storage, they did not pollute the cache, and MLC flash had sufficient endurance for the life cycle; since it is only a cache, NAND eventually wearing out is not an immediate problem. 2x2TB heads in front of his existing SAN infrastructure were easy to deploy and integrate with his existing processes, which relied heavily on snapshots and remote replication, none of which AFA vendors usually offer. Heavy read activity was lifted from the SAN, so he could actually opt for a smaller-scale disk solution. So in the end he reduced footprint and increased performance. Not as much as with an AFA approach à la Violin, but at 10% of the cost and the complexity.

B) Consolidation creates more random I/O

Again you mention the benefit of consolidating over-provisioned/under-utilized servers in the same sentence as the use of AFA. But actually it has nothing to do with it. The server consolidation benefit could be realized without any change in the storage infrastructure. That’s what my example was aiming at. Of course, at this stage it also makes sense to look at options to consolidate the storage. And yes, NAND is more energy- and space-efficient per IO. But not per gigabyte.

Does AFA help make consolidation easier? Well, it depends. Certainly the sizing is easier, because it’s a very fast tier. But limited capacity and features and increased cost do not help. Just throwing NAND at the problem is not going to cut it.

Sure, performance goes up. But again, the utility stops at a certain point while the price of AFA keeps growing. If the database would genuinely benefit from every millisecond I save per transaction, then your argument of price/transaction would be true. But that’s not the real world. In the end the customer needs xx TB of net capacity and a fraction of that with low latency. I don’t see where an AFA approach would fit here, nor how it would change the required total capacity.

In fact there are very few customers who can actually utilize the full IOPS performance and bandwidth of even a single Violin array. Adding several of them purely for capacity is the same as adding more and more disk spindles for IOPS performance – it’s not clever. Instead you replace one stovepipe solution with another.

It may work on a greenfield site, with apps and databases optimized for every microsecond of latency. But that’s maybe what… 10% of your customers? The majority are the old, slow behemoths of database landscapes in public agencies or large and medium enterprises, where the database and software have grown over decades. Not something you can change overnight.

So back to the topic: you go to these customers and tell them that NAND magically solves all their problems if they would just use NAND for everything. As a justification you tell them that if they want to consolidate database A with 50,000 IOPS peak and database B with 25,000 IOPS peak on any single storage array, it suddenly needs 100,000 IOPS or more to cover this. Reason?

My point is: Flash helps, yes. But only to a certain degree and for a very specific problem: when your system is waiting solely on disk I/O.

Aging customer applications have all kinds of problems and you will likely run into another bottleneck (greetings from our friends mutex, latches and spin_locks) soon after you fixed the storage side.

The easiest approach is to use Flash for everything. That would make you guys very happy, I get that.

The more clever approach would be to put Flash where it’s actually needed and intelligently tier and integrate it with whatever type of large capacity storage. So that you create the solution of super-fast storage with real-world capacity.

Whatever the analysts tell us: the cost per GB of disk is still a fraction of the cost per GB of NAND, and that is not going to go away in 2 years. Compare the price of Violin MLC with an array of similar capacity built out of conventional 10k disks. It’s not a 50% difference. It’s 500%. If all I needed was performance then of course, why bother? But that’s not reality. I need performance AND capacity. But not extreme performance.

A NAND-cache layer is what I need most. It gives me time and money to bridge the gap between massive I/O systems of inefficiently written code to all in-memory systems with efficient code in 5 years.

Good day, my friend

I’m very glad that you took the time to write such a comprehensive comment – and I welcome this from any reader – but if I were to reply to every point here it would result in an equally long response. And to be honest, I’m not sure anyone would benefit, since you clearly have your opinion and I have mine. So I will try and keep my responses brief. I’ll probably fail though.

Your understanding of the price of flash is way, way off. Obviously I cannot discuss exact prices publicly, but your estimated list price for 50TB (usable) of Violin is about 7-8 times too high. And that’s the *list* price. You believe that flash memory arrays are vastly more expensive than disk arrays, yet I have had customers show me quotes from HDD array manufacturers which had a higher cost per GB than that of Violin. You believe that this point is still some years away – I have seen hard evidence that it has already arrived.

Yet there will always be cheaper disk arrays. Just like there will most probably always be cheaper flash arrays. To get a better price you can simply sacrifice enterprise functionality such as high availability, manageability, serviceability, etc. On paper that works, so then you can argue that there are less expensive solutions available – but in the “real world” you described, most customers require those enterprise solutions.

My point is that the storage is almost always a fraction of the total cost of ownership. When you consider the complete cost of the hardware and software, it’s almost always the case that software licenses (plus support and maintenance) make up the bulk of the cost – with Oracle being the largest single culprit. If the storage allows you to *significantly* reduce the cost of the software licenses then it has a much more positive impact on TCO than the negative impact of what you pay in $/GB.

You will argue that this isn’t always the case. I cannot deny that – every system is different and I make no claims that flash in general or Violin in particular is a golden bullet that will magically make your performance go up and your bills go down. That’s why I wrote this article in the first place, to show that, like any technology, flash has its place. But the weight of evidence is mounting: I have customers doing this, IBM TMS has customers doing this, EMC will soon have customers doing this. At some point, your beloved disk arrays will cease to be used in tier one storage solutions and will take up their rightful place as a solution for archive data.

“In fact there are very few customers who can actually utilize the full IOPS performance and bandwidth just from a single Violin array.”

I need to reply to this sentence: Yes… exactly! With disk systems IOPS was a constraint. With flash systems IOPS is not a constraint – it is effectively an unlimited resource. I fear that you are entirely missing the point: nobody should ever have to worry about IOPS anymore because IOPS is not what holds back applications (and therefore businesses). The number one enemy here is *latency*. IOPS is a distraction we no longer need to pay attention to. It is ridiculous to look at the massive IOPS capabilities of flash systems and suggest that they are in some way over-provisioned – the technology simply works in a way that delivers almost unusable IOPS capabilities, so cross IOPS off your list of potential issues and start focussing on the real problem.
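The IOPS-versus-latency argument can be made concrete with Little's law: the I/O rate a workload achieves equals the number of I/Os it keeps in flight divided by the latency of each one. This is also why parallelism matters. The sketch assumes the storage's IOPS ceiling acts as a hard cap (in reality latency rises as an array approaches its limit), and the 20,000 and 500,000 IOPS ceilings are hypothetical.

```python
def achieved_iops(outstanding_ios: float, latency_ms: float,
                  device_max_iops: float) -> float:
    """Little's law (throughput = concurrency / latency), capped by the device.

    outstanding_ios: average number of I/Os in flight across all sessions
    latency_ms:      average I/O latency in milliseconds
    device_max_iops: hypothetical hard ceiling of the storage system
    """
    if latency_ms <= 0:
        raise ValueError("latency must be positive")
    return min(outstanding_ios / (latency_ms / 1000.0), device_max_iops)


# One session doing synchronous single-block reads keeps one I/O in flight
print(achieved_iops(1, 8.0, 20_000))     # disk-like latency
print(achieved_iops(1, 0.5, 500_000))    # flash-like latency
print(achieved_iops(64, 0.5, 500_000))   # 64 parallel sessions on flash
```

A single session is limited to roughly 1/latency I/Os per second however large the array’s ceiling is, so lowering latency helps every session directly, while the IOPS ceiling is only approached when many I/Os are kept in flight.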

It is latency that introduces delays into our systems. These delays can be directly associated with *business* costs, whether it be in the form of users who work less efficiently or batch jobs that fail to complete in time. You may say that your example system does not have these problems – again I reiterate that I am not trying to solve problems that do not exist. If you have built a system that has no latency issues, I commend you. And as you rightly point out, some applications have concurrency issues which mean as soon as you clear the I/O bottleneck you run into a different kind of brick wall. But in my position I get to see a lot of database systems – and by far the most common theme is I/O contention. If I can give my customers back their “lost application time” then they are happy. If I can also save them large amounts of license fees, resulting in a flash system which pays for itself within a matter of months, then the argument is too compelling to overlook.

I’m interested to see that my claim about multiple virtualised workloads causing I/O patterns to tend from sequential towards random now has a name: “The I/O Blender Effect”:

http://searchvirtualstorage.techtarget.com/definition/I-O-Blender

I started to read your posts today – what outstanding work! Keep going, mate.

How is the post “a guide to reading AWR Reports” coming along?

Thank you. I must confess that posting has been on the back burner recently. But maybe I’ll find some new inspiration soon.