Storage for DBAs: Ever been to one of those sushi restaurants where the food comes round in dishes on a conveyor belt? As each dish travels around the loop you eye it up and, as long as you can make your mind up in time, grab it. However, if you are as indecisive as me, there’s a chance it will be out of range before you come to your senses – in which case you have to wait for it to complete a further full revolution before getting another chance. And that’s assuming someone else doesn’t get to it first.

Let’s assume that it takes a dish exactly 4 minutes to complete a whole lap of the conveyor belt. And just for simplicity’s sake let’s also assume that no two dishes on the belt are identical. As a hungry diner you look in the little menu and see a particular dish which you decide you want. It’s somewhere on the belt, so how long will it take to arrive?

Probability dictates that it could be anywhere on the belt. It could be passing by right now, requiring no wait time – or it could have just passed out of reach, thus requiring 4 minutes of wait time to go all the way round again. As you follow this random method (choose from the menu then look at the belt) it makes sense that the average wait time will tend towards half way between the min and max wait times, i.e. 2 minutes in this case. So every time you pick a dish you wait an average of 2 minutes: if you have eight dishes the odds say that you will spend (8 x 2) = 16 minutes waiting for your food. Welcome to the disk data diet, I hope you weren’t too hungry?

Now let’s consider an alternative option, where you order eight dishes from the chef and he or she places all of them sequentially (i.e. next to each other) somewhere on the conveyor belt. That location is random, so again you might have to wait anywhere between 0 and 4 minutes (an average of 2 minutes) for the first dish to pass… but the next seven will follow one after the other with no wait time. So now, in this scenario, you only had to wait 2 minutes for all eight dishes. Much better.

I’m sure you will have seen through my analogy right from the start. The conveyor belt is a hard disk and the sushi dishes are blocks which are being eaten / read. I haven’t yet worked out how to factor a bottle Asahi Super Dry into this story, but I’ll have one all the same thanks.

Random versus Sequential I/O

I have another article planned for later in this series which describes the inescapable mechanics of disk. For now though, I’ll outline the basics: every time you need to access a block on a disk drive, the disk actuator arm has to move the head to the correct track (the seek time), then the disk platter has to rotate to locate the correct sector (the rotational latency). This mechanical action takes time, just like the sushi travelling around the conveyor belt.

Obviously the amount of time depends on where the head was previously located and how fortunate you are with the location of the sector on the platter: if it’s directly under the head you do not need to wait, but if it just passed the head you have to wait for a complete revolution. Even on the fastest 15k RPM disk that takes 4 milliseconds (15,000 rotations per minute = 250 rotations per second, which means one rotation is 1/250th of a second or 4ms). Admittedly that’s faster than the sushi in my earlier analogy, but the chances are you will need to read or write a far larger number of blocks than I can eat sushi dishes (and trust me, on a good day I can pack a fair few away).

What about the next block? Well, if that next block is somewhere else on the disk, you will need to incur the same penalties of seek time and rotational latency. We call this type of operation a random I/O. But if the next block happened to be located directly after the previous one on the same track, the disk head would encounter it immediately afterwards, incurring no wait time (i.e. no latency). This, of course, is a sequential I/O.

Size Matters

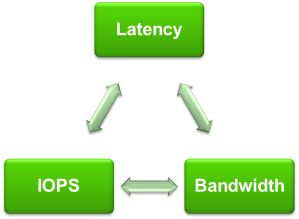

In my last post I described the Fundamental Characteristics of Storage: Latency, IOPS and Bandwidth (or Throughput). As a reminder, IOPS stands for I/Os Per Second and indicates the number of distinct Input/Output operations (i.e. reads or writes) that can take place within one second. You might use an IOPS figure to describe the amount of I/O created by a database, or you might use it when defining the maximum performance of a storage system. One is a real-world value and the other a theoretical maximum, but they both use the term IOPS.

When describing volumes of data, things are slightly different. Bandwidth is usually used to describe the maximum theoretical limit of data transfer, while throughput is used to describe a real-world measurement. You might say that the bandwidth is the maximum possible throughput. Bandwidth and throughput figures are usually given in units of size over units of time, e.g. Mb/sec or GB/sec. It pays to look carefully at whether the unit is using bits (b) or bytes (B), otherwise you are likely to end up looking a bit silly (sadly, I speak from experience).

In the previous post we stated that IOPS and throughput were related by the following relationship:

Throughput = IOPS x I/O size

It’s time to start thinking about that I/O size now. If we read or write a single random block in one second then the number of IOPS is 1 and the I/O size is also 1 (I’m using a unit of “blocks” to keep things simple). The Throughput can therefore be calculated as (1 x 1) = 1 block / second.

Alternatively, if we wanted to read or write eight contiguous blocks from disk as a sequential operation then this again would only result in the number of IOPS being 1, but this time the I/O size is 8. The throughput is therefore calculated as (1 x 8) = 8 blocks / second.

Hopefully you can see from this example the great benefit of sequential I/O on disk systems: it allows increased throughput. Every time you increase the I/O size you get a corresponding increase in throughput, while the IOPS figure remains resolutely fixed. But what happens if you increase the number of IOPS?

Latency Kills Disk Performance

In the example above I described a single-threaded process reading or writing a single random block on a disk. That I/O results in a certain amount of latency, as described earlier on (the seek time and rotational latency). We know that the average rotational latency of a 15k RPM disk is 4ms, so let’s add another millisecond for the disk head seek time and call the average I/O latency 5ms. How many (single-threaded) random IOPS can we perform if each operation incurs an average of 5ms wait? The answer is 1 second / 5 ms = 200 IOPS. Our process is hitting a physical limit of 200 IOPS on this disk.

What do you do if you need more IOPS? With a disk system you only really have one choice: add more disks. If each spindle can drive 200 IOPS and you require 80,000 IOPS then you need (80,000 / 200) = 400 spindles. Better clear some space in that data centre, eh?

On the other hand, if you can perform the I/O sequentially you may be able to reduce the IOPS requirement and increase the throughput, allowing the disk system to deliver more data. I know of Oracle customers who spend large amounts of time and resources carving up and re-ordering their data in order to allow queries to perform sequential I/O. They figure that the penalty incurred from all of this preparation is worth it in the long run, as subsequent queries perform better. That’s no surprise when the alternative was to add an extra wing to the data centre to house another bunch of disk arrays, plus more power and cooling to run them. This sort of “no pain, no gain” mentality used to be commonplace because there really weren’t any other options. Until now.

Flash Offers Another Way

The idea of sequential I/O doesn’t exist with flash memory, because there is no physical concept of blocks being adjacent or contiguous. Logically, two blocks may have consecutive block addresses, but this has no bearing on where the actual information is electronically stored. You might therefore say that all flash I/O is random, but in truth the principles of random I/O versus sequential I/O are disk concepts so don’t really apply. And since the latency of flash is sub-millisecond, it should be possible to see that, even for a single-threaded process, a much larger number of IOPS is possible. When we start considering concurrent operations things get even more interesting… but that topic is for another day.

Back to the sushi analogy, there is no longer a conveyor belt – the chefs are standing right in front of you. When you order a dish, it is placed in front of you immediately. Order a number of dishes and you might want to enlist the help of a few friends to eat in parallel, because the food will start arriving faster than you can eat it on your own. This is the world of flash memory, where hunger for data can be satisfied and appetites can be fulfilled. Time to break that disk diet, eh?

Looking back at the disk model, all that sitting around waiting for the sushi conveyor belt just takes too long. Sure you can add more conveyor belts or try to get all of your sushi dishes arranged in a line, but at the end of the day the underlying problem remains: it’s disk. And now that there’s an alternative, disk just seems a bit too fishy to me…

Storage architecture shapes database behaviour – and that relationship is evolving again. If you found this series useful, you might also be interested in Databases in the Age of AI, which explores how AI agents are changing the assumptions at the heart of enterprise data systems.

Brilliant post, thank you.

Hi,

Thanks again for this new blog post.

Have a few questions regarding disk :

1) what is the impact of I/O size increasing on disk drives in terms of I/Ops ? Doesn’t it take much more time to service a large number of blocks within a single I/O compared to a 1 block I/O ? Can we really consider that if my disk can service 200 I/Ops (with 1 bloc unit per I/O) then, whatever the I/O size is (and provided blocks are contiguous for each large I/O) , this disk drive will be able to service 200 large I/Os within 1second ?

2) Regarding the concept of random I/O, where does it apply ? from an application or from a disk perspective ? what i mean is the following :

Let’s say i have an application which can generate some sort of workload : random I/Os, sequential I/Os. From an application perspective let’s say that i ask for Random I/Os of 1MB size.

The application isn’t aware of where the blocks are physically located on the disk drive. So it could be that my 1MB I/O is actually splitted and the disk has too complete many single bloc I/Os when i’m expecting a single 1M I/O. But it could also be that each 1MB “random” I/O is contiguous with previous and next one on disk. In that case, this would mean i’m doing sequential access. And same applies to sequential I/Os which could be in the end random.

What i do not get exactly is the following : When a benchmark tool is performing different Random I/O batch with a distinct bloc size, how can we garantee it’s really random ? how can the application can garantee the blocs are not contiguous ?

Also regarding I/O size, from an application perspective, requesting a single 1MB I/O looks like to sequential activity, while requesting a single bloc I/O is something which is random.

So

1) is difference between random/sequential to be considered from a disk drive perspective or from an application perspective ?

2) Can a big 1MB single I/O be considered as random, or does it all depend on how blocs are spread on the disk drive ?

Thanks again for sharing. I Know i keep focused on Disk drives …. 😛

Hi

Always remember that there are many layers between the application and the physical data stored on persistent storage. Some of those layers are visible to you, some less so. When it comes to issuing larger IO requests there will be opportunities for increased efficiency throughout the stack, but by far the biggest differentiator will be from disk due to the seek time and rotational latency. Think of these as a type of “I/O tax” that must be paid for each I/O operation – no matter what size. It’s obvious that if you have to pay the same tax for any I/O size you will want to get the maximum return possible in order to pay the taxman the least number of times.

There are potential benefits (and drawbacks) to performing sequential I/O within Oracle itself, i.e. at the application layer, but that wasn’t the point of my article – I am only discussion what happens on a disk here. In fact, as you mentioned, if your system is incorrectly configured it’s possible that what should be large sequential I/Os are actually causing lots of smaller I/Os on the disk array. This is often an issue with “stripe size” and is discussed in the Performance Tuning Guide here:

http://docs.oracle.com/cd/E11882_01/server.112/e16638/iodesign.htm#PFGRF94386

Sometimes it can be difficult to determine if your I/O is being performed in the manner you expect, particularly on disk drives where both the stripe size and the stripe width are variable, meaning different LUNs could have different settings. On flash arrays, at least in the case of Violin, the RAID configuration (and thus the striping) is different and – in my opinion – much simpler both for configuration and monitoring. I have an article planned for that later in this series.

Going back to your point about application versus disk, yes it’s possible that the application could request multiple single blocks which happen to be adjacent on disk. This is what the I/O Scheduler is for in the OS – it will merge these many single I/Os into a smaller number of sequential I/Os. The application layer doesn’t have to be aware of this, it’s all taking place further down the stack.

As always, the number of layers in the stack between the application and the physical storage is both a blessing and a curse.

Thanks a lot, for your detailed answers. I really get it better with your explainations. I hope there are lots of readers on your blog because afaik, there’s no such equivalent quality stuff on this topic elsewhere on the web. I guess because one must have a registered wordpress account explains why there are.not that much comments. Thanks again, can’t wait to read next posts. Your stuff deserves to be presented at any 1st class oug conference 😉

Thank you – I really appreciate the feedback as it makes it feel worthwhile. Sometimes blogging can seem like shouting into the darkness 🙂

There are some great bloggers out there who do a good job of explaining this stuff – check my blog roll. Also, only this week I discovered that James Morle of Scalabilities is writing some excellent material on database I/O:

http://www.scaleabilities.co.uk/author/morlej/

Now I need to get on with writing the next article…

1. Real great blog!

2. When one specifies “Sequential I/O” in an RFP (for a Video Recording system, which writes and deletes FIFO), is this a requirement for the application, the O/S, the storage?

Thanks,

It will be for the storage. Video images are large files so naturally they are written sequentially.

Love sushi – and your use of the Sushi shop here is simply brilliant – well done sir!

I would however like you to discuss MS SQL and Windows more (that’s the space I’m in), if possible?

I also think putting some meat on the bone, with real world examples would be helpful e.g system x performance was 123, then moving to Flash was transformed to 123456789 (or 0.123456789 – depending on the scale!) – adding some high-level costs in would also help (but that may prove commercially difficult?)

Thanks again.

Hmm, I try to include Windows and MS SQL but I’m lacking in the same level of experience that I have with Oracle.

I take your point about real world examples but I’m loath to make this site too much of a Violin Memory marketing portal as I don’t think that’s what visitors want. And you are right in guessing that I am not able to talk about pricing.

Food for thought. I’ll have a think about how to present those sorts of details.

Hi,

Very nice and well presented blog, a pleasure to read.

In the Size Matters paragraph, there’s something I was expecting, that have been busying me for days and nights, but couldn’t find it.

One of our Oracle 11gR2 machine running multiple instances (+40) (Linux, w/ THP SAN storage) is experiencing very high IOwait usage (top).

During our investigations we found out that is was obviously correlated to disks service times climbing high (from 3-4 ms to +20 ms), but the reasons of this kept obscure for days.

We then started to measure the avg request size during these high IOwait periods, and we could directly link this to the service times observed.

Indeed, the bigger the request size is, the higher the service time gets. When using requests size ~20k latency (svc time ) is good, but Oracle seems sometimes to generate +300k request size that impacts svc time to the + 20 ms values. And this causes the high IOwait load.

Further digging, our DBA’s informed us that since 11g, table scans were not any more backed by SGA (shared instance cache) but were considered as less costly by doing physical IO’s !

And these requests are sized based on a coefficient (that’s still obscure for me, not a DBA 🙂 ) up to 512 kB size….

We are still thinking on how to proceed now:

¤ reduce this coefficient to limit the size of IOs and the impact on the server load, but without impacting the disk subsystem throughput ?

¤ reconfigure the instances to run in pre 11g mode (FTS should be backed on SGA) ?

And definitely, flash storage as an intermediate system cache (next smartIO feature of Veritas) …

Brem

Ok, this could be complicated.

Oracle performs read I/Os up to but no more than the size of the parameter DB_FILE_MULTIBLOCK_READ_COUNT database blocks. So multiply the database block size by the value of this parameter to find your largest I/O size.

http://docs.oracle.com/cd/E11882_01/server.112/e40402/initparams056.htm#REFRN10037

The whole thing about the buffer cache is not, in my opinion, the problem. Oracle has always attempted not to pollute the buffer cache with blocks read from full table scans, first by reading them into the least recently used end of the LRU chain and subsequently by performing them as direct (non-buffered) I/Os.

If you are using disk storage then large sequential I/Os should perform well…ish. Obviously, if all things are equal, the service time of a large I/O will be higher than a small I/O because there is more work to be performed. What’s possible in your case though is that the sequential I/O might be getting converted into lots of random I/Os on the storage.

An example of this would be if you used snapshots or clones on the disk array. This redirect-on-write technology can frequently result in blocks which were previously contiguous on disk being relocated so that they are now in disparate locations. The result is that, although the database thinks it is performing a single, large sequential I/O the disk array actually has to go and fetch multiple, small random I/Os to service it.

I’d start by looking at the activity on the array…

Chris,

Couple of things, first I’ve noticed you’ve used awr A lot. I’ve got a question about snap_id’s. Am I crazy that I think the headings for snapshots are not right which causes one to be an hour off in specifying snap_id’s? My thinking is when wanting to match up snap_ids to time of day, where the headings say “Snap Started” and “Snap Time” they should say “Snap Ended Time”.

Second, you mention above that large sequential I/Os can become a bunch of random I/Os. We have an old array, likely no ssd, I am not sure. We have everything going through asm. I am not sure how the disk guy does striping, and / or if asm does striping, but I believe striping is involved. If I have a normally created database on red hat linux (forgetting your cloning concern) will my full table scans normally result in the ideal “large sequential I/Os”. (not figuring in a track needs to end and get on to the next track). What I mean is with the os involved, and asm / oracle involved, and striping involved, and the array involved and the array cache involved do my full table scans end up mostly doing large sequential i/os operating in effect in the fastest way possible?

Thanks, Lynn

Hi

Not sure I understand your question about snapshots. When you talk about headings which say “Snap Started” where are you seeing that?

You are absolutely right that the many layers between database and persistent media make it hard to tell what is actually happening. However, striping is usually done at a far larger granularity than that of, for example, Oracle blocks. The illustrate that point, consider that Oracle ASM stripes data at the Allocation Unit (AU) level – with the default AU size typically 1MB. If you remember that the default Oracle blocksize is 8k then, with DB_FILE_MULTIBLOCK_READ_COUNT also set to its default value, the maximum size of a “sequential” read from a datafile (i.e. db file scattered read) will be 1MB. So even the largest physical read by Oracle will (should) only retrieve blocks from a single AU.

The only way to be sure, unfortunately, is to use tools to examine what’s happening at each level. You can use AWR in Oracle, my friend Bertrand’s asm_metrics.pl script (http://wp.me/p2NC5f-mF) for ASM, iostat in Linux and whatever array-based tools your storage comes with. You want to see a rough correlation between the number of IOPS each level thinks is taking place, otherwise something is happening. But that assumes no other databases, servers or whatever are generating load on the storage at the same time…

Chris,

When you asked:

“Not sure I understand your question about snapshots. When you talk about headings which say “Snap Started” where are you seeing that?”

I am talking about matching snap_id’s in dba_hist_sqlstat with the heading that comes out when running awrrpt.

Here is a sample:

I ran a full scan query with sql_id 974d5nygwpnk5 at 7:55 am.

At 8:05 am, here is what dba_hist_snapshot says (which I agree with):

SNAP_ID BEG_INTERVAL_TIME END_INTERVAL_TIME

———- —————————————- ————————————–

4918 31-MAR-17 07.00.20.613 AM 31-MAR-17 08.00.25.889 AM

Here is the startup of awrrpt at 08:10 am (which I don’t agree with “Snap Started” it should say “Snap Ended”:

Instance DB Name Snap Id Snap Started Level

———— ———— ——— —————— —————————–

EPM91CLN EPM91CLN 4910 31 Mar 2017 00:00 1

….

4917 31 Mar 2017 07:00 1

4918 31 Mar 2017 08:00 1

I run the awrrpt with parms of: start snap of 4917 end snap of 4918

I see my query on the report, ALL IS GOOD.

I query dba_hist_sqlstat for my query with sql_id=’974d5nygwpnk5′ and it says the snap_id was 4918. All is good.

SNAP_ID SQL_ID

———- ———————–

4918 974d5nygwpnk5

So if in the dba_hist_sqlstat table, the query was run in snap_id 4918, you would think in the awr heading when selecting the snaps_ids to run the heading that says “Snap Started” would say “Snap Ended”.

So I am confirming as I document this that in dealing with the awrrpt script startup and the actual awr report output, all is fine. Sql that was run between 7:00 am and 8:00 shows up fine with on a report that covers 7:00 am to 8:00 am.

When writing queries and reading the results joining the snap_id in dba_hist_sqlstat and the snap_id in dba_hist_snapshot all is well. The column names “begin_interval_time” and “end_interval_time” in dba_hist_snapshot match the real world time.

It is when getting the data out of of dba_hist_sqlstat with my own query, I can’t look at the startup heading of the awrrpt script and match sql_id’s to figure when the query ran. (Because 4918 ended at 08:00 it did not start at 08:00 as the startup heading of the awrrpt script says above). Or better stated, at 11:15 am today I can’t take a query I know I ran between 7:00 am and 8:00 am and start an awrrpt and properly select the right snap_id’s based on the bad heading.

Hope that explanation is clear!

And I hope I am seeing it all correctly and can get some agreement.

If not, please show me where I am wrong. I struggle with this every time I work with my own reports written from dba_hist_sqlstat.

Thanks,

Lynn

Ok I think I get you. What do you mean by “the startup heading of the awrrpt script”? Do you mean the filename?

I will try this again. I can’t get the formatting of what I paste to come through correctly. I am going to paste the screen output with periods in the whitespace.

At this moment if start an awrrpt query and enter 1 for num_days, I get the screens that ask me for the snap range I want the report to run for.

……………………………………………………………this is the screen

……………………………………………………………column I am trying

……………………………………………………………to discuss

……………………………………………………………………………………snap

InstanceDB…….Name……………..Snap Id….Snap..Started…..Level

..

EPM91CLN……EPM91CLN………….4910 31 Mar 2017 00:00……1

………………………………………………..4911 31 Mar 2017 01:00……1

…

…

…………………………………………………4918 31 Mar 2017 08:00…….1

I believe the “Snap Started” column should read “Snap Ended”.

I ran a query this morning at 7:55 that shows up in dba_hist_sqlstat as snap_id 4918.

The awrrpt startup screen says snap 4918 started at 08:00.

Is it clear now?

Thanks,

Lynn

Ok I think I get you. When you enter the value for num_days the AWRRPT script then displays a report with the column “Snap Started”. You are saying that this column heading is incorrect, it’s actually reporting the time that the snap ended.

I guess maybe most people don’t notice because they just use this view to pick two snapshot numbers which are the start and end point of the AWR Report generated by the call to AWRRPT.

You say latency on flash is sub millisecond. Here is a test for you. Generate a 100% write workload to flash and use a large I/O size such as 256K. You will see that sub millisecond latencies from flash are much more conditional that most think.

The latency of a write operation to a NAND flash die is sub-millisecond (for example, a Toshiba TH58TVG6S2FBA89 SLC NAND device can program a page in around 400 microseconds and read it in around 45 microseconds). If you want to use large I/O sizes from the host then these will of course be broken up into a large number of smaller I/Os at the physical flash layer. Each individual smaller I/O will be satisfied in a small amount of time, but of course a small amount of time multiplied by a large number will still be a longer amount of time.

So I don’t really know what your point is? I guess if you read or write enough data then it will take longer than any amount of time you care to mention. I dare say that with a theoretically massive system you could make the latency from a host go up to seconds, minutes, hours… who knows. But that would be the same with any storage medium.

Don’t get me wrong, I welcome any comment and/or constructive criticism. But so far I still don’t think it’s unreasonable to describe the latency of flash as sub-millisecond.

I simply thought that the assertion of latencies no longer being an issue with flash was an incomplete position to an otherwise well written piece. Let us assume for a moment that we are talking about high grade eMLC and SLC devices to rule out shoddy inconsistencies at the device level with cheaper alternatives. Regardless of the specs for the device, it is the latency that the host will see that will be the important measurement. The effective latency for a larger I/O writes are so much different than they are for reads. Even in a balanced read/write workload, if it is transactional/sequential dialog of I/O, then that really fast sub millisecond read is waiting for the orders of magnitude slower write that it follows. The negative impact won’t be as noticeable for workloads with very fixed, smaller I/O sizes, but shows up with workloads that varying I/O sizes throughout a single workload – and of which, is outside anyone’s control of adjusting (I/O size).

I welcome flash as much or more than the next guy. It’s changing everything. But I end up having to do damage control with many application owners and Developers who want to assume there are no considerations like these. Keep up the great posts though! As a fellow blogger, I know how much of a time investment it can be, and I tip my cap to everyone who takes the time to put out great content.

Hi Flashdba (aka Chris B),

I have started reading your blogs since last few weeks or so, when Google stumbled me upon your site. To say that, your blogs are brilliant is an understatement! The topics, vocabulary and lucidness with which you attempt to elucidate the subject makes me wonder how much effort and time is being spent at back-end.

Tremendous stuff. And please keep on blogging (I know, I am sounding selfish)….

By the way, I did happen to very briefly work/interact with you in past whilst working for one major Telcos. I am sure you can figure it out from my IP address. 🙂

Regards,

Vijay

Hi Vijay – and thanks. Say hello to my old friends at your “major telco” 🙂

Could any one say me where random workload is needed?like sequential workload is needed for Video images.

Examples of random workloads include transactional databases, virtual desktop infrastructure (VDI) and – increasingly – virtual server infrastructure (VSI). For the latter it’s a simple fact that by overlaying many independent workloads on top of the same physical hardware (through the use of VMware, Hyper-V, Xen etc) the underlying workload always tends towards a more random I/O pattern.

It’s therefore only a small leap to see that I/O patterns are becoming increasingly random in today’s data centres due to the continued rise of cloud computing (public or private) and the consolidation of workloads enabled by virtualisation and flash storage.

amazing article, it help me a lot.

Amazing! Probably one of the best article in the subject.

Very Good information. Could you please clarify my questions.

Question1:

I need to do the following operation. Let’s assume all 8 physical blocks are located inside one extent.

1) Read a contiguous 3 physical blocks

2) skip 1 block

3) Read the contiguous 2 physical blocks.

4) skip 1 block

5) Read 1 last block

what is better among the following 2?

1) Three different I/O request to read 3,2 and 1 physical blocks

2) One I/O to read the entire 8 physical blocks and use only the required one

Question2:

1) Read 1 physical block

2) skip 6 physical blocks

3) Read 1 last block

what is better among the following 2?

1) Two different I/O request for step (1) and (3)

2) One I/O to read the entire 8 physical blocks and use only the first and last blocks.

Your question is too generic to answer, because it depends on too many unknowns (e.g. the storage platform, the type of read, certain init.ora parameter settings, etc).

In principle, you should consider that any physical I/O operation which takes place on a disk storage system needs to first pay a “latency tax”. This tax, which is payable in milliseconds, is the price of the mechanical latency required for the disk actuator head to move to the correct track and the platter to rotate to the correct sector. However, the tax is only payable once per I/O operation, regardless of how many blocks are read/written (up to a limit which is dependant on your system, but which can still be considered to be much larger than the size of a single database block).

Thus it is more “tax efficient” to read or write as many blocks as possible whenever the tax is paid, unless of course those additional blocks are utterly useless and only have the effect of polluting your various cache memory structures with unwanted and unneeded data. The exact amount of tax that you pay is completely reliant on the specifics of your system and will also be greatly affected by such considerations as the amount of DRAM cache in the disk array and the amount of other activity taking place.

I really appreciate your response!