So this is it – the last article in my mini-series on understanding flash. This is the bit where I draw it all together in a neat conclusion that makes you think, “Yes! That was worth reading”. No pressure, eh?

So let me start with the conclusion first: as a storage medium, NAND flash is a royal pain in the ass.

Chaos

Why? Well, let’s look back at what we’ve learned in the previous 9 articles:

- Data stored in flash pages cannot be overwritten without an erase operation taking place first

- Erase operations affect entire blocks of flash pages instead of just the page you want to erase

- Erase operations are an order of magnitude slower than read and program (write) operations

- Flash media wears out and is limited by the number of program and erase operations (P/E Cycles) possible

- The performance of a flash package is unbalanced: for example, some writes are faster than others

- Complex mapping tables are required to write updated data to free pages and track stale pages prior to erasure

- The process of garbage collection (the recycling of stale pages) requires the over-provisioning of flash

- The phenomenon of write amplification causes many more writes to take place on the backend than those issued from any hosts, negatively affecting performance and endurance

- The fabrication plants (or “flash foundries”) built to manufacture NAND flash are incredibly expensive

In short, NAND flash is a tricky medium to use for enterprise storage. A whole lot of work is required to make a collection of flash chips appear to be a unified, resilient block of storage with fast, predictable performance.

And I haven’t even told you everything. Consider, for example, the phenomenon of read disturb. When you read a page within a NAND flash chip, you create a very minor electric field in the locality of the cells it contains. That field causes a small disturbance to any neighbouring cells – usually not enough to cause concern, but significant nevertheless. So what happens when you repeatedly read that page? Eventually, after X number of reads, the data stored within the nearby cells becomes questionable.

The solution, therefore, is to keep track of the number of times each page is disturbed in this manner and then set a threshold (let’s say 50 disturbances) beyond which you will copy the data out to a clean page and then mark the old page as stale. Easy.

But just think about what that means for a moment. Remember when I said that write amplification was mainly impacted by write workloads? This new piece of information means that even on a 100% read workload there will be additional back-end writes taking place on the array. Just another example of why flash is a tricky medium to manage.
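To make that concrete, here is a minimal sketch of the bookkeeping in Python. Everything in it is an assumption made for illustration: the `FlashSketch` class, its method names, the idea that only the two adjacent physical pages are disturbed, and the threshold of 50 (the made-up figure from above, not a real device limit). Real controllers do this in firmware and the details vary by vendor, so treat it as a toy model rather than how any particular product works.

```python
class FlashSketch:
    """Toy model of read-disturb tracking (hypothetical, not a real FTL)."""

    def __init__(self, num_physical_pages, threshold=50):
        self.threshold = threshold                     # illustrative value only
        self.pages = [None] * num_physical_pages       # payload per physical page
        self.disturbs = [0] * num_physical_pages       # disturbances per physical page
        self.free = list(range(num_physical_pages))    # free physical pages, lowest first
        self.stale = set()                             # pages awaiting erasure
        self.l2p = {}                                  # logical page -> physical page
        self.p2l = {}                                  # physical page -> logical page
        self.host_writes = 0
        self.backend_writes = 0

    def _program(self, logical, payload):
        # Garbage collection is out of scope here, so this raises
        # IndexError once the free list is exhausted.
        physical = self.free.pop(0)
        self.pages[physical] = payload
        self.disturbs[physical] = 0
        old = self.l2p.get(logical)
        if old is not None:
            self.stale.add(old)                        # old copy is now stale
            del self.p2l[old]
        self.l2p[logical] = physical
        self.p2l[physical] = logical
        self.backend_writes += 1

    def write(self, logical, payload):
        self.host_writes += 1
        self._program(logical, payload)

    def read(self, logical):
        physical = self.l2p[logical]
        payload = self.pages[physical]
        # Assumption: a read disturbs only the two adjacent physical pages,
        # and only pages holding live data need to be tracked.
        for neighbour in (physical - 1, physical + 1):
            if neighbour in self.p2l:
                self.disturbs[neighbour] += 1
                if self.disturbs[neighbour] >= self.threshold:
                    # Copy the data out to a clean page; the old page goes stale.
                    self._program(self.p2l[neighbour], self.pages[neighbour])
        return payload


flash = FlashSketch(num_physical_pages=8)
for logical_page in range(3):
    flash.write(logical_page, f"data-{logical_page}")

# A 100% read workload from here on: hammer the middle page.
for _ in range(50):
    flash.read(1)

print(flash.host_writes)     # 3
print(flash.backend_writes)  # 5
```

The host only ever wrote three pages, yet the back end programmed five: the two extra writes are the neighbours of the hot page being relocated once they hit the threshold.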

Order

Of course, it would be remiss of me not to mention that NAND flash brings a tremendous set of benefits along with these problems. You could say they come as a package (oh come on, that was one of my better puns).

Let’s go back to basics for a moment: if you want to take a defined quantity of work and do it in a shorter amount of time, what are your choices? Put simply, there are two options: do the same work faster, or do more of it in parallel (and of course both options can be used together for extra gain).
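As a rough back-of-the-envelope illustration, the two options can be put into a few lines of Python. The `completion_time_us` helper and every figure below are invented purely for the arithmetic (they are not measurements of any disk or flash device), and the model naively assumes that work divides evenly into batches of concurrent operations.

```python
def completion_time_us(operations, latency_us, parallelism):
    """Time to finish `operations` I/Os when `parallelism` run at once.

    Hypothetical model: each batch of concurrent operations takes one
    latency period, and batches run back to back.
    """
    batches = -(-operations // parallelism)  # integer ceiling division
    return batches * latency_us


OPERATIONS = 1_000_000  # made-up workload size

baseline = completion_time_us(OPERATIONS, latency_us=5000, parallelism=10)
faster = completion_time_us(OPERATIONS, latency_us=500, parallelism=10)
wider = completion_time_us(OPERATIONS, latency_us=5000, parallelism=100)
both = completion_time_us(OPERATIONS, latency_us=500, parallelism=100)

print(baseline // 1_000_000)  # 500 seconds
print(faster // 1_000_000)    # 50 seconds
print(wider // 1_000_000)     # 50 seconds
print(both // 1_000_000)      # 5 seconds
```

Cutting the latency by ten or running ten times as many operations at once buys the same improvement in this toy model; doing both multiplies the gains.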

The basic building block of a disk array is, obviously, the hard disk drive. I’ve already explained at tedious length the performance gap between disk and flash, so we know that we can access data faster using flash. Technologies like RAID allow multiple disks to be used in parallel to achieve performance (and resilience) gains, but given a limited amount of physical space (such as a data centre rack), how many hard drives can you actually squeeze into one system?

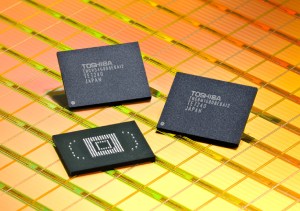

Now compare this to the number of NAND flash packages you could fit into the same space, all of which you could potentially utilise in parallel and at a lower latency. Doing the same work faster – and doing more of it in parallel.

And there’s more. Those clunky great big cabinets of disk use up horrendous amounts of power just to spin those little rotating platters – with much of the energy converted to heat and noise: waste. The heat results in a requirement for additional cooling, which uses even more power: more waste. And it all takes up so much physical space that data centres become overrun with storage.

In contrast, all flash arrays (AFAs) require less power, less cooling and take up less physical space: it’s not uncommon for customers to pay for the move to flash simply by avoiding the need to build a new data centre or extend an existing one. In summary, the net cost of using flash is now less than that of using disk.

When I first started writing this blog back in 2012 there was still a debate over whether flash would replace disk for enterprise storage. That debate was over some time ago: flash has already won.

Architecture Matters

So this post marks the end of my journey into explaining and understanding NAND flash. Yet there is a whole new area which needs exploring: the architecture of all flash arrays.

Enterprise storage needs to be safe, reliable, predictable and fast. Yet at a package level, NAND flash is a tricky little beast that has to be constantly watched to make sure it behaves itself. There’s a dichotomy here: how do we use the latter to deliver the former? How do we take a component designed for consumer electronics and use it to build an enterprise-class AFA? In short, how do we derive order from chaos?

The answer is in the architecture. At the time of writing this blog there are a number of AFA vendors on the market, each with a different approach to taming the beast. Apart from my own employer, Violin Memory, there are EMC, IBM, HDS, Pure Storage, SolidFire, Kaminario and a whole load more.

And that’s why this industry is so interesting to me. Everybody is trying to do this differently, although you can broadly categorise the solutions into three distinct ranges: hybrid arrays, SSD-based arrays and ground-up arrays. Everybody thinks their way is right – and nobody can afford to be wrong. The market for flash-based primary storage is huge and growing all the time: the winners get unparalleled success, while the losers … are simply left in disarray*

*I won’t lie – I’m so proud of that pun I’m going to award myself a couple of weeks off.

This article is part of the Storage for DBAs series. If you found this series useful, you might also be interested in Databases in the Age of AI, which explores how AI agents are changing the assumptions at the heart of enterprise data systems.
