Before I draw this series on Understanding Flash to a close, I wanted to briefly touch on the subject of manufacturing. Don’t worry, I’ve taken heed of the kind feedback I had after my floating gate transistor blog post (“Please stop talking about electrons!”) and will instead focus on the commercial aspects, because ultimately they affect the price you will be paying for your flash-based storage. If you’re not familiar with the way things are you might be surprised…

The Supplier Landscape

Semiconductor chips, such as the NAND flash packages found in your storage, phones and tablets, are manufactured in fabrication plants known as “fabs” or flash “foundries”. To say that fabs are not cheap to build would be somewhat of an understatement – they are mind-bogglingly, ludicrously expensive.

In 2014 the global semiconductor industry posted record sales totalling $335.8 billion. That’s the entire semiconductor industry, not just the subset that produces NAND flash… and I think you’ll agree that’s quite a lot of money. But to put that figure into perspective, when Samsung decided to build an entirely new fab in late 2014, it had to commit $15 billion for a project that won’t be completed “until mid-2017”.

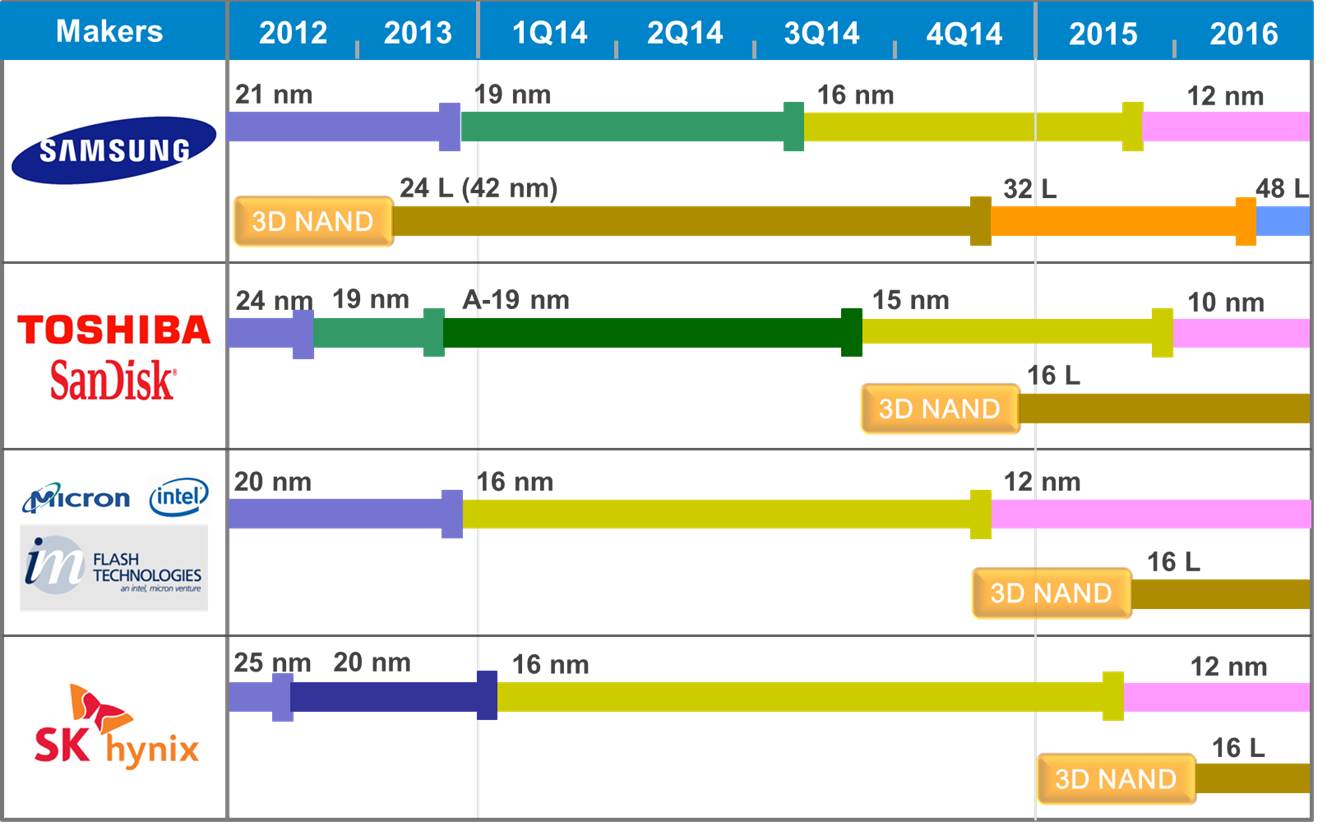

Clearly a fab is an eye-watering investment – and it is mainly for this reason that there are (at the time of writing) only six key companies worldwide who run flash foundries. What’s more, because of that staggering cost, four of those six are working together in pairs to share the investment burden. The four teams are:

- Toshiba and SanDisk

- Samsung

- Intel and Micron

- SK Hynix

With only four sets of fabs in operation, the market is hardly awash with an abundance of NAND flash – which of course suits the fab operators just fine as it keeps the price of flash higher. Oversupply would be unsustainable for an industry with such high costs.

Process Shrinking

Talking of costs, the fab operators are never allowed to stand still because of the relentless drive to make things smaller – Moore’s Law doesn’t just apply to processors; NAND flash is a semiconductor too. The act of taking the design of a microchip and reducing it in size is known as a process shrink – and it brings all sorts of benefits. Remember that a NAND flash cell is basically constructed from floating gate transistors? Well if those transistors are reduced in size:

- Less current is needed per transistor, reducing the overall power consumption of the flash

- This in turn reduces the heat output of an identical design with the same clock frequency (or alternatively the clock frequency can be increased)

- Most importantly, more flash dies can be produced from the same silicon wafers (the raw material), resulting in either reduced costs or increased density
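To put a rough number on that last point, here is a back-of-the-envelope sketch in Python. It assumes idealised scaling (die area shrinks with the square of the feature size) and uses a common first-order estimate for gross dies per wafer. The 300mm wafer and the 100mm² starting die are illustrative figures of my own, not vendor numbers, and real shrinks fall short of this because of periphery circuitry, yield and so on.

```python
import math


def ideal_area_scale(old_nm: float, new_nm: float) -> float:
    """Die-area ratio after a shrink, ASSUMING area scales with feature size squared."""
    return (new_nm / old_nm) ** 2


def gross_dies_per_wafer(wafer_diameter_mm: float, die_area_mm2: float) -> int:
    """First-order estimate: wafer area / die area, minus a term for partial dies at the edge."""
    radius = wafer_diameter_mm / 2
    whole_dies = math.pi * radius ** 2 / die_area_mm2
    edge_loss = math.pi * wafer_diameter_mm / math.sqrt(2 * die_area_mm2)
    return int(whole_dies - edge_loss)


# Hypothetical inputs, chosen only for illustration.
WAFER_MM = 300.0
OLD_DIE_MM2 = 100.0

scale = ideal_area_scale(25, 19)       # e.g. a 25nm design shrunk to 19nm
new_die_mm2 = OLD_DIE_MM2 * scale

before = gross_dies_per_wafer(WAFER_MM, OLD_DIE_MM2)
after = gross_dies_per_wafer(WAFER_MM, new_die_mm2)

print(f"area scale factor: {scale:.4f}")
print(f"dies per wafer before shrink: {before}")
print(f"dies per wafer after shrink:  {after}")
```

Under those assumptions the same wafer yields well over one and a half times as many dies, which is why the fab operators keep chasing the next shrink.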

It may sound like smaller is better, but as always there is a flip side. Retooling a flash foundry to move to a smaller process geometry takes time and money – and the return on investment for the fab is reduced with each shrink. But we’ll come back to that in a minute.

Process Geometries

The different sizes in which flash is fabricated are known as process geometries. Traditionally, they are defined by measuring the distance (in nanometers) between the source and drain of each transistor (although these days this practice has become much less well-defined). In a strange quirk of algebra, the two-digit numbers are written as <digit><letter> so that, for example, the first geometry to be in the range 29-20nm (e.g. 25nm) is called 2X, then a second in that range (e.g. 21nm) is called 2Y. Once into the teens (e.g. 19nm) we go back to 1X. [Although interestingly, Toshiba and SanDisk have a second-generation NAND which they call 1Y to distinguish it from first-generation 1X, even though both are 19nm.]
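If it helps, the naming rule can be sketched in a few lines of Python. This is only my reading of the convention described above, not an industry standard: the function name is made up, and using "Z" for a third generation in the same range is an assumption, since only X and Y are named here.

```python
from collections import defaultdict


def label_generations(nodes_nm):
    """Label process nodes, given in order of introduction, as <tens digit><letter>."""
    letters = "XYZ"  # ASSUMPTION: "Z" for a third generation; the text only names X and Y
    seen = defaultdict(int)  # how many generations we have already seen per tens digit
    labels = []
    for nm in nodes_nm:
        tens = nm // 10
        index = seen[tens]
        if index >= len(letters):
            raise ValueError(f"no letter defined for generation {index + 1} in the {tens}0s")
        labels.append(f"{tens}{letters[index]}")
        seen[tens] += 1
    return labels


print(label_generations([25, 21, 19, 19]))  # ['2X', '2Y', '1X', '1Y']
```

Note that the letter tracks the generation rather than the size, which is how two 19nm processes can end up as 1X and 1Y.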

Here’s the current technology roadmap for NAND flash courtesy of my friends at TechInsights:

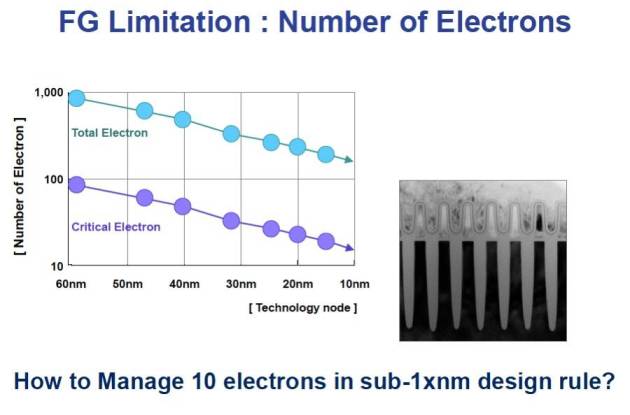

So what’s the problem with flash getting smaller? Well, hopefully for the very last time, cast your mind back to the concept of floating gate transistors. On these tiny devices the floating gates effectively capture and store electrons that are passing from one side of the transistor to the other, retaining charge – and that charge determines the cell’s value of 0 or 1. But at the tiny geometries that flash is approaching now, the number of electrons involved is desperately small, leaving very little margin for error. Electrons, after all, are notoriously good at hide and seek.

This means that flash cells are less reliable, that even more error correction codes are required… and that ultimately, we are reaching what is known as the scaling limit. It looks like the end is in sight for flash as we know it.

3D NAND Flash

Except it isn’t. If you look again at the Technology Roadmap picture you’ll see entries appearing alongside each foundry operator for 3D NAND. This is a significant new fabrication process that looks like it will be the direction taken by the flash industry for the foreseeable future. I would love to attempt a detailed explanation of 3D NAND design here, but a) it’s beyond me, b) I promised to listen to the feedback after the last time I went sub-atomic, and c) Jim Handy (AKA The Memory Guy) already has a fantastic set of articles all about it.

The point is, 3D NAND maps out a future for NAND flash beyond the scaling limits of 2D planar NAND – and the big fab operators have already invested. That puts 3D NAND in pole position as we increasingly look to non-volatile memory to store our data.

But there are other technologies trying to compete…

The Next Big Thing

The holy grail of memory technology is universal memory. This is a hypothetical technology which brings all the benefits, but none of the drawbacks, of the multitude of memory technologies in use today: SRAM, DRAM, NAND Flash and so on. SRAM (often used on-chip as a CPU cache) is fast but expensive, DRAM is cheaper but must be constantly refreshed (using considerably more power) while NAND flash is comparatively slower and wears out with use. If only there were some new technology that met all our requirements…

Well, first of all, it’s worth mentioning that if universal memory did come about it would have far-reaching consequences on the way we design and build computers… but all the same, there are various technologies in R&D right now which make claims to be the NVM solution of the future: PCM, MRAM, RRAM, FeRAM, Memristor and so on. But these – and any other – technologies all face the same challenge: that massive initial investment required to go from prototype to production. In other words, the cost and time associated with building a fabrication plant.

Say you converted your shed into a clean room and invented a wonderful new memory technology called Shed-RAM (SHRAM). SHRAM is fast, cheap, dense and only uses a tiny amount of power. You’ve still got to convince somebody to splurge billions of dollars on a foundry before it can be productised. And, as I’m sure you’ll agree, that’s a pretty big bet on an untested technology – especially if it takes years to build. What happens if, one year in, your neighbour (who recently converted his garage into a clean room) invents Garage-RAM (GaRAM) which makes your SHRAM worthless? Nobody is going to cope well with the loss of billions of dollars on a bad bet.

Conclusion

The investment hurdle required to turn a new NVM technology into a product is both a challenge and a stabiliser to the NVM industry. In theory it could stifle potential new technologies, but at the same time (at least for those of us in enterprise storage) it means there is plenty of warning when the tide turns: you are likely to see any successful new tech in your phone before it appears in your data centre.

Now, who can lend me $15 billion to build a new fab? I can’t afford anything more than 0% interest, but I can offer a lifetime’s supply of USB sticks to sweeten the deal…


Pah! A lifetime of free USB disks can be obtained simply by walking about any exhibitors’ hall at a conference. 🙂

Haha, you make a good point Connor…