Almost exactly a year ago I published a post covering my first impressions of the ASM Filter Driver (ASMFD) released in Oracle 12.1.0.2, followed swiftly by a second post showing that it didn’t work with 4k native devices.

When I wrote that first post I was about to start my summer holidays, so I’m afraid to admit that I was a little sloppy and made some false assumptions toward the end – assumptions which were quickly overturned by eagle-eyed readers in the comments section. So I need to revisit that at some point in this post.

But first, some background.

Some Background

If you don’t know what ASMFD is, let me just quote from the 12.1 documentation:

Oracle ASM Filter Driver (Oracle ASMFD) is a kernel module that resides in the I/O path of the Oracle ASM disks. Oracle ASM uses the filter driver to validate write I/O requests to Oracle ASM disks.

The Oracle ASMFD simplifies the configuration and management of disk devices by eliminating the need to rebind disk devices used with Oracle ASM each time the system is restarted.

The Oracle ASM Filter Driver rejects any I/O requests that are invalid. This action eliminates accidental overwrites of Oracle ASM disks that would cause corruption in the disks and files within the disk group. For example, the Oracle ASM Filter Driver filters out all non-Oracle I/Os which could cause accidental overwrites.

This is interesting, because ASMFD is considered a replacement for Oracle ASMLib, yet the documentation for ASMFD doesn’t make all of the same claims that Oracle makes for ASMLib. Both ASMFD and ASMLib claim to simplify the configuration and management of disk devices, but ASMLib’s documentation also claims that it “greatly reduces kernel resource usage”. Doesn’t ASMFD have this effect too? What is definitely a new feature for ASMFD is the ability to reject invalid (i.e. non-Oracle) I/O operations to ASMFD devices – and that’s what I got wrong last time.

However, before we can revisit that, I need to install ASMFD on a brand new system.

Installing ASMFD

Last time I tried this I made the mistake of installing 12.1.0.2.0 with no patch set updates. Thanks to a reader called terry, I now know that the PSU is a very good idea, so this time I’m using 12.1.0.2.3. First let’s do some preparation.

Preparing To Install

I’m using an Oracle Linux 6 Update 5 system running the Oracle Unbreakable Enterprise Kernel v3:

[root@server4 ~]# cat /etc/oracle-release

Oracle Linux Server release 6.5

[root@server4 ~]# uname -r

3.8.13-26.2.3.el6uek.x86_64

As usual I have taken all of the necessary pre-installation steps to make the Oracle Universal Installer happy. I have disabled selinux and iptables, plus I’ve configured device mapper multipathing. I have two sets of 8 LUNs from my Violin storage: 8 using 512e emulation mode (512 byte logical block size but 4k physical block size) and 8 using 4kN native mode (4k logical and physical block size). If you have any doubts about what that means, read here.

[root@server4 ~]# ls -l /dev/mapper

total 0

crw-rw---- 1 root root 10, 236 Jul 20 16:52 control

lrwxrwxrwx 1 root root 7 Jul 20 16:53 mpatha -> ../dm-0

lrwxrwxrwx 1 root root 7 Jul 20 16:53 mpathap1 -> ../dm-1

lrwxrwxrwx 1 root root 7 Jul 20 16:53 mpathap2 -> ../dm-2

lrwxrwxrwx 1 root root 7 Jul 20 16:53 mpathap3 -> ../dm-3

lrwxrwxrwx 1 root root 7 Jul 20 16:53 v4kdata1 -> ../dm-6

lrwxrwxrwx 1 root root 7 Jul 20 16:53 v4kdata2 -> ../dm-7

lrwxrwxrwx 1 root root 7 Jul 20 16:53 v4kdata3 -> ../dm-8

lrwxrwxrwx 1 root root 7 Jul 20 16:53 v4kdata4 -> ../dm-9

lrwxrwxrwx 1 root root 8 Jul 20 16:53 v4kdata5 -> ../dm-10

lrwxrwxrwx 1 root root 8 Jul 20 16:53 v4kdata6 -> ../dm-11

lrwxrwxrwx 1 root root 8 Jul 20 16:53 v4kdata7 -> ../dm-12

lrwxrwxrwx 1 root root 8 Jul 20 16:53 v4kdata8 -> ../dm-13

lrwxrwxrwx 1 root root 8 Jul 20 17:00 v512data1 -> ../dm-14

lrwxrwxrwx 1 root root 8 Jul 20 17:00 v512data2 -> ../dm-15

lrwxrwxrwx 1 root root 8 Jul 20 17:00 v512data3 -> ../dm-16

lrwxrwxrwx 1 root root 8 Jul 20 17:00 v512data4 -> ../dm-17

lrwxrwxrwx 1 root root 8 Jul 20 17:00 v512data5 -> ../dm-18

lrwxrwxrwx 1 root root 8 Jul 20 17:00 v512data6 -> ../dm-19

lrwxrwxrwx 1 root root 8 Jul 20 17:00 v512data7 -> ../dm-20

lrwxrwxrwx 1 root root 8 Jul 20 17:00 v512data8 -> ../dm-21

lrwxrwxrwx 1 root root 8 Jul 20 16:53 vg_halfserver4-lv_home -> ../dm-22

lrwxrwxrwx 1 root root 7 Jul 20 16:53 vg_halfserver4-lv_root -> ../dm-4

lrwxrwxrwx 1 root root 7 Jul 20 16:53 vg_halfserver4-lv_swap -> ../dm-5

The 512e devices are the ones named v512data1 to v512data8 and the 4k devices are the ones named v4kdata1 to v4kdata8. The other devices here can be ignored as they are related to the default filesystem layout of the operating system.
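If you want to confirm which mode each LUN is really presenting, the kernel exposes both block sizes under /sys/block/<device>/queue. The following is only a minimal sketch: the function names classify_block_mode and report_block_modes are my own inventions for illustration, and it assumes the /dev/mapper aliases are symbolic links to dm-* devices, as they are on this system.

```shell
#!/bin/bash
# Classify a device from its logical and physical block sizes (in bytes).
classify_block_mode() {
    local logical=$1 physical=$2
    if [ "$logical" -eq 512 ] && [ "$physical" -eq 512 ]; then
        echo "512n"
    elif [ "$logical" -eq 512 ] && [ "$physical" -eq 4096 ]; then
        echo "512e"
    elif [ "$logical" -eq 4096 ] && [ "$physical" -eq 4096 ]; then
        echo "4kN"
    else
        echo "unknown"
    fi
}

# Report the mode of every multipath alias whose name starts with a prefix.
# The sizes are read from /sys/block/<dm-N>/queue/.
report_block_modes() {
    local prefix=$1 alias dm q
    for alias in /dev/mapper/"$prefix"*; do
        [ -e "$alias" ] || continue
        dm=$(basename "$(readlink -f "$alias")")   # e.g. dm-14
        q=/sys/block/$dm/queue
        printf '%s %s\n' "$(basename "$alias")" \
            "$(classify_block_mode "$(cat "$q/logical_block_size")" "$(cat "$q/physical_block_size")")"
    done
}
```

Running report_block_modes v512data and report_block_modes v4kdata as root should, if the storage is presented as described, report 512e for the first set and 4kN for the second.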

Installing Oracle 12.1.0.2.3 Grid Infrastructure (software only)

This is where the first challenge comes. When you perform a standard install of Oracle 12c Grid Infrastructure you are asked for storage devices on which you can locate items such as the ASM SPFILE, OCR and voting disks. In the old days of using ASMLib you would have prepared these in advance, because ASMLib is a separate kernel module located outside of the Oracle GI home. But ASMFD is part of the Oracle Home and so doesn’t exist prior to installation. Thus we have a chicken and egg situation.

Even worse, I know from bitter experience that I need to install some patches prior to labelling my disks, but I can’t install patches without installing the Oracle home either.

So the only thing for it is to perform a Software Only installation from the Oracle Universal Installer, then apply the PSU, then create an ASM instance and finally label the LUNs with ASMFD. It’s all very long winded. It wouldn’t be a problem if I was migrating from an existing ASMLib setup, but this is a clean install. Such is the price of progress.

To save this post from becoming longer and more unreadable than a 12c AWR report, I’ve captured the entire installation and configuration of 12.1.0.2.3 GI and ASM on a separate installation cookbook page, here:

Installing 12.1.0.2.3 Grid Infrastructure with Oracle Linux 6 Update 5

It’s simpler that way. If you don’t want to go and read it, just take it from me that we now have a working ASM instance which currently has no devices under its control. The PSU has been applied so we are ready to start labelling.

Using ASM Filter Driver to Label Devices

The next step is to start labelling my LUNs with ASMFD. I’m using what the documentation describes as an “Oracle Grid Infrastructure Standalone (Oracle Restart) Environment”, so I’m following this set of steps in the documentation.

Step one tells me to run a dsget command and then a dsset command to add a diskstring of ‘AFD:*’. Ok:

[oracle@server4 ~]$ asmcmd dsget

parameter:

profile:++no-value-at-resource-creation--never-updated-through-ASM++

[oracle@server4 ~]$ asmcmd dsset 'AFD:*'

[oracle@server4 ~]$ asmcmd dsget

parameter:AFD:*

profile:AFD:*

Next I need to stop CRS (I’m using a standalone config so actually it’s HAS):

[root@server4 ~]# crsctl stop has

CRS-2791: Starting shutdown of Oracle High Availability Services-managed resources on 'server4'

CRS-2673: Attempting to stop 'ora.LISTENER.lsnr' on 'server4'

CRS-2673: Attempting to stop 'ora.asm' on 'server4'

CRS-2673: Attempting to stop 'ora.evmd' on 'server4'

CRS-2677: Stop of 'ora.LISTENER.lsnr' on 'server4' succeeded

CRS-2677: Stop of 'ora.evmd' on 'server4' succeeded

CRS-2677: Stop of 'ora.asm' on 'server4' succeeded

CRS-2673: Attempting to stop 'ora.cssd' on 'server4'

CRS-2677: Stop of 'ora.cssd' on 'server4' succeeded

CRS-2793: Shutdown of Oracle High Availability Services-managed resources on 'server4' has completed

CRS-4133: Oracle High Availability Services has been stopped.

And then I need to run the afd_configure command (all as the root user). Before and after doing so I will check for any loaded kernel modules with oracle in the name, to see what changes:

[root@server4 ~]# lsmod | grep oracle

oracleacfs 3308260 0

oracleadvm 508030 0

oracleoks 506741 2 oracleacfs,oracleadvm

[root@server4 ~]# asmcmd afd_configure

Connected to an idle instance.

AFD-627: AFD distribution files found.

AFD-636: Installing requested AFD software.

AFD-637: Loading installed AFD drivers.

AFD-9321: Creating udev for AFD.

AFD-9323: Creating module dependencies - this may take some time.

AFD-9154: Loading 'oracleafd.ko' driver.

AFD-649: Verifying AFD devices.

AFD-9156: Detecting control device '/dev/oracleafd/admin'.

AFD-638: AFD installation correctness verified.

Modifying resource dependencies - this may take some time.

[root@server4 ~]# lsmod | grep oracle

oracleafd 211540 0

oracleacfs 3308260 0

oracleadvm 508030 0

oracleoks 506741 2 oracleacfs,oracleadvm

[root@server4 ~]# asmcmd afd_state

Connected to an idle instance.

ASMCMD-9526: The AFD state is 'LOADED' and filtering is 'ENABLED' on host 'server4'

Notice the new kernel module called oracleafd. Also, AFD is showing that “filtering is enabled” – I guess this relates to the protection against invalid writes.
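If you are scripting this sort of setup, it can be handy to check the state programmatically before moving on. Here is a small sketch that parses the message format shown above. The function names are hypothetical, and I am assuming the wording of the ASMCMD-9526 message stays the same across patch levels, which I have not checked.

```shell
#!/bin/bash
# Extract "<state> <filtering>" from afd_state output supplied on stdin.
afd_state_fields() {
    sed -n "s/.*AFD state is '\([^']*\)' and filtering is '\([^']*\)'.*/\1 \2/p"
}

# Succeed only if the driver is loaded and filtering is enabled.
afd_state_ok() {
    [ "$(afd_state_fields)" = "LOADED ENABLED" ]
}
```

A typical use would be something like: asmcmd afd_state | afd_state_ok || echo "AFD is not ready" >&2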

Time to start up HAS or CRS again:

[root@server4 ~]# crsctl start has

CRS-4123: Oracle High Availability Services has been started.

Ok, let’s start labelling those devices.

Labelling (Incorrectly)

Now remember that I am testing with two sets of devices here: 512e and 4k. The 512e devices are emulating a 512 byte blocksize, so they should result in ASM creating diskgroups with a sector size of 512 bytes – thus avoiding all the tedious bugs from which Oracle suffers when using diskgroups with a 4096 byte sector size.

So let’s just test a 512e LUN to see what happens when I label it and present it to ASM. First, the label is created using the afd_label command:

[oracle@server4 ~]$ ls -l /dev/mapper/v512data1

lrwxrwxrwx 1 root root 8 Jul 24 10:30 /dev/mapper/v512data1 -> ../dm-14

[oracle@server4 ~]$ ls -l /dev/dm-14

brw-rw---- 1 oracle dba 252, 14 Jul 24 10:30 /dev/dm-14

[oracle@server4 ~]$ asmcmd afd_label v512data1 /dev/mapper/v512data1

[oracle@server4 ~]$ asmcmd afd_lsdsk

--------------------------------------------------------------------------------

Label Filtering Path

================================================================================

V512DATA1 ENABLED /dev/sdpz

Well, it worked.. sort of. The path we can see in the lsdsk output does not show the /dev/mapper/v512data1 multipath device I specified… instead it’s one of the non-multipath /dev/sd* devices. Why?
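It doesn’t explain why AFD picked that path, but you can at least confirm that a device such as /dev/sdpz is one of the paths underneath the multipath device, because sysfs lists the underlying devices of each dm-* device in a slaves directory. A minimal sketch follows. The function name dm_legs is made up for illustration, and the optional second argument exists only so the function can be pointed at a fake sysfs tree for testing.

```shell
#!/bin/bash
# List the underlying path devices of a device-mapper device.
# $1: dm device name, e.g. dm-14
# $2: sysfs root (defaults to /sys)
dm_legs() {
    local dm=$1 sysroot=${2:-/sys}
    ls "$sysroot/block/$dm/slaves"
}
```

On this system that would be dm_legs dm-14, since /dev/mapper/v512data1 points at dm-14.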

Even worse, look what happens when I check the SECTOR_SIZE column of the v$asm_diskgroup view in ASM:

SQL> select group_number, name, sector_size, block_size, state

2 from v$asm_diskgroup;

GROUP_NUMBER NAME SECTOR_SIZE BLOCK_SIZE STATE

------------ ---------- ----------- ---------- -----------

1 V512DATA 4096 4096 MOUNTED

Even though my LUNs are presented as 512e, ASM has chosen to see them as 4096 byte. That’s not what I want. Gaah!

Labelling (Correctly)

To fix this I need to unlabel that LUN so that AFD has nothing under its control, then update the oracleafd_use_logical_block_size parameter via the special sysfs files under /sys/module/oracleafd:

[root@server4 ~]# cd /sys/module/oracleafd

[root@server4 oracleafd]# ls -l

total 0

-r--r--r-- 1 root root 4096 Jul 20 14:43 coresize

drwxr-xr-x 2 root root 0 Jul 20 14:43 holders

-r--r--r-- 1 root root 4096 Jul 20 14:43 initsize

-r--r--r-- 1 root root 4096 Jul 20 14:43 initstate

drwxr-xr-x 2 root root 0 Jul 20 14:43 notes

drwxr-xr-x 2 root root 0 Jul 20 14:43 parameters

-r--r--r-- 1 root root 4096 Jul 20 14:43 refcnt

drwxr-xr-x 2 root root 0 Jul 20 14:43 sections

-r--r--r-- 1 root root 4096 Jul 20 14:43 srcversion

-r--r--r-- 1 root root 4096 Jul 20 14:43 taint

--w------- 1 root root 4096 Jul 20 14:43 uevent

[root@server4 oracleafd]# cd parameters

[root@server4 parameters]# ls -l

total 0

-rw-r--r-- 1 root root 4096 Jul 20 14:43 oracleafd_use_logical_block_size

[root@server4 parameters]# cat oracleafd_use_logical_block_size

0

[root@server4 parameters]# echo 1 > oracleafd_use_logical_block_size

[root@server4 parameters]# cat oracleafd_use_logical_block_size

1

After making this change, AFD will present the logical blocksize of 512 bytes to ASM rather than the physical blocksize of 4096 bytes. So let’s now label those disks again:

[root@server4 mapper]# for lun in 1 2 3 4 5 6 7 8; do

> asmcmd afd_label v512data$lun /dev/mapper/v512data$lun

> done

Connected to an idle instance.

Connected to an idle instance.

Connected to an idle instance.

Connected to an idle instance.

Connected to an idle instance.

Connected to an idle instance.

Connected to an idle instance.

Connected to an idle instance.

[root@server4 mapper]# asmcmd afd_lsdsk

Connected to an idle instance.

--------------------------------------------------------------------------------

Label Filtering Path

================================================================================

V512DATA1 ENABLED /dev/mapper/v512data1

V512DATA2 ENABLED /dev/mapper/v512data2

V512DATA3 ENABLED /dev/mapper/v512data3

V512DATA4 ENABLED /dev/mapper/v512data4

V512DATA5 ENABLED /dev/mapper/v512data5

V512DATA6 ENABLED /dev/mapper/v512data6

V512DATA7 ENABLED /dev/mapper/v512data7

V512DATA8 ENABLED /dev/mapper/v512data8

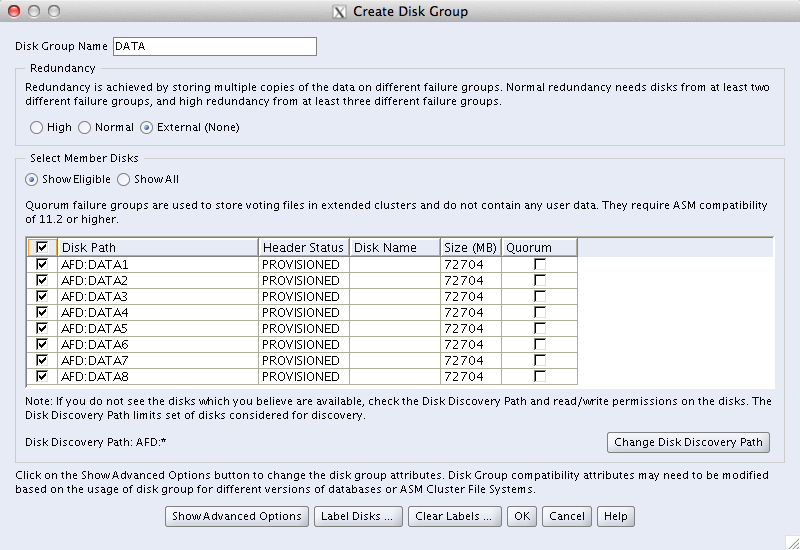

Note the correct multipath devices (“/dev/mapper/*”) are now being shown in the lsdsk command output. If I now create an ASM diskgroup on these LUNs, it will have a 512 byte sector size:

SQL> get afd.sql

1 CREATE DISKGROUP V512DATA EXTERNAL REDUNDANCY

2 DISK 'AFD:V512DATA1', 'AFD:V512DATA2',

3 'AFD:V512DATA3', 'AFD:V512DATA4',

4 'AFD:V512DATA5', 'AFD:V512DATA6',

5 'AFD:V512DATA7', 'AFD:V512DATA8'

6 ATTRIBUTE

7 'compatible.asm' = '12.1',

8* 'compatible.rdbms' = '12.1'

SQL> /

Diskgroup created.

SQL> select disk_number, mount_status, header_status, state, sector_size, path

2 from v$asm_disk;

DISK_NUMBER MOUNT_S HEADER_STATU STATE SECTOR_SIZE PATH

----------- ------- ------------ -------- ----------- --------------------

0 CACHED MEMBER NORMAL 512 AFD:V512DATA1

1 CACHED MEMBER NORMAL 512 AFD:V512DATA2

2 CACHED MEMBER NORMAL 512 AFD:V512DATA3

3 CACHED MEMBER NORMAL 512 AFD:V512DATA4

4 CACHED MEMBER NORMAL 512 AFD:V512DATA5

5 CACHED MEMBER NORMAL 512 AFD:V512DATA6

6 CACHED MEMBER NORMAL 512 AFD:V512DATA7

7 CACHED MEMBER NORMAL 512 AFD:V512DATA8

8 rows selected.

SQL> select group_number, name, sector_size, block_size, state

2 from v$asm_diskgroup;

GROUP_NUMBER NAME SECTOR_SIZE BLOCK_SIZE STATE

------------ ---------- ----------- ---------- -----------

1 V512DATA 512 4096 MOUNTED

Phew.
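One loose end: a value written into /sys/module/oracleafd/parameters is a runtime setting only, so it is lost whenever the module is reloaded. The usual Linux way to make a module parameter stick is an options line in a file under /etc/modprobe.d. The sketch below assumes that this approach works for oracleafd and that /etc/modprobe.d/oracleafd.conf is a sensible file name. Neither of those comes from my testing above, so treat both as assumptions and check them against the Oracle documentation for your patch level.

```shell
#!/bin/bash
# Write a modprobe options file so the parameter is applied at module load.
# $1: target file; the default path is an assumption, not something verified here.
persist_afd_logical_block_size() {
    local conf=${1:-/etc/modprobe.d/oracleafd.conf}
    printf 'options oracleafd oracleafd_use_logical_block_size=1\n' > "$conf"
}
```

Run it as root with no arguments to write the default file, then confirm the value in /sys/module/oracleafd/parameters after the next reload of the driver.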

Failing To Label 4kN Devices

So what about my 4k native mode devices, the ones with a 4096 byte logical block size? What happens if I try to label them?

[root@server4 ~]# asmcmd afd_label V4KDATA1 /dev/mapper/v4kdata1

Connected to an idle instance.

ASMCMD-9513: ASM disk label set operation failed.

Yeah, that didn’t work out did it? Let’s look in the trace file:

[root@server4 ~]# tail -5 /u01/app/oracle/log/diag/asmcmd/user_root/server4/alert/alert.log

24-Jul-15 12:38 ASMCMD (PID = 8695) Given command - afd_label V4KDATA1 '/dev/mapper/v4kdata1'

24-Jul-15 12:38 NOTE: Verifying AFD driver state : loaded

24-Jul-15 12:38 NOTE: afdtool -add '/dev/mapper/v4kdata1' 'V4KDATA1'

24-Jul-15 12:38 NOTE:

24-Jul-15 12:38 ASMCMD-9513: ASM disk label set operation failed.

I’ve struggled to find any more meaningful message, even when I manually run the afdtool command shown in the log – but it seems pretty likely that this is failing due to the device being 4kN. I therefore assume that AFD still isn’t 4kN ready. I do wish Oracle would make some meaningful progress on its support of 4kN devices…

I/O Filter Protection

So now let’s investigate this protection that ASMFD claims to have against non-Oracle I/Os. First of all, what do those files in /dev/oracleafd/disks actually contain?

[root@server4 ~]# cd /dev/oracleafd/disks

[root@server4 disks]# ls -l

total 32

-rw-r--r-- 1 root root 22 Jul 24 12:34 V512DATA1

-rw-r--r-- 1 root root 22 Jul 24 12:34 V512DATA2

-rw-r--r-- 1 root root 22 Jul 24 12:34 V512DATA3

-rw-r--r-- 1 root root 22 Jul 24 12:34 V512DATA4

-rw-r--r-- 1 root root 22 Jul 24 12:34 V512DATA5

-rw-r--r-- 1 root root 22 Jul 24 12:34 V512DATA6

-rw-r--r-- 1 root root 22 Jul 24 12:34 V512DATA7

-rw-r--r-- 1 root root 22 Jul 24 12:34 V512DATA8

[root@server4 disks]# cat V512DATA1

/dev/mapper/v512data1

Aha. This is what I got wrong in my original post last year, because – keen as I was to start my summer vacation – I didn’t spot that these files are simply pointers to the relevant multipath device in /dev/mapper. So let’s follow the pointers this time.

Let’s remind ourselves that the files in /dev/mapper are actually symbolic links to /dev/dm-* devices:

[root@server4 disks]# ls -l /dev/mapper/v512data1

lrwxrwxrwx 1 root root 8 Jul 24 12:34 /dev/mapper/v512data1 -> ../dm-14

[root@server4 disks]# ls -l /dev/dm-14

brw-rw---- 1 oracle dba 252, 14 Jul 24 12:34 /dev/dm-14

So it’s these /dev/dm-* devices that are at the end of the trail we just followed. If we dump the first 64 bytes of this /dev/dm-14 device, we should be able to see the AFD label:

[root@server4 disks]# od -c -N 64 /dev/dm-14

0000000 ( o u t

0000020

0000040 O R C L D I S K V 5 1 2 D A T A

0000060 1

There it is. We can also read it with kfed to see what ASM thinks of it:

[root@server4 ~]# kfed read /dev/dm-14

kfbh.endian: 0 ; 0x000: 0x00

kfbh.hard: 0 ; 0x001: 0x00

kfbh.type: 0 ; 0x002: KFBTYP_INVALID

kfbh.datfmt: 0 ; 0x003: 0x00

kfbh.block.blk: 0 ; 0x004: blk=0

kfbh.block.obj: 0 ; 0x008: file=0

kfbh.check: 1953853224 ; 0x00c: 0x74756f28

kfbh.fcn.base: 0 ; 0x010: 0x00000000

kfbh.fcn.wrap: 0 ; 0x014: 0x00000000

kfbh.spare1: 0 ; 0x018: 0x00000000

kfbh.spare2: 0 ; 0x01c: 0x00000000

000000000 00000000 00000000 00000000 74756F28 [............(out]

000000010 00000000 00000000 00000000 00000000 [................]

000000020 4C43524F 4B534944 32313556 41544144 [ORCLDISKV512DATA]

000000030 00000031 00000000 00000000 00000000 [1...............]

000000040 00000000 00000000 00000000 00000000 [................]

Repeat 251 times
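Both dumps put the ORCLDISK tag at byte offset 0x20 (32 decimal) with the label immediately after it, so pulling the label out of a device header is easy to script. This is only a sketch based on those two dumps: the function name is hypothetical, and the 24 byte read length is my assumption rather than a documented field size.

```shell
#!/bin/bash
# Print the label stored in a device header, or fail if the tag is missing.
# Offsets come from the dumps above: "ORCLDISK" at byte 32, label from byte 40.
# The 24-byte read length is an assumption; NUL padding is stripped.
read_orcldisk_label() {
    local dev=$1 tag label
    tag=$(dd if="$dev" bs=1 skip=32 count=8 2>/dev/null | tr -d '\000')
    [ "$tag" = "ORCLDISK" ] || return 1
    label=$(dd if="$dev" bs=1 skip=40 count=24 2>/dev/null | tr -d '\000')
    printf '%s\n' "$label"
}
```

For the device above the call would be read_orcldisk_label /dev/dm-14, run as a user with read access to the device.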

So what happens if I overwrite it, as the root user, with some zeros? And maybe some text too just for good luck?

[root@server4 ~]# dd if=/dev/zero of=/dev/dm-14 bs=4k count=1024

1024+0 records in

1024+0 records out

4194304 bytes (4.2 MB) copied, 0.00570833 s, 735 MB/s

[root@server4 ~]# echo CORRUPTION > /dev/dm-14

[root@server4 ~]# od -c -N 64 /dev/dm-14

0000000 C O R R U P T I O N \n

0000020

It looks like it’s changed. I also see that if I dump it from another session which opens a fresh file descriptor. Yet in the /var/log/messages file there is now a new entry:

F 4626129.736/150724115533 flush-252:14[1807] afd_mkrequest_fn: write IO on ASM managed device (major=252/minor=14) not supported i=0 start=0 seccnt=8 pstart=0 pend=41943040

Jul 24 12:55:33 server4 kernel: quiet_error: 1015 callbacks suppressed

Jul 24 12:55:33 server4 kernel: Buffer I/O error on device dm-14, logical block 0

Jul 24 12:55:33 server4 kernel: lost page write due to I/O error on dm-14
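The major and minor numbers in that first message match the 252, 14 shown earlier for /dev/dm-14, which makes it easy to work out which devices AFD has been defending. Here is a small sketch that pulls the unique major:minor pairs out of a log. The function name is my own, and it assumes the message wording is exactly as shown above.

```shell
#!/bin/bash
# Print the unique major:minor pairs named in AFD write-rejection
# messages read from stdin.
afd_rejected_devices() {
    sed -n 's|.*afd_mkrequest_fn: write IO on ASM managed device (major=\([0-9]*\)/minor=\([0-9]*\)).*|\1:\2|p' | sort -u
}
```

For example: afd_rejected_devices < /var/log/messages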

Hmm. It seems like ASMFD has intervened to stop the write, yet when I query the device I see the “new” data. Where’s the old data gone? Well, let’s use kfed again:

[root@server4 ~]# kfed read /dev/dm-14

kfbh.endian: 0 ; 0x000: 0x00

kfbh.hard: 0 ; 0x001: 0x00

kfbh.type: 0 ; 0x002: KFBTYP_INVALID

kfbh.datfmt: 0 ; 0x003: 0x00

kfbh.block.blk: 0 ; 0x004: blk=0

kfbh.block.obj: 0 ; 0x008: file=0

kfbh.check: 1953853224 ; 0x00c: 0x74756f28

kfbh.fcn.base: 0 ; 0x010: 0x00000000

kfbh.fcn.wrap: 0 ; 0x014: 0x00000000

kfbh.spare1: 0 ; 0x018: 0x00000000

kfbh.spare2: 0 ; 0x01c: 0x00000000

000000000 00000000 00000000 00000000 74756F28 [............(out]

000000010 00000000 00000000 00000000 00000000 [................]

000000020 4C43524F 4B534944 32313556 41544144 [ORCLDISKV512DATA]

000000030 00000031 00000000 00000000 00000000 [1...............]

000000040 00000000 00000000 00000000 00000000 [................]

Repeat 251 times

The label is still there! Magic.

I have to confess, I don’t really know how ASM does this. Indeed, I struggled to get the system back to a point where I could manually see the label using the od command. In the end, the only way I managed it was to reboot the server – yet ASM works fine all along and the diskgroup was never affected:

SQL> alter diskgroup V512DATA check all;

Mon Jul 20 16:46:23 2015

NOTE: starting check of diskgroup V512DATA

Mon Jul 20 16:46:23 2015

GMON querying group 1 at 5 for pid 7, osid 9255

GMON checking disk 0 for group 1 at 6 for pid 7, osid 9255

GMON querying group 1 at 7 for pid 7, osid 9255

GMON checking disk 1 for group 1 at 8 for pid 7, osid 9255

GMON querying group 1 at 9 for pid 7, osid 9255

GMON checking disk 2 for group 1 at 10 for pid 7, osid 9255

GMON querying group 1 at 11 for pid 7, osid 9255

GMON checking disk 3 for group 1 at 12 for pid 7, osid 9255

GMON querying group 1 at 13 for pid 7, osid 9255

GMON checking disk 4 for group 1 at 14 for pid 7, osid 9255

GMON querying group 1 at 15 for pid 7, osid 9255

GMON checking disk 5 for group 1 at 16 for pid 7, osid 9255

GMON querying group 1 at 17 for pid 7, osid 9255

GMON checking disk 6 for group 1 at 18 for pid 7, osid 9255

GMON querying group 1 at 19 for pid 7, osid 9255

GMON checking disk 7 for group 1 at 20 for pid 7, osid 9255

Mon Jul 20 16:46:23 2015

SUCCESS: check of diskgroup V512DATA found no errors

Mon Jul 20 16:46:23 2015

SUCCESS: alter diskgroup V512DATA check all

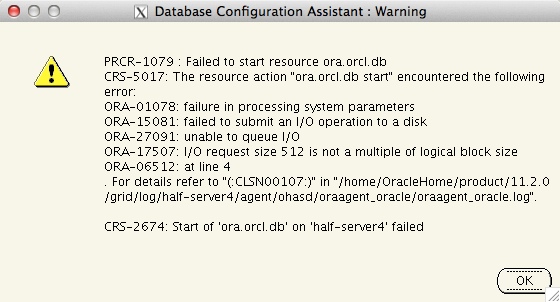

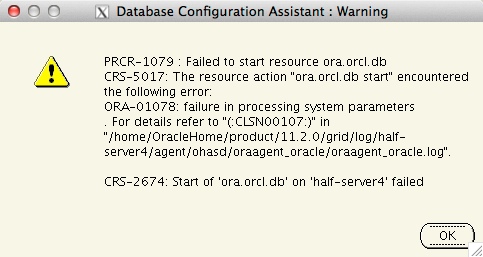

So there you go. ASMFD: it does what it says on the tin. Just don’t try using it with 4kN devices…

Trim and Unmap

At this point, what will the volumes on the storage array be showing? We know that 10TB has been allocated, of which 5TB has been used. So shouldn’t that leave 5TB free? Probably not, because almost every All-Flash storage array uses data reduction technologies such as compression, deduplication and zero-detect. Since each block in the tablespace contains a unique block number, deduplication isn’t going to add any value (which is why arrays like the Kaminario allow dedupe to be disabled on a per-volume basis), but compression is going to have great fun with all the emptiness inside each Oracle block so the storage array will probably show significantly less than 5TB used.

ASRU doesn’t issue UNMAP commands. Instead, it takes advantage of the fact that most modern storage platforms (including 3PAR, Pure Storage and Kaminario) treat blocks full of zeros as free space (a feature known as zero detect). Thus what ASRU does – when manually run by a DBA (presumably during a change window in the middle of the night while rubbing a lucky rabbit’s foot and praying to the gods of all major religions) – is compact the remaining data in any diskgroup toward the start of the volume and then write zeros above the high watermark where this compacted data ends.
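To make the zero-fill half of that idea concrete, here is a toy sketch that overwrites everything above a given high watermark with zeros. It is emphatically not ASRU: it does no compaction, it knows nothing about ASM, and it works on an ordinary file standing in for a volume. The function name and the 4096 byte block size are assumptions chosen purely for illustration.

```shell
#!/bin/bash
# Overwrite everything above a high watermark with zeros, in 4096-byte blocks.
# $1: image file (a plain file standing in for a volume)
# $2: high watermark, expressed in 4096-byte blocks
zero_above_hwm() {
    local img=$1 hwm=$2 size total
    size=$(wc -c < "$img")
    total=$((size / 4096))
    # Nothing to do if the watermark is at or beyond the end of the image.
    [ "$total" -gt "$hwm" ] || return 0
    # conv=notrunc leaves the data below the watermark untouched.
    dd if=/dev/zero of="$img" bs=4096 seek="$hwm" count=$((total - hwm)) conv=notrunc 2>/dev/null
}
```

On an array with zero detect, the blocks written this way are the ones that would be treated as free space.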